Introduction

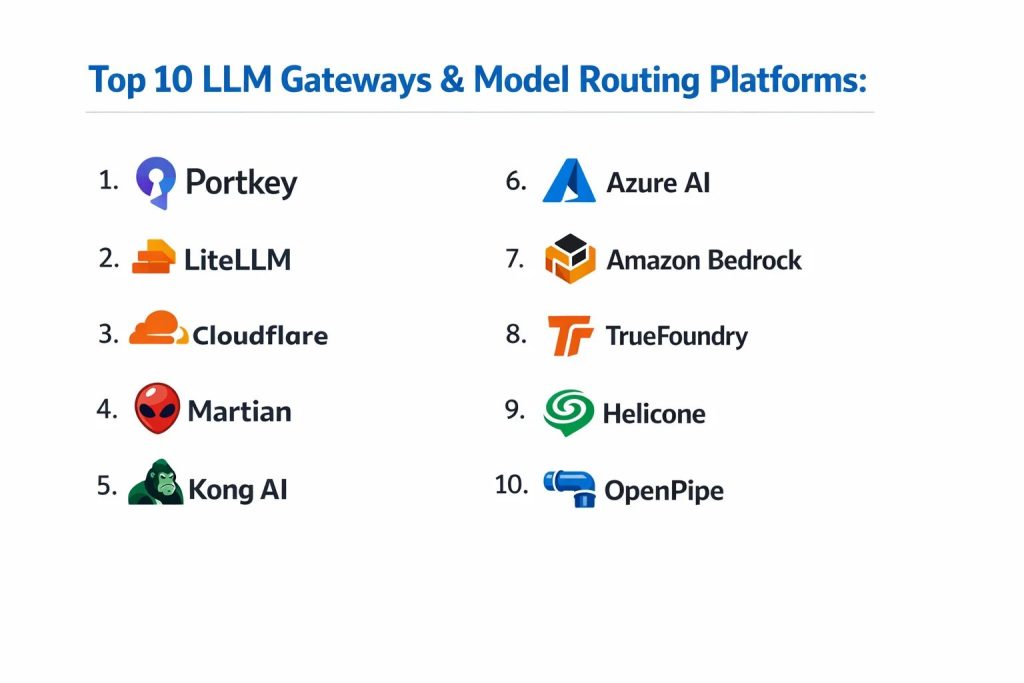

Large Language Model (LLM) gateways and model routing platforms represent the critical infrastructure layer for modern artificial intelligence deployments. As organizations move beyond simple experiments to production-grade agentic workflows, the need for a unified interface becomes paramount. These platforms act as an intelligent proxy between application code and a fragmented ecosystem of model providers. By abstracting away the specific requirements of individual APIs, gateways allow developers to treat diverse models as interchangeable commodities. This abstraction is essential for maintaining service availability, as it enables automated failover and load balancing across different regions and providers without requiring code changes.

The strategic importance of these tools lies in their ability to provide centralized governance, cost control, and performance optimization. In a corporate environment, unmanaged access to various AI providers can lead to significant security risks and unpredictable expenses. LLM gateways address these challenges by enforcing unified authentication, implementing granular rate limits, and providing detailed observability into every token consumed. Furthermore, advanced routing platforms use real-time logic to direct queries to the most cost-effective or highest-performing model based on the specific intent of the prompt. For any enterprise aiming to build reliable, compliant, and scalable AI systems, integrating a robust gateway is no longer optional; it is a foundational requirement.

Best for: Enterprise AI architects, DevOps teams managing high-throughput LLM applications, and organizations requiring strict data residency and security compliance across multiple AI providers.

Not ideal for: Individual hobbyists using a single model for non-production tasks or simple projects where the overhead of managing a proxy outweighs the benefits of model flexibility.

Key Trends in LLM Gateways & Model Routing Platforms

The primary shift in the industry is toward semantic routing, where the gateway understands the context of a request and automatically chooses the best-fit model, such as routing complex reasoning to a high-end model while sending simple summaries to a faster, cheaper alternative. We are also seeing a massive push for “Zero Data Retention” (ZDR) pathways, allowing sensitive industries like finance and healthcare to use public models without their data being stored for training. Real-time guardrails are becoming standard, with gateways now capable of scanning for PII, prompt injections, and toxic outputs at the wire level, preventing harmful content from ever reaching the end-user or the model.
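The routing idea described above can be illustrated with a deliberately simple sketch. It is not how any particular gateway works: the model names are placeholders, and a keyword and length heuristic stands in for the intent classifier or embedding model a real semantic router would use.

```python
from dataclasses import dataclass

# Hypothetical model tiers; these names are placeholders, not real model IDs.
CHEAP_MODEL = "small-fast-model"
PREMIUM_MODEL = "large-reasoning-model"

# Crude stand-in for an intent classifier: phrases that hint at multi-step reasoning.
REASONING_HINTS = ("prove", "step by step", "analyze", "debug", "trade-off")


@dataclass
class RoutingDecision:
    model: str
    reason: str


def route(prompt: str, long_prompt_chars: int = 2000) -> RoutingDecision:
    """Pick a model tier from surface features of the prompt."""
    text = prompt.lower()
    for hint in REASONING_HINTS:
        if hint in text:
            return RoutingDecision(PREMIUM_MODEL, f"matched hint '{hint}'")
    if len(prompt) > long_prompt_chars:
        return RoutingDecision(PREMIUM_MODEL, "long prompt")
    return RoutingDecision(CHEAP_MODEL, "default to cheaper tier")


print(route("Summarize this email in one line."))
print(route("Analyze the trade-off between latency and cost."))
```

Returning the reason alongside the model makes each routing decision easy to log and audit, which matters once routing rules start to affect cost.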

Another significant trend is the rise of edge-based gateways that minimize latency by processing routing logic at the network’s perimeter, close to the user. This is coupled with the emergence of unified billing systems, which aggregate costs from dozens of providers into a single enterprise invoice. Finally, the integration of OpenTelemetry and advanced distributed tracing is allowing teams to visualize the entire lifecycle of an AI request, from the initial prompt to the final token, providing the same level of observability that has long been available in traditional microservices architectures.

How We Selected These Tools

The selection process for these top LLM gateways involved a rigorous evaluation of their production readiness and technical depth. We prioritized platforms that offer a unified API compatible with industry standards, ensuring that teams can switch models with zero code modifications. Market reliability was a major factor, with preference given to tools that demonstrate sub-millisecond overhead and high availability under heavy loads. We also scrutinized the security frameworks of each platform, looking for robust features like role-based access control, secret management, and compliance with global data protection standards.

Integration capabilities were another key metric, as a gateway must sit comfortably within existing CI/CD and monitoring pipelines. We looked for tools that provide native support for a wide array of providers, including both proprietary cloud models and self-hosted open-source variants. Furthermore, we assessed the quality of observability features, such as real-time token tracking and cost attribution per team or project. Finally, the balance between open-source flexibility and managed service convenience was considered to ensure the list serves both high-control engineering teams and speed-oriented enterprise developers.

1. Portkey

Portkey is an enterprise-grade AI gateway designed to streamline the management of hundreds of LLMs through a single, unified interface. It is built for teams that require deep observability and production-level reliability, offering a robust control plane for monitoring and securing AI interactions.

Key Features

The platform features a multi-provider gateway that supports over 1,000 models with a unified API. It includes advanced semantic caching that stores similar requests to reduce costs and latency. The tool provides a visual prompt manager that allows teams to iterate on prompts without deploying new code. It also features automatic fallbacks and retries, ensuring that if one provider goes down, the request is instantly rerouted. Additionally, it offers detailed traces and logs for every request, providing complete visibility into performance and spend.

Pros

It offers an incredibly fast and reliable proxy layer with deep integration for popular frameworks. The cost-tracking features are highly granular, allowing for precise budget management across different departments.

Cons

The platform can be complex to configure for very simple use cases. Some of the most advanced governance features are reserved for higher enterprise tiers.

Platforms and Deployment

Cloud-hosted SaaS and self-hostable via Docker/NPX.

Security and Compliance

Supports SSO, RBAC, and integrates with major secret managers like Vault and AWS Secrets Manager.

Integrations and Ecosystem

Directly integrates with OpenAI, Anthropic, Azure, and Google Vertex AI, while providing SDKs for Python and JavaScript.

Support and Community

Offers dedicated enterprise support and has an active community of professional AI engineers.

2. LiteLLM

LiteLLM has become the preferred open-source standard for developers looking to normalize the fragmented LLM API landscape. It operates as a lightweight reverse proxy that translates various provider schemas into a single, OpenAI-compatible format.

Key Features

The software provides a universal API that maps headers and message structures across 100+ providers. It includes a built-in load balancer that can distribute traffic based on custom weights or performance metrics. The platform features virtual API keys that allow admins to set per-user or per-team spend limits and rate limits. It also supports traffic mirroring, which is essential for testing new models with production data without affecting the end-user. Detailed usage tracking and cost attribution are handled through a simple dashboard or a connected database.
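Weight-based traffic distribution of the kind mentioned above can be sketched in a few lines. This is a generic illustration rather than LiteLLM's implementation or configuration format; the deployment names and weights are made up.

```python
import random
from collections import Counter


def pick_deployment(weights: dict[str, float], rng: random.Random) -> str:
    """Choose one deployment at random, proportionally to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


# Hypothetical deployments and weights, for illustration only.
weights = {"provider-a": 0.7, "provider-b": 0.2, "provider-c": 0.1}
rng = random.Random(42)  # seeded so repeated runs give the same sequence

counts = Counter(pick_deployment(weights, rng) for _ in range(10_000))
print(counts.most_common())
```

Over many requests, each deployment receives a share of traffic close to its weight. A production balancer would typically also account for health checks and observed latency.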

Pros

Being open-source, it offers maximum control and is ideal for organizations that need to audit the codebase for security. It is remarkably easy to set up for local development and small-scale production.

Cons

Managing a high-throughput instance in production requires significant operational overhead for scaling and state management. The free version lacks advanced enterprise features like native SSO.

Platforms and Deployment

Self-hosted via Python, Docker, or Kubernetes; also available as a managed proxy.

Security and Compliance

Supports secure key storage and provides mechanisms for data anonymization and prompt hygiene.

Integrations and Ecosystem

Compatible with any tool that uses the OpenAI SDK, including LangChain and LlamaIndex.

Support and Community

Boasts a massive GitHub community and professional support for enterprise customers.

3. Cloudflare AI Gateway

Cloudflare AI Gateway leverages its global edge network to provide a highly performant proxy layer for LLM traffic. It is designed to offer speed, security, and observability for applications running at the network perimeter.

Key Features

The gateway uses Cloudflare’s global infrastructure to cache responses close to the user, significantly reducing latency. It includes real-time rate limiting to prevent API abuse and protect backend quotas. The platform features a visual routing engine that allows users to split traffic between models for A/B testing or gradual rollouts. It provides centralized logging and analytics that give a clear view of token usage and costs across multiple providers. Additionally, it integrates with Cloudflare’s wider security stack for PII detection and content moderation.
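To make the traffic-splitting idea concrete, here is a minimal sketch of a percentage-based rollout. It does not use any Cloudflare API; the model names are placeholders, and hashing the user ID is one common way (assumed here) to keep each user on the same variant across requests.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_model(
    user_id: str,
    candidate_percent: int,
    control: str = "model-current",      # placeholder name
    candidate: str = "model-candidate",  # placeholder name
) -> str:
    """Send roughly candidate_percent of users to the candidate model."""
    return candidate if bucket(user_id) < candidate_percent else control


# The same user always lands on the same side of the split.
print(choose_model("user-123", candidate_percent=10))
print(choose_model("user-123", candidate_percent=10))
```

Raising `candidate_percent` step by step gives a gradual rollout: users already on the candidate stay there, and new buckets are added as the percentage grows.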

Pros

It is incredibly easy for existing Cloudflare users to adopt, requiring only a simple endpoint change. The global edge caching provides a performance boost that is hard to match with centralized solutions.

Cons

The platform is tightly coupled with the Cloudflare ecosystem, which may lead to vendor lock-in. Logging and events are subject to tiered limits that can be reached quickly in high-volume environments.

Platforms and Deployment

Managed SaaS on Cloudflare’s edge network.

Security and Compliance

Inherits Cloudflare’s enterprise-grade security, including DLP integration and GDPR compliance.

Integrations and Ecosystem

Works seamlessly with Cloudflare Workers and supports major providers like OpenAI, Anthropic, and Google.

Support and Community

Backed by Cloudflare’s global support organization and extensive documentation.

4. Martian

Martian is a specialized model routing platform that focuses on optimizing the trade-off between model performance and cost. It uses advanced predictive logic to determine the most efficient model for every individual prompt in real-time.

Key Features

The core technology is a “model router” that predicts how a model will perform on a specific prompt without actually running it. It automatically selects the cheapest model capable of meeting a user’s quality requirements. The platform provides a single endpoint that gives access to a combined pool of leading LLMs. It features custom tuning for the routing logic, allowing organizations to prioritize either extreme quality or maximum cost savings. The system also aggregates outputs to provide higher accuracy than any single model could achieve on its own.
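The selection rule described above (the cheapest model predicted to meet a quality bar) can be sketched as follows. Martian's routing logic is proprietary, so everything here is an assumption for illustration: the candidate names, the prices, and especially `toy_predictor`, which stands in for a learned quality predictor.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    cost_per_1k_tokens: float  # illustrative prices, not real ones


# Made-up baseline quality per model; a real router would learn this per prompt.
BASE_QUALITY = {"small": 0.70, "medium": 0.85, "large": 0.95}


def toy_predictor(candidate: Candidate, prompt: str) -> float:
    """Toy stand-in: assume longer prompts are harder for every model."""
    penalty = min(len(prompt) / 10_000, 0.2)
    return BASE_QUALITY[candidate.name] - penalty


def pick_cheapest(
    candidates: Sequence[Candidate],
    predict_quality: Callable[[Candidate, str], float],
    prompt: str,
    min_quality: float,
) -> Candidate:
    """Return the cheapest candidate whose predicted quality meets the bar."""
    eligible = [c for c in candidates if predict_quality(c, prompt) >= min_quality]
    if not eligible:
        # Nothing meets the bar: fall back to the best predicted quality.
        return max(candidates, key=lambda c: predict_quality(c, prompt))
    return min(eligible, key=lambda c: c.cost_per_1k_tokens)


candidates = [
    Candidate("small", 0.1),
    Candidate("medium", 0.5),
    Candidate("large", 2.0),
]
print(pick_cheapest(candidates, toy_predictor, "hi", min_quality=0.8).name)
```

The `min_quality` parameter plays the role of the tuning knob mentioned above: raising it favors quality, lowering it favors cost savings.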

Pros

It consistently delivers significant cost savings for high-volume applications by avoiding the use of expensive models for simple tasks. The integration is seamless, often requiring only a change to the API key and base URL.

Cons

The routing logic itself is proprietary, which may be a concern for teams requiring total transparency. It is primarily a routing tool and may lack some of the broader management features of a full gateway.

Platforms and Deployment

Managed SaaS platform.

Security and Compliance

Handles API keys securely and provides standard enterprise-level data protection.

Integrations and Ecosystem

Connects to all major LLM providers and is designed to work with any application using a standard chat-completion interface.

Support and Community

Offers direct support for enterprise clients and has been adopted by engineering teams at major tech companies.

5. Kong AI Gateway

Kong AI Gateway extends Kong's widely used API management platform to generative AI traffic. It is designed for large enterprises that want to govern their LLM traffic using the same tools they use for their traditional microservices.

Key Features

The software utilizes a plugin-based architecture to add AI-specific features like prompt validation and PII masking. It supports multi-provider routing with automatic failover and load balancing across different cloud regions. It includes advanced rate limiting that is specifically designed to handle tokens and request quotas. The platform provides a centralized control plane for managing API keys and access policies across the entire organization. It also features native support for agentic workflows and Model Context Protocol (MCP) servers.
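Token-aware rate limiting differs from request counting in that each call consumes a variable amount of quota. The sketch below shows the general idea with a token bucket measured in LLM tokens. It is not Kong's plugin code or configuration; the class name and parameters are hypothetical, and time is passed in explicitly to keep the example deterministic.

```python
class TokenBudgetLimiter:
    """Token bucket whose units are LLM tokens rather than requests."""

    def __init__(self, capacity: int, refill_per_second: float, now: float = 0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.available = float(capacity)
        self.last = now

    def allow(self, tokens: int, now: float) -> bool:
        """Refill for the elapsed time, then admit the call if quota remains."""
        elapsed = max(0.0, now - self.last)
        self.available = min(
            self.capacity, self.available + elapsed * self.refill_per_second
        )
        self.last = now
        if tokens <= self.available:
            self.available -= tokens
            return True
        return False


limiter = TokenBudgetLimiter(capacity=1000, refill_per_second=100.0)
print(limiter.allow(800, now=0.0))  # large prompt fits in the initial budget
print(limiter.allow(300, now=0.0))  # rejected: only 200 tokens left
print(limiter.allow(300, now=1.0))  # admitted after one second of refill
```

In practice the token count for a request is only known exactly after the response arrives, so a gateway would usually estimate up front and reconcile afterwards.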

Pros

It is a natural choice for organizations already using Kong, as it integrates directly into their existing infrastructure. The security and governance features are mature and proven at enterprise scale.

Cons

The operational complexity can be high for teams not familiar with the Kong ecosystem. It may feel heavy for standalone AI projects that don’t need a full API management suite.

Platforms and Deployment

Multi-cloud, hybrid, and on-premises via Docker or Kubernetes.

Security and Compliance

Offers robust RBAC, audit logging, and is compliant with the strictest global enterprise standards.

Integrations and Ecosystem

Integrates with all major LLMs and supports hundreds of existing Kong plugins for security and monitoring.

Support and Community

Provides top-tier enterprise SLAs and has a vast global community of API developers.

6. Azure AI Gateway

Azure AI Gateway is a native solution within the Microsoft ecosystem, designed to provide a secure and scalable entry point for all AI services. It is deeply integrated with Azure API Management, offering a unified way to govern both traditional and AI-driven APIs.

Key Features

The platform features a unified AI gateway pattern that simplifies the management of various Azure OpenAI and other model deployments. It includes built-in content safety tools that scan for harmful prompts and responses in real-time. The system provides detailed metrics and dashboards integrated directly into the Azure portal for cost and performance tracking. It supports high-availability configurations with automated load balancing across global Azure regions. It also offers advanced prompt shields to protect against injection attacks and jailbreaks.

Pros

It is the most secure and compliant option for organizations already invested in the Microsoft cloud. The integration with Azure’s identity and security tools is seamless and highly automated.

Cons

It is primarily focused on the Azure ecosystem, making it less ideal for true multi-cloud strategies that include non-Azure providers. The interface carries the usual complexity of the Azure portal, which can be daunting for new users.

Platforms and Deployment

Managed service within the Azure cloud.

Security and Compliance

Meets major global compliance standards including HIPAA, GDPR, and SOC 2.

Integrations and Ecosystem

Deeply integrated with Azure OpenAI, Microsoft 365, and the broader Azure AI services suite.

Support and Community

Backed by Microsoft’s global enterprise support and an extensive network of certified partners.

7. Amazon Bedrock

Amazon Bedrock is a fully managed service that provides a unified API for accessing foundation models from AWS and leading AI startups. It is designed to offer a serverless experience for building and scaling generative AI applications.

Key Features

The service provides a single endpoint for models from Anthropic, Meta, Mistral, and Amazon’s own Titan family. It includes built-in guardrails for implementing responsible AI policies across all supported models. The platform features automated model evaluation tools that help teams choose the right model for their specific task. It supports secure, private connections to internal data for RAG workflows without exposing data to the public internet. It also offers a serverless architecture that scales automatically without the need for GPU management.

Pros

The unified API makes it incredibly simple to switch between models by just changing a model ID. It provides the strongest security guarantees for data privacy within the AWS environment.

Cons

The initial setup and permission management can be complex due to the broad nature of AWS IAM. It lacks some of the third-party model variety found in more open gateway platforms.

Platforms and Deployment

Managed serverless service on AWS.

Security and Compliance

Provides industry-leading security with data encryption at rest and in transit, and multiple compliance certifications.

Integrations and Ecosystem

Integrates closely with S3, Lambda, and the wider AWS data and machine learning stack.

Support and Community

Supported by AWS’s massive professional services team and a global community of cloud architects.

8. TrueFoundry

TrueFoundry provides a comprehensive LLM gateway that is part of a larger platform for hosting and managing machine learning models. It is designed for teams that need to bridge the gap between commercial APIs and their own self-hosted models.

Key Features

The gateway offers a unified API that handles authentication and routing for both external providers and internally hosted models. It includes a sophisticated RBAC system that allows administrators to manage access at a very granular level. The platform features a centralized dashboard for tracking token-level usage and costs across different teams and projects. It supports automated fallbacks and load balancing to ensure 99.9% uptime for AI applications. It also provides built-in caching and rate limiting to optimize performance and prevent budget overruns.

Pros

It is uniquely strong at managing a hybrid environment of public and private models. The platform is designed for rapid deployment, allowing teams to set up a secure gateway in minutes.

Cons

The full platform includes many features that may be unnecessary for teams only looking for a simple API proxy. It requires a subscription for the full managed experience.

Platforms and Deployment

Cloud-managed SaaS or deployed within a customer’s VPC (AWS, GCP, Azure).

Security and Compliance

Ensures data stays within the customer’s infrastructure when using VPC deployment, meeting strict residency requirements.

Integrations and Ecosystem

Compatible with all major LLM providers and integrates with standard Kubernetes-based ML stacks.

Support and Community

Provides dedicated enterprise support and comprehensive technical documentation.

9. Helicone

Helicone is an open-source observability and gateway platform specifically built for AI developers. It focuses on providing extreme visibility into every request, helping teams debug and optimize their LLM applications with ease.

Key Features

The platform features a one-line integration that turns any OpenAI SDK call into a proxied and logged request. It provides detailed analytics on latency, token usage, and exact costs with zero markup on provider fees. The tool includes a prompt management system that allows for rapid testing and versioning of prompts in a playground environment. It supports automatic fallbacks and retries across multiple providers to maintain service reliability. It also features semantic caching and custom property tracking to group logs by user or feature.

Pros

The “zero markup” pricing model makes it very attractive for budget-conscious startups. The observability dashboards are among the most intuitive and detailed in the industry.

Cons

As a primarily observability-focused tool, it may lack some of the deep enterprise governance features found in Kong or Azure. The community-supported version lacks formal SLAs.

Platforms and Deployment

Cloud-hosted SaaS and self-hostable open-source version.

Security and Compliance

Offers SAML SSO and supports data residency in both the US and EU.

Integrations and Ecosystem

Supports over 100 models and integrates directly with LiteLLM and OpenRouter.

Support and Community

Maintains an active Discord and GitHub community for real-time developer support.

10. OpenPipe

OpenPipe is a specialized platform that focuses on the intersection of model routing and data collection for fine-tuning. It acts as a gateway that not only routes requests but also captures high-quality data to help teams transition from expensive models to smaller, custom-trained ones.

Key Features

The software provides a proxy that records every request and response for use in future training datasets. It features a tool for comparing the performance of different models on specific production traffic. The platform includes an automated pipeline for fine-tuning open-source models based on the data collected through the gateway. It supports a unified API for easy switching between a “teacher” model (like GPT-4) and a “student” model. It also provides standard gateway features like rate limiting and basic observability.
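The record-everything proxy described above can be sketched as a thin wrapper that writes each request and response pair to a JSON Lines file for later use as training data. This is a generic illustration, not OpenPipe's SDK; `fake_teacher` is a stand-in for a real model call, and the record layout is an assumption.

```python
import io
import json
from typing import Callable, TextIO


def make_recording_proxy(
    call_model: Callable[[dict], dict], sink: TextIO
) -> Callable[[dict], dict]:
    """Wrap a model call so every request/response pair is appended to sink."""

    def proxied(request: dict) -> dict:
        response = call_model(request)
        record = {"request": request, "response": response}
        sink.write(json.dumps(record) + "\n")  # one JSON object per line
        return response

    return proxied


def fake_teacher(request: dict) -> dict:
    """Stand-in for an expensive 'teacher' model: upper-cases the last message."""
    return {"content": request["messages"][-1]["content"].upper()}


sink = io.StringIO()  # a real deployment would use a file or a database
proxy = make_recording_proxy(fake_teacher, sink)
reply = proxy({"model": "teacher", "messages": [{"role": "user", "content": "hello"}]})
print(reply)
print(sink.getvalue())
```

Any scrubbing of sensitive fields would belong inside `proxied`, before the record is written, so that raw data never reaches the training set.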

Pros

It is the best tool for teams that have a long-term strategy of moving away from expensive proprietary models. The ability to automatically build training sets from production data is a massive time-saver.

Cons

Its focus is narrower than that of a general-purpose enterprise gateway, so for some teams it is a specialized addition rather than a complete solution. The data collection features require careful privacy management.

Platforms and Deployment

Managed SaaS platform.

Security and Compliance

Includes tools for scrubbing sensitive data from collected logs before they are used for training.

Integrations and Ecosystem

Integrates with major LLM providers and specialized fine-tuning infrastructure.

Support and Community

Offers targeted technical support for teams focused on model optimization and fine-tuning.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| 1. Portkey | Enterprise Governance | Cloud, Self-hosted | Hybrid | Semantic Caching | 4.8/5 |
| 2. LiteLLM | Open-source control | Win, Mac, Linux | Self-hosted | 100+ Provider Support | 4.9/5 |
| 3. Cloudflare | Edge Performance | Managed Edge | SaaS | Global Edge Network | 4.5/5 |
| 4. Martian | Cost Optimization | Cloud-only | SaaS | Predictive Routing | 4.6/5 |
| 5. Kong AI | Enterprise API Mgmt | Multi-cloud, On-prem | Hybrid | Plugin-based Security | 4.7/5 |
| 6. Azure AI | Microsoft Ecosystem | Azure Cloud | SaaS | Content Safety Shields | 4.4/5 |
| 7. Amazon Bedrock | AWS Ecosystem | AWS Cloud | SaaS | Serverless Model Access | 4.5/5 |
| 8. TrueFoundry | Hybrid/VPC Teams | Cloud, VPC | Hybrid | Unified RBAC Dashboard | 4.6/5 |
| 9. Helicone | Developer Observability | Cloud, Self-hosted | Hybrid | Zero Markup Pricing | 4.7/5 |
| 10. OpenPipe | Model Fine-tuning | Cloud-only | SaaS | Data Collection Engine | 4.3/5 |

Evaluation & Scoring of LLM Gateways

The scoring below is a comparative model intended to help shortlisting. Each criterion is scored from 1–10, and a weighted total on the same 1–10 scale is then calculated using the weights listed. These are analyst estimates based on typical fit and common workflow requirements, not public ratings.

Weights:

- Core features – 25%

- Ease of use – 15%

- Integrations & ecosystem – 15%

- Security & compliance – 10%

- Performance & reliability – 10%

- Support & community – 10%

- Price / value – 15%

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1. Portkey | 10 | 8 | 10 | 9 | 9 | 9 | 8 | 9.10 |
| 2. LiteLLM | 9 | 7 | 10 | 8 | 9 | 8 | 10 | 8.80 |
| 3. Cloudflare | 8 | 9 | 8 | 9 | 10 | 9 | 7 | 8.40 |
| 4. Martian | 8 | 9 | 8 | 7 | 9 | 7 | 9 | 8.20 |
| 5. Kong AI | 10 | 5 | 9 | 10 | 8 | 10 | 6 | 8.30 |
| 6. Azure AI | 9 | 7 | 7 | 10 | 8 | 10 | 7 | 8.20 |
| 7. Amazon Bedrock | 9 | 8 | 7 | 10 | 9 | 10 | 8 | 8.60 |
| 8. TrueFoundry | 9 | 7 | 9 | 9 | 8 | 9 | 7 | 8.30 |
| 9. Helicone | 8 | 10 | 9 | 7 | 9 | 8 | 9 | 8.60 |
| 10. OpenPipe | 7 | 8 | 8 | 7 | 8 | 8 | 8 | 7.65 |
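For readers who want to re-weight the criteria for their own priorities, the weighted total is simply the sum of each score multiplied by its weight. The sketch below uses the weights listed above; the `example` scores are hypothetical and do not correspond to any tool in the table.

```python
# Weights from the list above, expressed as fractions that sum to 1.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict[str, float]) -> float:
    """Return the weighted sum of per-criterion scores, rounded to 2 decimals."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)


# Hypothetical scores for an imaginary tool.
example = {
    "core": 8,
    "ease": 6,
    "integrations": 8,
    "security": 6,
    "performance": 8,
    "support": 6,
    "value": 8,
}
print(weighted_total(example))
```

Changing the values in `WEIGHTS` (while keeping their sum at 1) is an easy way to see how the ranking shifts when, for example, security matters more than price.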

How to interpret the scores:

- Use the weighted total to shortlist candidates, then validate with a pilot.

- A lower score can mean specialization, not weakness.

- Security and compliance scores reflect controllability and governance fit, because certifications are often not publicly stated.

- Actual outcomes vary with traffic volume, team skills, and process maturity.

Which LLM Gateway & Model Routing Platform Is Right for You?

Solo / Freelancer

For independent developers, a tool that is easy to set up and costs nothing to start is the obvious choice. You want a gateway that handles the tedious work of API reformatting and gives you a clear view of your spend without requiring complex infrastructure management.

SMB

Small businesses should prioritize platforms that provide high-speed observability and cost-saving features like caching. As a small team, you need a managed solution that keeps your applications running reliably without needing a dedicated DevOps engineer to manage the gateway.

Mid-Market

Mid-market companies need a balance of governance and flexibility. You should look for platforms that offer virtual API keys and team-level budget controls so you can scale your AI initiatives across multiple departments while keeping a firm grip on total expenditure.

Enterprise

At the enterprise level, security and compliance are the non-negotiables. You require a platform that can integrate with your existing single sign-on system, provide an immutable audit trail of every request, and potentially run within your own private cloud or VPC to ensure data sovereignty.

Budget vs Premium

The budget choice often leads to open-source tools where you pay only for your underlying model usage. Premium services, while adding a platform fee, provide the technical support, uptime guarantees, and advanced security guardrails that are essential for mission-critical deployments.

Feature Depth vs Ease of Use

If you have a highly technical team that wants to build custom logic into the gateway, choose a platform with a rich plugin ecosystem. If you just want to get your application to production as fast as possible, a one-line integration managed service is far more valuable.

Integrations & Scalability

Consider where your data and applications currently live. If you are deeply committed to a specific cloud provider, their native gateway will offer the tightest integration. If you are building a cloud-agnostic platform, look for a neutral gateway that treats every provider equally.

Security & Compliance Needs

Evaluate the sensitivity of the data your LLMs will process. If you are in a highly regulated industry, look for gateways that offer PII masking and local data processing. Ensure the platform aligns with your corporate policies for data retention and encryption.

Frequently Asked Questions (FAQs)

1. Does using an LLM gateway add significant latency?

Modern gateways are designed for low overhead, typically adding sub-millisecond to low single-digit millisecond latency per request. When semantic caching produces a cache hit, the response can arrive much faster than a fresh model call would, so the overall response time for the end-user may actually improve.

2. Can I switch from OpenAI to Anthropic without changing my code?

Yes, that is a primary function of these gateways. They provide an OpenAI-compatible endpoint that automatically translates your requests into the correct format for whatever model you choose to route to.
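As a rough illustration of the translation step, the sketch below converts an OpenAI-style chat request into the shape used by Anthropic's Messages API, where the system prompt is a top-level `system` field and `max_tokens` is required. It is a simplified assumption of what a gateway does: it handles plain-text messages only, and the target model ID and default token limit are placeholders.

```python
def openai_to_anthropic(
    request: dict, target_model: str, default_max_tokens: int = 1024
) -> dict:
    """Translate a plain-text OpenAI-style chat request to Anthropic's shape."""
    system_parts = []
    messages = []
    for msg in request["messages"]:
        if msg["role"] == "system":
            # Anthropic takes the system prompt outside the message list.
            system_parts.append(msg["content"])
        else:
            messages.append({"role": msg["role"], "content": msg["content"]})

    translated = {
        "model": target_model,
        "messages": messages,
        # Required by Anthropic; the default here is an arbitrary assumption.
        "max_tokens": request.get("max_tokens", default_max_tokens),
    }
    if system_parts:
        translated["system"] = "\n\n".join(system_parts)
    return translated


openai_request = {
    "model": "any-openai-style-model",  # placeholder
    "messages": [
        {"role": "system", "content": "You are terse."},
        {"role": "user", "content": "Name one prime number."},
    ],
}
print(openai_to_anthropic(openai_request, target_model="target-model-id"))
```

Real gateways also have to map tool calls, images, streaming events, and error formats, which is where most of the translation effort goes.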

3. How do gateways help in controlling AI costs?

Gateways allow you to set hard spend limits on individual API keys, implement aggressive caching to avoid redundant calls, and use routing logic to send simple tasks to cheaper, smaller models.
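A hard spend limit on a key can be sketched as a running total that rejects any charge that would exceed the budget. The class, the prices, and the per-1,000-token pricing scheme below are illustrative assumptions, not any vendor's implementation.

```python
class BudgetExceeded(Exception):
    """Raised when a charge would push a key past its spend limit."""


class KeyBudget:
    """Tracks spend for one API key against a hard limit in USD."""

    def __init__(self, limit_usd: float):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(
        self,
        prompt_tokens: int,
        completion_tokens: int,
        price_in_per_1k: float,
        price_out_per_1k: float,
    ) -> float:
        """Record the cost of one call, or raise if it would break the limit."""
        cost = (
            prompt_tokens / 1000 * price_in_per_1k
            + completion_tokens / 1000 * price_out_per_1k
        )
        if self.spent_usd + cost > self.limit_usd:
            raise BudgetExceeded(
                f"limit {self.limit_usd:.2f} USD would be exceeded"
            )
        self.spent_usd += cost
        return cost


budget = KeyBudget(limit_usd=1.00)
print(budget.charge(1000, 500, price_in_per_1k=0.5, price_out_per_1k=1.0))
```

Because the true cost is only known after the model responds, a gateway would usually combine a pre-call estimate with a post-call reconciliation like this one.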

4. What is the difference between an AI gateway and a traditional API gateway?

While both handle routing and security, an AI gateway is specialized for LLMs. It understands concepts like tokens, prompt injections, and semantic caching, which a standard API gateway treats as generic text data.

5. Are these platforms safe for sensitive data?

Many platforms offer features like PII scrubbing and Zero Data Retention modes. For maximum security, some gateways can be deployed within your own private infrastructure so that data never leaves your controlled environment.

6. Do I need to manage multiple API keys with a gateway?

No, you typically only manage one set of credentials for the gateway itself. The gateway securely stores and manages all the individual provider keys, simplifying your backend configuration.

7. What happens if a model provider goes down?

Top-tier gateways feature automatic failover. If the primary model or provider returns an error, the gateway instantly reroutes the request to a pre-configured backup model, maintaining your application’s uptime.
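The failover behavior can be pictured as trying providers in priority order and returning the first success. The sketch below is a minimal version under that assumption; the provider functions are stand-ins that simulate an outage rather than real API calls.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised when a provider call fails (timeout, 5xx, rate limit, and so on)."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Callable[[str], str]]]
) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, answer) on first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers for demonstration; real ones would make HTTP calls.
def primary(prompt: str) -> str:
    raise ProviderError("503 from primary")


def backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"


used, answer = complete_with_fallback(
    "ping", [("primary", primary), ("backup", backup)]
)
print(used, "->", answer)
```

Collecting the individual errors before raising keeps the final failure message useful when every provider in the chain is down.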

8. Can I use a gateway for self-hosted models?

Yes, most gateways are designed to be provider-agnostic. You can connect your own models running on Kubernetes or local servers alongside commercial APIs, managing them all from a single dashboard.

9. How does semantic caching work?

Unlike traditional caching which looks for an exact text match, semantic caching uses embeddings to find requests with the same meaning. This allows the gateway to return a cached response even if the wording of the prompt is slightly different.
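The lookup can be sketched as a nearest-neighbor search over stored prompt embeddings with a similarity threshold. In the example below, `toy_embed` is a bag-of-words counter over a tiny made-up vocabulary, used only so the code runs without an embedding model; a real gateway would call an actual embedding model and a vector index instead.

```python
import math
import re
from typing import Callable, Optional

# Tiny made-up vocabulary for the toy embedding.
VOCAB = ("refund", "order", "cancel", "password", "reset")


def toy_embed(text: str) -> list[float]:
    """Toy stand-in for an embedding model: counts of vocabulary words."""
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(w)) for w in VOCAB]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    def __init__(self, embed: Callable[[str], list[float]], threshold: float = 0.9):
        self.embed = embed
        self.threshold = threshold
        self.entries: list[tuple[list[float], str]] = []

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((self.embed(prompt), response))

    def get(self, prompt: str) -> Optional[str]:
        """Return the cached response of the most similar prompt, if close enough."""
        query = self.embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(toy_embed)
cache.put("How do I reset my password?", "Use the reset link.")
print(cache.get("reset password please"))  # similar wording, served from cache
print(cache.get("cancel my order"))        # unrelated, cache miss
```

The threshold is the key design choice: set it too low and users receive answers to questions they did not ask; set it too high and the cache rarely hits.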

10. Do these tools support the latest model features like tool-calling?

Yes, leading gateways stay updated with the latest API features from major providers, ensuring that advanced capabilities like function calling and vision support are passed through to your application.

Conclusion

Implementing an LLM gateway is a transformative step for any organization aiming to transition from AI experimentation to a robust, production-ready architecture. By centralizing the management of multiple model providers, these platforms solve the most pressing challenges of modern AI engineering: unpredictable costs, fragmented security, and the risk of vendor lock-in. The ability to switch models with a simple configuration change, combined with real-time observability and governance, provides the technical agility necessary to keep pace with the rapid evolution of the industry. As the landscape continues to mature, the gateway layer will become the primary control plane for ensuring that AI interactions remain safe, performant, and economically viable at scale.