Introduction

AI inference serving platforms represent the critical “last mile” of the machine learning lifecycle, where trained models are moved into live production environments to process real-time data. Unlike the training phase, which focuses on high-throughput data processing to optimize model weights, inference platforms are engineered for low-latency execution and high availability. These systems manage the complexities of model versioning, request routing, and hardware abstraction, so that a neural network can answer millions of user queries with millisecond-level latency. In modern enterprise architectures, the ability to serve models efficiently is as vital as the accuracy of the models themselves, because it directly affects user experience and operational costs.

The strategic necessity of these platforms has surged with the rise of Large Language Models (LLMs) and generative AI, which require specialized memory management and massive compute resources. Organizations now face the challenge of deploying models across heterogeneous hardware—ranging from local edge devices to global cloud clusters—while maintaining strict security and compliance standards. A robust serving platform eliminates the “black box” nature of deployments by providing observability into model performance, detecting data drift, and enabling seamless updates without downtime. For decision-makers, selecting the right platform involves balancing the need for developer velocity with the long-term requirements of infrastructure stability and cost-predictability in a rapidly scaling AI economy.

Best for: Machine Learning Engineers, MLOps teams, and enterprise architects who need to deploy, scale, and monitor AI models across diverse cloud or on-premises environments with high reliability.

Not ideal for: Data scientists in the early exploratory phase who only require local experimentation, or simple applications where basic Python scripts can handle infrequent, low-volume prediction requests without formal infrastructure.

Key Trends in AI Inference Serving Platforms

The most significant trend is the shift toward specialized LLM serving engines that utilize techniques like paged attention and continuous batching to maximize GPU utilization. Platforms are moving away from general-purpose web serving toward AI-native runtimes that understand the specific memory and compute patterns of transformer architectures. We are also seeing a massive push for “serverless inference,” where developers can deploy models without managing underlying virtual machines, paying only for the exact milliseconds of compute used during a prediction.

Another major trend is the integration of “model-as-a-service” (MaaS) hubs, where pre-optimized foundation models can be deployed with a single click. Security is also becoming a core feature, with encrypted inference and “confidential computing” protecting sensitive data even while it is being processed by the model. Finally, there is an increasing focus on edge-to-cloud continuity, allowing models to be trained in the cloud but served seamlessly on local hardware or mobile devices to reduce latency and data transmission costs.

How We Selected These Tools

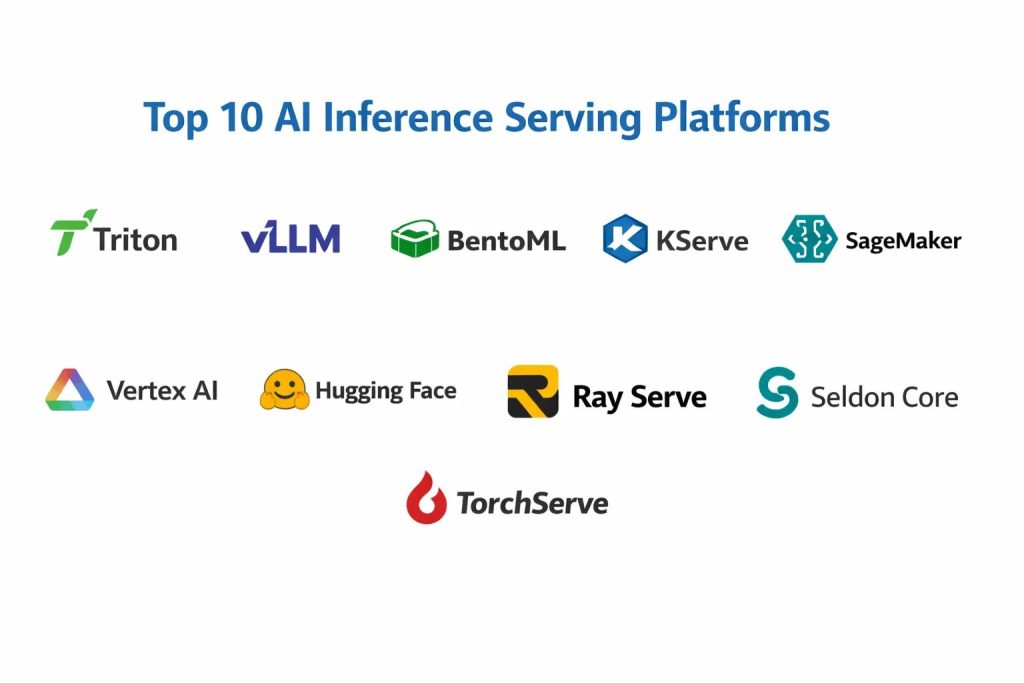

The selection of these top 10 platforms followed a rigorous evaluation of technical performance and industry adoption. We prioritized tools that offer high-performance runtimes capable of handling modern architectures like LLMs and multi-modal models. Each platform was assessed on its ability to provide “production-grade” features, including automated scaling, robust monitoring, and built-in model versioning. We looked for solutions that support a wide range of frameworks—such as PyTorch, TensorFlow, and ONNX—to ensure flexibility for diverse engineering teams.

Operational stability was a non-negotiable criterion, specifically how these platforms handle peak traffic and hardware failures. We also analyzed the ease of integration into existing CI/CD pipelines and the quality of the developer experience, from CLI tools to API documentation. The cost-to-performance ratio was heavily weighted, especially for platforms offering advanced optimization techniques that reduce GPU spend. Finally, we considered the strength of the supporting community or the level of enterprise support provided by the vendor to ensure long-term viability.

1. NVIDIA Triton Inference Server

NVIDIA Triton is a high-performance, open-source inference server designed to simplify the deployment of AI models from any framework on any GPU or CPU-based infrastructure. It excels in enterprise environments where diverse models—ranging from computer vision to large language models—must be served efficiently from a single, unified platform.

Key Features

Triton supports an extensive range of backends, including TensorRT, PyTorch, and TensorFlow, allowing for framework-agnostic serving. It features dynamic batching, which groups multiple client requests together to maximize throughput without sacrificing latency. The platform also supports model ensembling, enabling users to create complex pipelines where the output of one model serves as the input for another. Multi-GPU and multi-node support ensure that even the largest models can be scaled across massive clusters. Additionally, it provides real-time metrics for monitoring GPU utilization and request latency.

Pros

It offers industry-leading performance on NVIDIA hardware through deep hardware-software optimization. Its ability to serve multiple models from different frameworks on a single GPU significantly reduces infrastructure costs.

Cons

The configuration process can be complex, especially when setting up optimized model repositories. It primarily targets technical teams who have a deep understanding of hardware acceleration.

Platforms and Deployment

Windows, Linux, and Kubernetes. It is commonly deployed via Docker containers in cloud or on-premises environments.

Security and Compliance

Supports secure communication via SSL/TLS and integrates with enterprise-grade identity management systems for access control.

Integrations and Ecosystem

Integrates deeply with Kubernetes via KServe and is a core component of most major cloud AI platforms, including AWS and Google Cloud.

Support and Community

Maintained by NVIDIA with extensive documentation and a massive professional user base in the global tech community.

2. vLLM

vLLM is a fast and easy-to-use library specifically built for high-throughput LLM inference and serving. It gained fame for its “PagedAttention” algorithm, which manages KV cache memory with high efficiency, virtually eliminating memory waste and allowing for significantly higher concurrency than traditional serving methods.

Key Features

The standout feature is PagedAttention, which stores each request’s KV cache in fixed-size blocks that are allocated on demand, similar to paged virtual memory in operating systems. It provides an OpenAI-compatible API, making it a drop-in replacement for teams moving away from proprietary services. The platform supports continuous batching, which adds new requests to the running batch as soon as earlier ones finish instead of waiting for a full batch to form. It also includes native support for quantization techniques like AWQ and FP8 to reduce memory footprint.
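To make the block-based memory idea concrete, here is a minimal sketch of a paged KV-cache allocator. It is a toy model written for this article, not vLLM's implementation: the class name `PagedKVCache`, the block size of 16 tokens, and the request ids are all assumptions chosen for illustration.

```python
class PagedKVCache:
    """Toy block allocator: each sequence owns a list of fixed-size blocks."""

    def __init__(self, num_blocks, block_size=16):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # ids of unused physical blocks
        self.block_tables = {}  # sequence id -> list of block ids it owns
        self.lengths = {}       # sequence id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve room for one more token, allocating a block only when needed."""
        length = self.lengths.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length == len(table) * self.block_size:  # no block yet, or last one is full
            if not self.free_blocks:
                raise MemoryError("no free KV blocks; the request has to wait")
            table.append(self.free_blocks.pop())
        self.lengths[seq_id] = length + 1

    def free(self, seq_id):
        """Return every block of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


cache = PagedKVCache(num_blocks=8, block_size=16)
for _ in range(20):  # 20 tokens spill into a second 16-token block
    cache.append_token("req-a")
for _ in range(5):   # 5 tokens fit in a single block
    cache.append_token("req-b")

print(cache.block_tables)
```

Because memory is handed out one block at a time, a short request never reserves space for the maximum sequence length, which is where the reduction in wasted memory comes from.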

Pros

It delivers significantly higher throughput for LLMs compared to general-purpose servers. Its simple Python-based interface makes it highly accessible for rapid deployment and experimentation.

Cons

It is exclusively focused on Large Language Models, meaning it cannot serve other AI model types like image classifiers or recommendation engines. It is still a rapidly evolving tool, which may lead to frequent breaking changes.

Platforms and Deployment

Linux. It is typically deployed as a containerized service within a Kubernetes cluster.

Security and Compliance

Standard API security protocols can be implemented via proxy layers; however, it lacks built-in enterprise-grade compliance features found in managed services.

Integrations and Ecosystem

Widely used within the open-source community and integrates seamlessly with Ray Serve and BentoML for scaling.

Support and Community

A very active open-source community on GitHub with frequent updates and a growing ecosystem of third-party tutorials.

3. BentoML

BentoML is a Python-first framework designed to streamline the process of building, packaging, and deploying machine learning models into production. It focuses on the “Bento” format, which bundles model files, code dependencies, and API configurations into a single, deployable unit.

Key Features

The platform allows developers to define complex inference logic using standard Python, which is then automatically optimized for high-performance serving. It features an “Adaptive Batching” system that balances throughput and latency based on real-time traffic patterns. BentoML also provides a centralized model store for managing versions and metadata. It supports distributed deployments, allowing different parts of an inference pipeline to scale independently. The built-in dashboard provides a visual interface for monitoring service health and performance.

Pros

The developer experience is excellent, offering a very smooth transition from local training to a production-ready API. It is highly flexible, supporting nearly every ML framework and deployment target.

Cons

Managing very large-scale distributed deployments can become complex without the use of their proprietary cloud offering. There is a slight performance overhead compared to lower-level C++ servers like Triton.

Platforms and Deployment

Windows, macOS, and Linux. It can deploy to Kubernetes, AWS Lambda, or any cloud container service.

Security and Compliance

Provides standard API security and integrates with enterprise-level container security scanners.

Integrations and Ecosystem

Strong integrations with the entire MLOps stack, including MLflow, Prometheus, and various data engineering tools.

Support and Community

Strong community presence with professional support available through their enterprise cloud platform.

4. KServe

KServe, originally known as KFServing, is a Kubernetes-native platform for serving machine learning models. It provides a highly standardized way to deploy models on Kubernetes using a “Serverless” architecture that can scale to zero when no traffic is detected.

Key Features

KServe uses a declarative API to define inference services, handling the underlying complexities of networking, scaling, and health checks. It supports advanced deployment strategies like canary rollouts and blue-green deployments out of the box. The platform provides built-in support for model explainability, drift detection, and outlier detection. It can serve models using several runtimes, including Triton, TorchServe, and TensorFlow Serving. Its “Scale-to-Zero” capability is a major cost-saver for intermittent workloads.
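The idea behind a canary rollout can be sketched in a few lines. The example below is a simulation only, not KServe code: the function name `pick_revision`, the 10% split, and the revision labels are assumptions made for illustration.

```python
import random


def pick_revision(canary_percent, rng):
    """Send roughly canary_percent% of requests to the canary revision."""
    return "canary" if rng.random() * 100 < canary_percent else "stable"


rng = random.Random(0)  # seeded so repeated runs behave the same way
counts = {"stable": 0, "canary": 0}
for _ in range(10_000):
    counts[pick_revision(10, rng)] += 1

print(counts)
```

In a real rollout the percentage is raised step by step while error rates and latency of the canary are compared against the stable revision, and it is set back to zero if the canary misbehaves.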

Pros

It provides the most robust and standardized way to manage model serving within a Kubernetes ecosystem. The built-in advanced MLOps features like explainability are rare in other platforms.

Cons

It requires a functioning Kubernetes cluster, which carries a high operational overhead and requires specialized DevOps knowledge. The initial setup can be quite daunting for smaller teams.

Platforms and Deployment

Kubernetes only. It is cloud-agnostic and can run on any major cloud provider’s managed Kubernetes service.

Security and Compliance

Inherits the robust security features of Kubernetes and Istio, including mutual TLS and fine-grained role-based access control.

Integrations and Ecosystem

KServe began as part of the Kubeflow project and is now developed as an independent project; it integrates well with the wider Kubernetes and Cloud Native Computing Foundation (CNCF) ecosystem.

Support and Community

Large open-source community with major contributions from companies like Google, IBM, and NVIDIA.

5. Amazon SageMaker Inference

Amazon SageMaker Inference is a fully managed service that provides a range of options for deploying machine learning models on AWS. It removes the need for managing infrastructure while offering deep integration with the broader Amazon ecosystem.

Key Features

The service offers multiple deployment modes, including real-time endpoints for low-latency needs and asynchronous inference for large payloads. It features a “Multi-Model Endpoint” capability that allows multiple models to share a single hosting instance to save costs. The “Inference Recommender” helps users pick the best instance type for their specific model performance goals. SageMaker also provides built-in model monitoring to track data quality and prediction accuracy in real-time. It supports serverless inference for developers who want to pay only for actual usage.

Pros

The seamless integration with AWS services like S3 and CloudWatch makes it the most convenient choice for organizations already on the AWS platform. It offers industry-leading security and compliance certifications.

Cons

Costs can escalate quickly if not monitored closely, especially with high-performance GPU instances. The platform’s proprietary nature can lead to vendor lock-in.

Platforms and Deployment

Cloud-based via AWS. It also supports edge deployment through SageMaker Edge Manager.

Security and Compliance

Offers enterprise-grade security, including VPC integration, KMS encryption, and compliance with SOC, HIPAA, and GDPR.

Integrations and Ecosystem

Deeply integrated with the entire AWS catalog, providing a unified experience for data storage, processing, and monitoring.

Support and Community

Provides dedicated 24/7 enterprise support and extensive professional training resources.

6. Google Vertex AI Prediction

Google Vertex AI Prediction is a managed service within the Google Cloud Platform that simplifies the deployment and scaling of machine learning models. It is designed to work seamlessly with Google’s data science tools, providing a unified environment for the entire AI lifecycle.

Key Features

Vertex AI supports “AutoML” deployment for users who want the system to handle optimization, as well as custom containers for advanced users. It features an integrated “Model Garden” for accessing and deploying pre-trained foundation models like Gemini. The platform includes robust tools for versioning and experiment tracking, ensuring that every deployment is reproducible. It offers specialized hardware support for Google’s TPUs, which can be highly efficient for certain types of large-scale inference. The built-in monitoring service automatically detects when a model’s performance begins to degrade.

Pros

It provides one of the best user experiences for data scientists, with intuitive interfaces and powerful automation features. Its generative AI capabilities are among the strongest in the market.

Cons

Pricing can be complex to predict due to the various components involved. It is primarily optimized for the Google Cloud ecosystem, making multi-cloud strategies more difficult.

Platforms and Deployment

Cloud-based via Google Cloud Platform.

Security and Compliance

Features world-class security with integrated IAM roles, data encryption at rest and in transit, and various global compliance certifications.

Integrations and Ecosystem

Tightly connected to BigQuery and Google Cloud Storage, making it easy to build data-heavy AI applications.

Support and Community

Extensive documentation and enterprise support options are available, backed by Google’s deep expertise in AI research.

7. Hugging Face Inference Endpoints

Hugging Face Inference Endpoints provide a managed solution for deploying thousands of open-source models directly from the Hugging Face Hub. It is designed for developers who want the performance of a custom-managed server without the operational burden.

Key Features

The platform allows users to deploy any model from the Hugging Face ecosystem with a few clicks. It provides dedicated infrastructure, ensuring that your models are not sharing resources with other users. It supports automatic scaling, allowing the service to grow or shrink based on the volume of incoming requests. The service includes optimized runtimes for popular architectures, significantly reducing latency for NLP and vision models. Users can choose between various GPU and CPU instances across multiple cloud providers.

Pros

It is the fastest way to get a professional, production-grade endpoint for any open-source model. The integration with the Hugging Face ecosystem is unmatched.

Cons

While easy to use, it offers less control over the underlying infrastructure than self-managed Kubernetes solutions. The cost can be higher than running on raw instances for very high-volume users.

Platforms and Deployment

Cloud-based, running on AWS, Azure, or Google Cloud infrastructure managed by Hugging Face.

Security and Compliance

Supports private connections and ensures that data is not used for model training. Compliant with SOC 2 Type II standards.

Integrations and Ecosystem

Directly tied to the Hugging Face Hub, the world’s largest repository of pre-trained models and datasets.

Support and Community

Supported by the most influential community in the modern AI era, with a massive volume of shared knowledge and examples.

8. Ray Serve

Ray Serve is a scalable, framework-agnostic model serving library built on top of the Ray distributed computing framework. It is specifically designed for building complex, multi-model inference pipelines that need to scale across large clusters.

Key Features

The platform uses a Python-native API, allowing developers to write their serving logic in standard Python code. It excels at “Model Composition,” where multiple different models can be combined into a single service with complex routing logic. Ray Serve handles the heavy lifting of scaling and resource allocation, ensuring that each part of the pipeline has the compute it needs. It features a built-in proxy that manages request batching and load balancing across the cluster. The system is highly resilient, with automatic recovery from hardware and software failures.

Pros

It is the most flexible tool for building custom, distributed AI applications that go beyond simple single-model endpoints. Its ability to scale linearly with hardware makes it ideal for massive workloads.

Cons

Managing a Ray cluster introduces a significant amount of operational complexity. It may be “overkill” for teams that only need to serve a single model on a single server.

Platforms and Deployment

Linux and Kubernetes. It can run on any cloud or on-premises hardware that supports Python.

Security and Compliance

Relies on the underlying infrastructure security; however, it can be integrated with standard enterprise authentication layers.

Integrations and Ecosystem

Deeply integrated with the Ray ecosystem, including Ray Train and Ray Tune, providing a complete distributed AI platform.

Support and Community

Maintained by Anyscale with a large and growing community of developers focused on distributed systems.

9. Seldon Core

Seldon Core is an open-source platform that converts your machine learning models into production-ready REST/gRPC microservices on Kubernetes. It is built to handle the entire lifecycle of a model deployment, from initial rollout to long-term monitoring.

Key Features

The platform features a sophisticated “Inference Graph” system that allows for the creation of complex workflows involving pre-processors, models, and post-processors. It supports advanced testing methods such as multi-armed bandits, which can dynamically route traffic to the best-performing model version. Seldon Core provides native integrations for monitoring metrics like drift and outliers via Prometheus and Grafana. It can wrap models from any framework into a standard container format. The platform is designed to be highly extensible, allowing users to write custom components in several languages.
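As an illustration of multi-armed bandit routing, the sketch below uses an epsilon-greedy strategy, one of the simplest bandit algorithms. It is not Seldon Core's implementation: the class `EpsilonGreedyRouter`, the version names, and the simulated success rates are all made up for this example.

```python
import random


class EpsilonGreedyRouter:
    """Route most traffic to the best-scoring version, but keep exploring."""

    def __init__(self, versions, epsilon=0.1, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.pulls = {v: 0 for v in versions}
        self.reward_sum = {v: 0.0 for v in versions}

    def mean_reward(self, version):
        pulls = self.pulls[version]
        return self.reward_sum[version] / pulls if pulls else 0.0

    def choose(self):
        if self.rng.random() < self.epsilon:           # explore: random version
            return self.rng.choice(list(self.pulls))
        return max(self.pulls, key=self.mean_reward)   # exploit: best so far

    def record(self, version, reward):
        self.pulls[version] += 1
        self.reward_sum[version] += reward


# Simulated feedback with made-up success rates: model-b is the better version.
true_success_rate = {"model-a": 0.50, "model-b": 0.90}
router = EpsilonGreedyRouter(list(true_success_rate), epsilon=0.1, seed=42)
feedback_rng = random.Random(7)
for _ in range(5_000):
    version = router.choose()
    reward = 1.0 if feedback_rng.random() < true_success_rate[version] else 0.0
    router.record(version, reward)

print(router.pulls)
```

Unlike a fixed A/B split, the router shifts traffic toward the stronger version while the experiment is still running, so fewer requests are spent on the weaker one.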

Pros

It offers the most advanced set of deployment strategies (like multi-armed bandits) for teams that want to optimize their models in real-time. It is highly stable and used by some of the largest companies in the world.

Cons

Like KServe, it requires a deep understanding of Kubernetes. Some of the more advanced management features are reserved for their commercial “Seldon Deploy” product.

Platforms and Deployment

Kubernetes. It is cloud-agnostic and can run on any infrastructure that supports Kubernetes.

Security and Compliance

Includes comprehensive security features, including role-based access control and secure service-to-service communication.

Integrations and Ecosystem

Integrates with almost every major MLOps tool, including MLflow, Kubeflow, and various cloud-native monitoring stacks.

Support and Community

Active open-source community with professional enterprise support available through Seldon Technologies.

10. TorchServe

TorchServe is a flexible and easy-to-use tool for serving PyTorch models at scale. It was co-developed by AWS and Meta to provide a specialized, high-performance runtime for the PyTorch ecosystem.

Key Features

The platform supports multi-model serving, allowing several PyTorch models to run on a single instance with independent scaling. It features a “Model Archive” format that packages all necessary files into a single .mar file for easy deployment. TorchServe includes built-in handlers for common tasks like image classification and text generation, but also allows for completely custom inference logic. It provides detailed logging and metrics that can be exported to standard monitoring tools. The platform also supports “Snapshot” serialization, allowing users to save and restore the state of the server.

Pros

It is the most natural choice for teams that are 100% committed to the PyTorch ecosystem. It provides excellent performance and is very straightforward to set up for standard use cases.

Cons

It is less versatile than Triton or BentoML if you need to serve models from other frameworks like TensorFlow. It lacks some of the more advanced “Serverless” features of cloud-native platforms.

Platforms and Deployment

Windows, macOS, and Linux. It is commonly deployed in Docker containers on cloud services like AWS.

Security and Compliance

Provides basic authentication and secure API endpoints; enterprise-level security is usually managed at the infrastructure layer.

Integrations and Ecosystem

Deeply integrated with the PyTorch ecosystem and is the default serving method for PyTorch models on Amazon SageMaker.

Support and Community

Backed by the massive PyTorch community and supported by major industry players like AWS and Meta.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| 1. NVIDIA Triton | GPU Optimization | Win, Linux, K8s | Hybrid | Multi-Backend Support | 4.7/5 |
| 2. vLLM | LLM Throughput | Linux | Containerized | PagedAttention Algorithm | 4.9/5 |
| 3. BentoML | Python Developers | Win, Mac, Linux | Multi-Cloud | Adaptive Batching | 4.6/5 |
| 4. KServe | Kubernetes Native | Kubernetes | Cloud/On-Prem | Scale-to-Zero Capability | 4.5/5 |
| 5. Amazon SageMaker | AWS Enterprises | Cloud, Edge | Managed | Inference Recommender | 4.8/5 |
| 6. Google Vertex AI | Gen AI Workloads | Cloud | Managed | Model Garden Access | 4.7/5 |
| 7. Hugging Face | Open-Source Models | Cloud | Managed | Hub Integration | 4.8/5 |
| 8. Ray Serve | Complex Pipelines | Linux, K8s | Distributed | Python-Native Scaling | 4.4/5 |
| 9. Seldon Core | Advanced Testing | Kubernetes | Cloud/On-Prem | Multi-Armed Bandits | 4.6/5 |
| 10. TorchServe | PyTorch Users | Win, Mac, Linux | Hybrid | .mar Archive Format | 4.3/5 |

Evaluation & Scoring of AI Inference Serving Platforms

The scoring below is a comparative model intended to help shortlisting. Each criterion is scored from 1–10, then a weighted total out of 10 is calculated using the weights listed. These are analyst estimates based on typical fit and common workflow requirements, not public ratings.

Weights:

- Performance – 25%

- Scalability – 15%

- Ease of use – 15%

- Cost – 10%

- Security – 10%

- Ecosystem – 15%

- Support – 10%

| Tool Name | Performance (25%) | Scalability (15%) | Ease of Use (15%) | Cost (10%) | Security (10%) | Ecosystem (15%) | Support (10%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1. Triton | 10 | 9 | 5 | 8 | 9 | 10 | 9 | 8.70 |
| 2. vLLM | 10 | 8 | 8 | 9 | 6 | 8 | 7 | 8.30 |
| 3. BentoML | 8 | 9 | 10 | 7 | 8 | 9 | 8 | 8.50 |
| 4. KServe | 8 | 10 | 4 | 9 | 10 | 9 | 8 | 8.15 |
| 5. SageMaker | 9 | 10 | 7 | 5 | 10 | 10 | 10 | 8.80 |
| 6. Vertex AI | 9 | 10 | 8 | 5 | 10 | 10 | 10 | 8.95 |
| 7. Hugging Face | 8 | 9 | 10 | 6 | 9 | 10 | 8 | 8.65 |
| 8. Ray Serve | 9 | 10 | 6 | 7 | 7 | 8 | 8 | 8.05 |
| 9. Seldon Core | 8 | 9 | 5 | 8 | 9 | 9 | 8 | 7.95 |
| 10. TorchServe | 8 | 8 | 7 | 8 | 7 | 8 | 8 | 7.75 |
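To make the arithmetic behind a weighted total concrete, here is a minimal sketch that applies the percentages shown in the scoring table's column headers to one row (Triton's scores). The dictionary keys and the function name `weighted_total` are names chosen for this illustration.

```python
# Weights taken from the percentages in the table's column headers.
WEIGHTS = {
    "performance": 0.25,
    "scalability": 0.15,
    "ease_of_use": 0.15,
    "cost": 0.10,
    "security": 0.10,
    "ecosystem": 0.15,
    "support": 0.10,
}


def weighted_total(scores):
    """Multiply each 1-10 score by its weight and sum the results."""
    assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9, "weights must sum to 100%"
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)


# Scores from the Triton row of the table.
triton = {
    "performance": 10, "scalability": 9, "ease_of_use": 5,
    "cost": 8, "security": 9, "ecosystem": 10, "support": 9,
}
print(weighted_total(triton))
```

Changing the weights to match your own priorities (for example, raising cost for a budget-constrained team) is a quick way to see how sensitive the ranking is to the weighting.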

How to interpret the scores:

- Use the weighted total to shortlist candidates, then validate with a pilot.

- A lower score can mean specialization, not weakness.

- Security and compliance scores reflect controllability and governance fit, because certifications are often not publicly stated.

- Actual outcomes vary with workload size, team skills, and process maturity.

Which AI Inference Serving Platform Is Right for You?

Solo / Freelancer

For individual developers, ease of use and low upfront costs are paramount. Hugging Face Inference Endpoints or BentoML offer the quickest paths to getting a model live without needing to manage complex server infrastructure.

SMB

Small businesses should prioritize managed services like SageMaker or Vertex AI if they already use those clouds, as the reduced operational burden often outweighs the slightly higher instance costs. Alternatively, BentoML is excellent for teams that want more control with minimal complexity.

Mid-Market

Companies in this range often benefit from open-source tools like Triton or Ray Serve, which allow them to optimize their hardware usage as their traffic grows. These tools provide the flexibility to move between different cloud providers as pricing or requirements change.

Enterprise

Large organizations require the governance and security provided by Kubernetes-native platforms like KServe or Seldon Core. These tools ensure that AI deployments follow the same rigorous standards as the rest of the company’s software infrastructure.

Budget vs Premium

The budget choice is almost always an open-source tool like vLLM or Triton running on spot instances. Premium managed services offer massive time savings and built-in “peace of mind” but come with higher long-term operational costs.

Feature Depth vs Ease of Use

Ray Serve and Seldon Core offer the most depth for complex pipelines but require significant expertise. In contrast, TorchServe and Hugging Face provide a more streamlined experience for teams that just want to get a standard model running quickly.

Integrations & Scalability

If your primary goal is scaling across thousands of nodes, Ray Serve and KServe are the technical leaders. For teams that need tight integration with data warehouses and training pipelines, Vertex AI and SageMaker are the clear winners.

Security & Compliance Needs

Enterprises with strict regulatory requirements should stick to the managed services of the major cloud providers (AWS, Google, Azure), as they provide the most comprehensive compliance documentation and security controls.

Frequently Asked Questions (FAQs)

1. What is the main difference between training and inference?

Training is the process of teaching a model using large datasets, which is compute-intensive and slow. Inference is the process of using that trained model to make predictions on new data, which must be extremely fast and efficient.

2. Why can’t I just use a standard web server like Flask or FastAPI for AI?

While you can, standard web servers aren’t designed for the heavy memory and compute needs of AI models. Specialized inference servers provide features like request batching, GPU management, and model versioning that general web servers lack.

3. What is “Dynamic Batching”?

Dynamic batching is a technique where the server waits for a few milliseconds to group multiple incoming requests together. Processing these as a single batch on the GPU is much more efficient than processing them one by one.
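The wait-and-group behavior can be sketched with Python's standard library. This is a simplified, single-threaded illustration rather than how any particular server implements it; the function name `collect_batch`, the batch size of 4, and the 10 ms window are assumptions.

```python
import queue
import time


def collect_batch(requests, max_batch_size, max_wait_s):
    """Block for the first request, then gather more until the batch is
    full or the wait window closes."""
    batch = [requests.get()]  # wait until at least one request exists
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        try:
            batch.append(requests.get(timeout=remaining))
        except queue.Empty:
            break  # window closed with a partial batch
    return batch


pending = queue.Queue()
for i in range(5):
    pending.put(f"request-{i}")

first = collect_batch(pending, max_batch_size=4, max_wait_s=0.01)
second = collect_batch(pending, max_batch_size=4, max_wait_s=0.01)
print(first)
print(second)
```

The two parameters express the trade-off directly: a larger batch size or a longer window improves GPU efficiency, while a shorter window keeps the added latency per request small.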

4. Does inference always require a GPU?

No, many simpler models or highly optimized small models can run efficiently on CPUs. However, for Large Language Models and complex computer vision tasks, GPUs or specialized AI accelerators (like TPUs) are usually necessary for acceptable speed.

5. What does “Scale-to-Zero” mean?

This is a serverless feature where the platform completely shuts down the compute resources when no requests are being made. This saves money by ensuring you only pay for the time the model is actually processing data.
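A rough sketch of the scaling decision looks like this. The function name `desired_replicas` and the concurrency-based rule are assumptions for illustration; real autoscalers add smoothing windows and grace periods before shutting replicas down.

```python
import math


def desired_replicas(in_flight_requests, target_per_replica, max_replicas):
    """Pick a replica count from current load, dropping to zero when idle."""
    if in_flight_requests <= 0:
        return 0  # idle: release all compute
    needed = math.ceil(in_flight_requests / target_per_replica)
    return min(max_replicas, needed)


for load in (0, 1, 25, 500):
    print(load, desired_replicas(load, target_per_replica=10, max_replicas=5))
```

The trade-off is that the first request after an idle period has to wait for a replica to start and load the model, so scale-to-zero suits intermittent workloads better than latency-critical ones.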

6. What is an “Inference Graph”?

An inference graph is a way to chain multiple models and data processing steps together. For example, a graph might include a step to translate text, a model to analyze its sentiment, and a final step to format the results for a database.
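The translate, analyze, and format example above can be sketched as a chain of plain functions. Every step here is a stand-in (a hard-coded phrase table and keyword matching instead of real models), and all names are hypothetical.

```python
def translate(payload):
    # Stand-in for a translation model: a tiny hard-coded phrase table.
    phrase_table = {"muy bueno": "very good", "muy malo": "very bad"}
    text = payload["text"].lower()
    return {**payload, "text_en": phrase_table.get(text, text)}


def score_sentiment(payload):
    # Stand-in for a sentiment model: keyword matching only.
    label = "positive" if "good" in payload["text_en"] else "negative"
    return {**payload, "sentiment": label}


def format_row(payload):
    # Final step: keep only the fields a database row would need.
    return {"original": payload["text"], "sentiment": payload["sentiment"]}


def run_graph(steps, payload):
    """Pass the payload through each step in order."""
    for step in steps:
        payload = step(payload)
    return payload


GRAPH = [translate, score_sentiment, format_row]
result = run_graph(GRAPH, {"text": "Muy bueno"})
print(result)
```

On a serving platform each step would typically run as its own container, so that a slow model can be scaled independently of lightweight pre- and post-processing.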

7. How do I choose between vLLM and Triton for LLMs?

If you only need to serve LLMs and want the highest possible throughput, vLLM is often the better choice. If you need to serve a variety of different model types or require deep enterprise management features, Triton is the more established, general-purpose option.

8. Is “Quantization” necessary for inference?

It is highly recommended for large models. Quantization reduces the precision of the model’s numbers (e.g., from 32-bit to 8-bit), which makes the model much smaller and faster while usually only losing a tiny amount of accuracy.
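As a small worked example, the sketch below applies symmetric 8-bit quantization to a handful of weights. It is a simplified illustration of the principle, not the AWQ or FP8 schemes used by production engines, and the weight values are made up.

```python
def quantize_int8(weights):
    """Map floats onto integers in [-127, 127] using one shared scale."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.003, 0.45]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(max_error)
```

Each weight now needs one byte instead of four, and the rounding error per weight is bounded by half the scale, which is why accuracy usually drops only slightly.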

9. Can I serve models on my own local servers?

Yes, open-source tools like Triton, BentoML, and Seldon Core are designed to be run anywhere, including your own data center, which can be much more cost-effective for high-volume, steady workloads.

10. What is “Model Drift”?

Model drift happens when the real-world data starts to look different from the data the model was trained on, causing the predictions to become less accurate. Many inference platforms include tools to monitor and alert you when this happens.
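One very simple way to flag drift is to check how far the average of a live feature has moved from its training average. The sketch below does this for a single numeric feature; the function name, the sample values, and the alert threshold of 2.0 are assumptions, and production monitors usually rely on more robust statistical tests.

```python
import statistics


def mean_shift_score(training, live):
    """How many training standard deviations the live mean has moved."""
    mu = statistics.fmean(training)
    sigma = statistics.stdev(training)
    return abs(statistics.fmean(live) - mu) / sigma


THRESHOLD = 2.0  # hypothetical alert threshold

training_ages = [31, 35, 29, 40, 33, 37, 30, 36]
live_similar = [32, 34, 36, 31, 38]
live_shifted = [52, 58, 61, 49, 55]

for name, sample in (("similar", live_similar), ("shifted", live_shifted)):
    score = mean_shift_score(training_ages, sample)
    print(name, "drift alert" if score > THRESHOLD else "ok")
```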

Conclusion

Navigating the landscape of AI inference serving platforms is a fundamental challenge for modern engineering teams looking to bridge the gap between research and reality. The transition from a local prototype to a production-grade service demands a platform that can handle the unique stresses of AI workloads—from memory-intensive LLM architectures to the need for millisecond-latency responses. Whether you opt for the turnkey simplicity of a managed cloud service or the granular control of an open-source, Kubernetes-native solution, the priority remains the same: building a system that is as resilient as it is performant. By selecting a platform that aligns with your team’s technical maturity and scaling requirements, you ensure that your AI initiatives remain sustainable, secure, and capable of delivering real-world value at scale. The right infrastructure doesn’t just serve models; it empowers your entire organization to move at the speed of innovation without compromising on stability.