Introduction

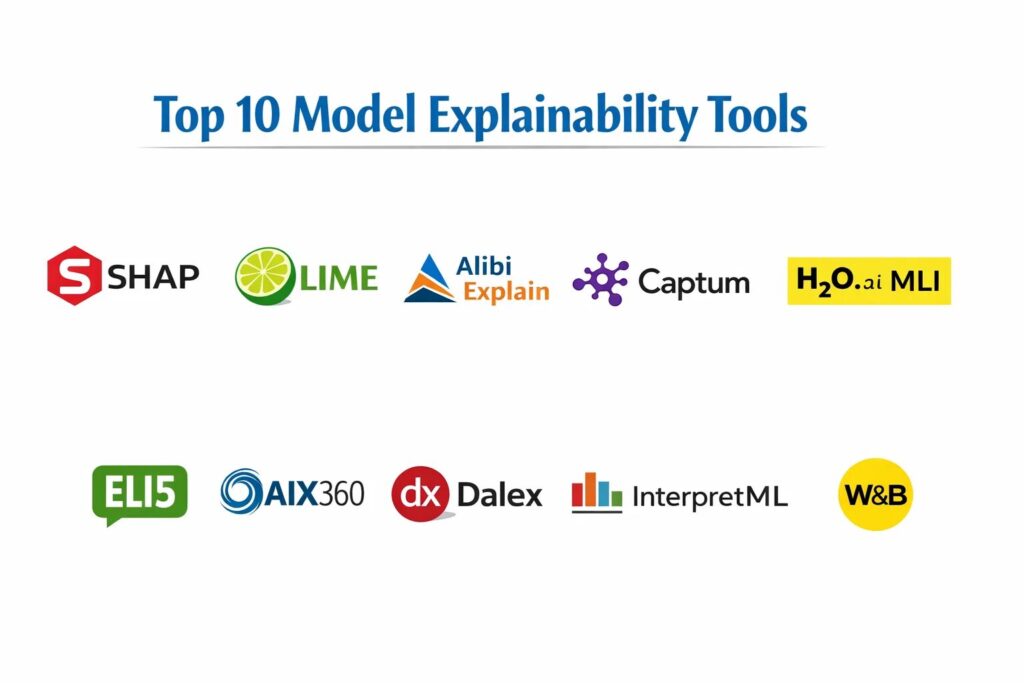

Model explainability tools have emerged as a critical component of the modern artificial intelligence lifecycle, providing the necessary transparency to move from “black-box” systems to accountable, interpretable models. In an era where machine learning influences everything from credit approvals to medical diagnoses, the ability to explain why a model made a specific prediction is no longer a luxury—it is a regulatory and ethical requirement. These tools allow data scientists and stakeholders to probe the internal logic of complex algorithms, identifying feature importance, local decision boundaries, and potential biases that could lead to systemic failures. By translating high-dimensional mathematical transformations into human-understandable insights, explainability frameworks build the trust required for enterprise-wide AI adoption.

The shift toward “Responsible AI” has driven the development of diverse methodologies for model interpretation, ranging from global feature importance to local instance explanations. Organizations are increasingly facing pressure from frameworks like the EU AI Act and GDPR, which emphasize the “right to explanation” for individuals impacted by automated decisions. Consequently, explainability is now integrated directly into the MLOps pipeline, serving as a diagnostic tool for debugging, a validation step for compliance, and a communication bridge for non-technical leadership. When evaluating these tools, organizations must consider the compatibility with different model architectures, the computational overhead of generating explanations, the robustness of the underlying mathematical techniques, and the clarity of the resulting visualizations.

Best for: Machine learning engineers, data scientists, compliance officers, and AI auditors who need to validate model behavior, ensure fairness, and communicate algorithmic logic to stakeholders.

Not ideal for: Simple linear regressions where coefficients are already transparent, or organizations using basic heuristic-based rules engines that do not involve complex automated learning.

Key Trends in Model Explainability Tools

The move toward “Post-hoc” explainability has allowed teams to apply interpretation techniques to almost any model architecture without sacrificing predictive performance. We are seeing a significant rise in “Shapley-value” based approaches, derived from game theory, which provide a mathematically consistent way to distribute credit among input features. Another dominant trend is the rise of “Counterfactual Explanations,” which tell a user exactly what would need to change in their data to receive a different outcome from the model. This provides actionable feedback rather than just static observations of past performance.

Real-time explainability is also becoming a requirement for high-frequency applications like fraud detection, where the explanation must be generated as quickly as the prediction itself. There is an increasing focus on “Global-to-Local” consistency, ensuring that the high-level trends identified across the whole dataset align with the specific decisions made for individual users. Furthermore, explainability tools are now being unified with bias detection and fairness monitoring platforms, creating a holistic “AI Observability” layer. As large language models become ubiquitous, specialized “Attention Mapping” and “Prompt Influence” tools are also emerging to help users understand how generative systems arrive at specific textual outputs.

How We Selected These Tools

Our selection process involved a comprehensive review of both open-source frameworks and enterprise platforms that prioritize algorithmic transparency. We prioritized tools that are “model-agnostic,” meaning they can be applied to a wide range of algorithms from traditional tree-based models to deep neural networks. A major criterion was the mathematical rigor of the explanation methods, favoring established, peer-reviewed techniques such as SHAP or LIME that have been validated in high-stakes environments. We looked for tools that provide a balance of global insights (how the model works overall) and local explanations (why this specific person was rejected).

Scalability was a critical factor; we selected tools that can handle massive datasets and complex, high-dimensional features without crippling the production environment. We scrutinized the quality of the visualization libraries, as the ultimate goal of explainability is human comprehension. Tools that offer interactive dashboards or natural language summaries of model behavior were given higher scores. Security and data privacy were also considered, particularly for tools that require access to the training data to generate baseline comparisons. Finally, we assessed the level of community support and documentation, which is vital for teams implementing these advanced mathematical concepts for the first time.

1. SHAP (SHapley Additive exPlanations)

SHAP is a game-theoretic approach to explaining the output of any machine learning model. It connects optimal credit allocation with local explanations using the classic Shapley values from game theory and their related extensions. It is widely considered the gold standard for feature importance due to its mathematical consistency and theoretical grounding.
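To make the credit-allocation idea concrete, here is a minimal sketch that computes exact Shapley values for a tiny toy model by averaging each feature's marginal contribution over every feature ordering. It does not use the SHAP library. The function names, the toy model, and the choice to represent an "absent" feature by a baseline value are all assumptions made for illustration.

```python
from itertools import permutations


def shapley_values(predict, instance, baseline):
    """Exact Shapley values by brute force over all feature orderings.

    Assumption for this sketch: a feature that is "absent" from a
    coalition simply takes its value from `baseline`.
    """
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)           # start with every feature absent
        previous = predict(current)
        for i in order:
            current[i] = instance[i]       # add feature i to the coalition
            value = predict(current)
            totals[i] += value - previous  # marginal contribution of feature i
            previous = value
    return [t / len(orderings) for t in totals]


def toy_model(x):
    # Hypothetical model: one additive term plus an interaction term.
    return 2.0 * x[0] + x[1] * x[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

In this toy case the interaction term is split equally between the two features involved, and the attributions sum to the difference between the prediction for the instance and the prediction for the baseline. The brute-force loop grows factorially with the number of features, which is why practical tools rely on approximations or model-specific shortcuts.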

Key Features

The library includes a model-agnostic “KernelExplainer” that can explain any black-box function by perturbing inputs. It also provides specialized “TreeExplainer” and “DeepExplainer” variants that exploit the structure of tree ensembles and deep networks to run much faster than the model-agnostic kernel on those architectures. It offers a variety of visualizations, including summary plots for global importance and force plots for individual instances. It can calculate interaction values, showing how the relationship between two features impacts the outcome. It also supports “Dependence Plots,” which reveal how the effect of a feature changes based on its value.

Pros

It provides the most mathematically sound and consistent feature importance scores in the industry. The visualizations are highly sophisticated and standard across the data science community.

Cons

Calculating exact Shapley values can be computationally expensive for models with many features. The kernel-based approach for non-tree models can be slow on large datasets.

Platforms and Deployment

Python-based library that integrates into local development environments and cloud notebooks.

Security and Compliance

As a local library, security depends on the host environment; it does not transmit data to external servers.

Integrations and Ecosystem

Deeply integrated with Scikit-learn, XGBoost, LightGBM, CatBoost, and TensorFlow.

Support and Community

Maintains a massive open-source community with extensive documentation and academic citations.

2. LIME (Local Interpretable Model-agnostic Explanations)

LIME is a pioneering framework designed to explain the predictions of any machine learning classifier by learning an interpretable model locally around the prediction. It is particularly valued for its ability to explain non-tabular data such as images and text.

Key Features

The platform features an “Image Explainer” that identifies the specific pixels or “super-pixels” that contributed most to a classification. It includes a “Text Explainer” that highlights the specific words or phrases that influenced a model’s sentiment analysis. The system works by perturbing a single data point and observing how the predictions change. It builds a simple, surrogate linear model that approximates the complex model’s behavior in a small local neighborhood. This makes it highly effective for identifying why a specific outlier prediction occurred.
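The perturb-and-fit procedure can be sketched in plain Python. The example below is not the LIME library's API. It is a simplified illustration that samples Gaussian perturbations around one point, weights them by proximity with an exponential kernel, and fits an unregularized weighted linear model. The function names, kernel width, sample count, and toy black-box function are assumptions.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a small dense system a @ x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def local_surrogate(predict, instance, n_samples=500, scale=0.1,
                    kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear model around `instance`.

    Returns [intercept, coef_0, coef_1, ...], where the coefficients
    describe the model's local sensitivity to each feature.
    """
    rng = random.Random(seed)
    d = len(instance)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        offset = [rng.gauss(0.0, scale) for _ in range(d)]
        sample = [xi + oi for xi, oi in zip(instance, offset)]
        dist2 = sum(o * o for o in offset)
        weight = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        row = [1.0] + offset                           # regress on offsets from the instance
        y = predict(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    return solve_linear_system(xtwx, xtwy)


def black_box(x):
    # Hypothetical non-linear model; near x[0] = 1 its slope in x[0] is about 2.
    return x[0] ** 2 + 3.0 * x[1]


coefs = local_surrogate(black_box, [1.0, 2.0])
print(coefs)
```

The fitted coefficients approximate the local slopes of the black-box function, even though the function itself is non-linear. Changing the seed or kernel width shifts the coefficients slightly, which is a small-scale view of the instability noted in the Cons below.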

Pros

It is extremely versatile and can be applied to almost any type of data or model. The explanations are often more intuitive for non-technical users than abstract game-theory scores.

Cons

The explanations can sometimes be unstable, meaning small changes in the input can lead to different explanations. The “local” approximation may not always represent the global model behavior accurately.

Platforms and Deployment

Python library compatible with all major data science environments.

Security and Compliance

Operates entirely within the user’s local or virtual private cloud environment.

Integrations and Ecosystem

Works with any model that has a “predict” function, including custom-built neural networks.

Support and Community

Well-established open-source project with a high volume of community-contributed tutorials and examples.

3. Alibi Explain

Alibi Explain is an enterprise-grade Python library focused on machine learning model inspection and interpretation. It provides a suite of high-performance algorithms for both global and local explainability, including unique techniques like “Anchors” and “Prototypes.”

Key Features

The tool features “Anchor” explanations, which find the minimum set of conditions that guarantee a specific prediction with high confidence. It includes “Counterfactual” methods that suggest the smallest change needed in the input to flip the model’s output. The system offers “Integrated Gradients” for deep learning models to identify important input dimensions. It also features “ALE (Accumulated Local Effects)” plots, which are a faster and more reliable alternative to traditional partial dependence plots. The library is specifically designed for production-scale model monitoring.

Pros

It offers a more diverse range of explainability algorithms than SHAP or LIME alone. The “Anchors” method provides very clear, rule-based explanations that are easy for business users to follow.

Cons

The documentation can be highly technical and may require a strong background in machine learning theory. It is a heavier library compared to single-method tools.

Platforms and Deployment

Python-based; often used in conjunction with Seldon Core for production MLOps.

Security and Compliance

Adheres to standard open-source security practices and is suitable for highly regulated environments.

Integrations and Ecosystem

Part of the broader Alibi ecosystem, integrating with Alibi Detect for outlier and drift monitoring.

Support and Community

Developed and maintained by Seldon, providing a professional level of engineering and regular updates.

4. Captum

Captum is a specialized model interpretability library for PyTorch, developed by the AI research team at Meta. It provides state-of-the-art gradient-based and perturbation-based methods to understand how data features contribute to a neural network’s predictions.

Key Features

The platform features “Integrated Gradients,” a widely used method for attributing neural network outputs to input features. It includes “DeepLIFT,” a technique that compares the activations of a network to a reference “baseline” to identify importance. The system offers perturbation-based methods such as “Occlusion” and “Feature Ablation,” which measure how the output changes when parts of the input are masked or replaced. It also implements Layer-wise Relevance Propagation (LRP). Additionally, Captum Insights provides an interactive visualization widget for Jupyter notebooks to explore attributions visually.
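As a rough illustration of what Integrated Gradients computes, the sketch below approximates the path integral of gradients from a baseline to the input. It uses plain Python and finite differences rather than PyTorch autograd or Captum's API. The toy function standing in for a network, the step count, and all names are assumptions.

```python
import math


def numerical_gradient(f, x, eps=1e-5):
    """Central-difference gradient of f at x."""
    grad = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def integrated_gradients(f, x, baseline, steps=200):
    """Midpoint-rule approximation of
    IG_i = (x_i - b_i) * integral over alpha in [0, 1] of df/dx_i(b + alpha * (x - b)).
    """
    n = len(x)
    avg_grad = [0.0] * n
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        g = numerical_gradient(f, point)
        for i in range(n):
            avg_grad[i] += g[i] / steps
    return [(xi - b) * g for xi, b, g in zip(x, baseline, avg_grad)]


def toy_network(x):
    # Smooth stand-in for a neural network (hypothetical).
    return math.tanh(x[0] * x[1]) + 0.5 * x[2] ** 2


x = [1.0, 0.5, 2.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(toy_network, x, baseline)
print(attributions)
```

A useful sanity check is the completeness property: the attributions should sum, up to numerical error, to the difference between the output at the input and the output at the baseline. The choice of baseline is a modeling decision and changes the attributions.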

Pros

It is the most powerful and optimized tool for PyTorch users. The library is built for performance and can handle very deep and complex neural architectures.

Cons

It is strictly limited to the PyTorch ecosystem, making it unusable for Scikit-learn or TensorFlow models. The learning curve for gradient-based attribution is quite high.

Platforms and Deployment

Local Python library specifically for PyTorch environments.

Security and Compliance

Local execution ensures that sensitive model weights and data never leave the secure environment.

Integrations and Ecosystem

Seamlessly integrates with the PyTorch ecosystem and TorchServe for model deployment.

Support and Community

Backed by Meta’s AI team with a growing community of researchers and developers.

5. H2O.ai Driverless AI (MLI)

H2O.ai offers an automated Machine Learning (AutoML) platform that includes a dedicated Machine Learning Interpretability (MLI) module. It is designed for enterprise users who need a unified, “click-button” solution for generating regulatory-grade model explanations.

Key Features

The system features “K-LIME,” a specialized version of LIME that uses clustering to provide more stable local explanations. It includes automated “Partial Dependence Plots” (PDP) and “Individual Conditional Expectation” (ICE) plots. The platform generates “Decision Tree Surrogates” to approximate the logic of complex models in a transparent format. It offers “Disparate Impact” analysis to check for bias across protected groups. It also features “Reason Codes” that can be automatically generated for every prediction for use in customer-facing applications.
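The relationship between ICE and PDP plots can be shown with a small stand-alone sketch. It is not H2O's implementation. The helper names and the toy model are assumptions chosen so that the two views disagree.

```python
def ice_curve(predict, row, feature, grid):
    """Predictions for one row as `feature` sweeps over `grid`,
    with every other feature held at its observed value."""
    curve = []
    for value in grid:
        modified = list(row)
        modified[feature] = value
        curve.append(predict(modified))
    return curve


def partial_dependence(predict, rows, feature, grid):
    """A PDP is the pointwise average of the ICE curves of all rows."""
    curves = [ice_curve(predict, row, feature, grid) for row in rows]
    return [sum(c[k] for c in curves) / len(curves) for k in range(len(grid))]


def toy_model(x):
    # Hypothetical model: the effect of feature 0 flips sign with feature 1.
    return x[0] * x[1]


rows = [[0.0, 1.0], [0.0, -1.0]]
grid = [0.0, 1.0, 2.0]
ice = [ice_curve(toy_model, r, 0, grid) for r in rows]
pdp = partial_dependence(toy_model, rows, 0, grid)
print(ice)
print(pdp)
```

Here the averaged PDP is flat even though each individual ICE curve has a clear slope, which is the usual argument for looking at both plots when features interact.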

Pros

It provides an extremely high level of automation, making it accessible to business analysts and non-coding stakeholders. The “Reason Codes” are specifically designed for regulatory compliance in finance.

Cons

It is a proprietary, paid platform, which may not fit the budget of smaller teams or individual researchers. Users are locked into the H2O.ai ecosystem for the full feature set.

Platforms and Deployment

Cloud-based SaaS or on-premises deployment via Docker or RPM.

Security and Compliance

Includes robust enterprise security features and is widely used in the highly regulated banking and insurance sectors.

Integrations and Ecosystem

Integrates with various data sources and cloud providers including AWS, Azure, and Google Cloud.

Support and Community

Offers professional enterprise support, dedicated training, and a large global user community.

6. ELI5 (Explain Like I’m 5)

ELI5 is a Python library focused on debugging machine learning classifiers and explaining their predictions in a simple, readable way. It provides a unified interface for many existing ML frameworks, making it a versatile tool for day-to-day model inspection.

Key Features

The tool features a “Show Weights” function that displays global feature importance for a wide variety of models. It includes a “Show Prediction” function that highlights which features contributed most to a specific instance. The library supports the “Permutation Importance” method, which is a robust way to measure feature importance by shuffling data. It features specialized support for explaining text classifiers by highlighting tokens. It also provides a way to inspect the internal states of tree-based models and linear models.
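The shuffling idea behind permutation importance can be sketched without any library. The code below is an illustration under stated assumptions, not ELI5's API: the function names, the mean-squared-error metric, the toy model, and the synthetic data are all made up for the example.

```python
import random


def mean_squared_error(predict, rows, targets):
    return sum((predict(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(predict, rows, targets, n_repeats=10, seed=0):
    """Average increase in error when a single feature column is shuffled."""
    rng = random.Random(seed)
    base = mean_squared_error(predict, rows, targets)
    importances = []
    for j in range(len(rows[0])):
        increase = 0.0
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)  # break the link between feature j and the target
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            increase += mean_squared_error(predict, shuffled, targets) - base
        importances.append(increase / n_repeats)
    return importances


def toy_model(x):
    # Hypothetical model that uses feature 0 and ignores feature 1.
    return 3.0 * x[0]


data_rng = random.Random(1)
rows = [[data_rng.uniform(-1, 1), data_rng.uniform(-1, 1)] for _ in range(200)]
targets = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, targets)
print(importances)
```

Shuffling the ignored feature leaves the error unchanged, so its importance is zero, while shuffling the feature the model depends on raises the error noticeably. In practice the importance should be measured on held-out data rather than on the training set.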

Pros

The interface is remarkably simple and follows the “Explain Like I’m 5” philosophy. It supports a very broad range of models, including those from Scikit-learn, XGBoost, and LightGBM.

Cons

The visualization options are limited compared to SHAP. It lacks the advanced gradient-based methods required for deep learning interpretability.

Platforms and Deployment

Local Python library.

Security and Compliance

Executes locally with no external data transmission.

Integrations and Ecosystem

Excellent compatibility with Scikit-learn, Keras, and various gradient-boosting frameworks.

Support and Community

A popular open-source project with a wealth of community tutorials and a focus on ease of use.

7. IBM AI Explainability 360

IBM AI Explainability 360 (AIX360) is an open-source toolkit that brings together a diverse collection of explainability algorithms under one roof. It is part of IBM’s broader effort to provide a comprehensive suite for “Trustworthy AI.”

Key Features

The platform features “Boolean Decision Rules” which provide “if-then” explanations that are easy for humans to understand. It includes “Contrastive Explanations” that explain a prediction by highlighting what features were absent. The system offers “Prototypes and Criticisms” to show which data points are most representative of a class. It features “Generalized Additive Models” (GAMs) for inherently interpretable modeling. It also provides a “Taxonomy of Explainability” to help users choose the right algorithm for their specific use case.

Pros

It provides a very holistic approach to explainability, covering more use cases than almost any other open-source tool. The educational resources and tutorials provided by IBM are world-class.

Cons

The library is quite large and can be complex to navigate for beginners. Some of the more advanced algorithms require significant computational resources.

Platforms and Deployment

Python-based library available on GitHub.

Security and Compliance

Designed with enterprise compliance in mind, fitting into the “AI Governance” frameworks of large corporations.

Integrations and Ecosystem

Integrates with IBM Watson OpenScale and other IBM Cloud Pak for Data services.

Support and Community

Maintained by IBM Research with active community participation and extensive academic documentation.

8. Dalex

Dalex (Descriptive mAchine Learning Explanations) is a framework designed to provide a “wrapper” around any machine learning model to facilitate exploration and explanation. It is widely used by both R and Python communities for comparative model analysis.

Key Features

The platform features “Model Studio,” an automated tool that generates an interactive dashboard for model exploration. It includes “Variable Importance” and “Variable Response” plots that are consistent across different model types. The system offers “Break-Down” plots to show how individual features contribute to a specific prediction. It features “Ceteris Paribus” plots to visualize how a prediction would change if one variable were modified while others stayed constant. It also provides tools for “Model Fairness” auditing.

Pros

The interactive dashboards are among the best in the industry for exploring model behavior visually. It allows for the easy comparison of multiple different models side-by-side.

Cons

The Python version is a port from the original R library, and some features may lag slightly behind. It can be memory-intensive when generating large interactive reports.

Platforms and Deployment

Available for both Python and R.

Security and Compliance

Standard local execution with data privacy maintained through on-premise or private cloud hosting.

Integrations and Ecosystem

Compatible with any model that provides a standard prediction interface.

Support and Community

Has a dedicated community, particularly strong in Europe and within the R programming world.

9. InterpretML

InterpretML is an open-source package from Microsoft that incorporates state-of-the-art machine learning interpretability techniques. It features both “Glassbox” models (inherently interpretable) and “Blackbox” explainers.

Key Features

The platform features the “Explainable Boosting Machine” (EBM), a glassbox model that is often comparable in accuracy to gradient-boosted trees on tabular data while remaining fully interpretable. It includes a unified API for SHAP, LIME, and Linear explanations. The system offers “Global Explanations” to see the top features for the entire model. It features an interactive visualization dashboard that works directly in Jupyter notebooks. It also provides “Sensitivity Analysis” to see how model predictions respond to changes in the inputs.

Pros

The “Explainable Boosting Machine” is a standout feature, providing a rare combination of high accuracy and total transparency. The unified API makes it very easy to switch between different explainer types.

Cons

As a relatively new project from Microsoft, its community is smaller than those of SHAP or LIME. The focus is primarily on tabular data rather than images or audio.

Platforms and Deployment

Python-based library.

Security and Compliance

Maintains high standards for data integrity and is suitable for enterprise-level compliance workflows.

Integrations and Ecosystem

Developed by Microsoft and integrates well with Azure Machine Learning services.

Support and Community

Maintained by Microsoft Research with clear documentation and a professional development roadmap.

10. Weights & Biases (W&B)

Weights & Biases is a leading MLOps platform that includes powerful tools for model explainability as part of its experiment tracking and visualization suite. It is favored by deep learning teams for its collaborative features and real-time monitoring.

Key Features

The platform features “W&B Tables,” which allow for the interactive exploration of model predictions and attributions. It includes integrated support for SHAP and Captum, allowing these explanations to be logged and visualized in the central dashboard. The system offers “Model Comparison” views to see how different architectures explain the same data point. It features automated “Gradient Logging” to track how neural network weights are changing. It also provides collaborative “Reports” where teams can document and share their explainability findings.

Pros

It is the best tool for teams working together, as explanations can be shared and discussed in a central web interface. The integration of explainability with experiment tracking is a major workflow advantage.

Cons

It is a proprietary platform with a “freemium” model, which may be a barrier for some open-source projects. It requires data to be logged to the W&B servers (unless using the enterprise local version).

Platforms and Deployment

Cloud-based SaaS with an option for local/private cloud deployment for enterprise users.

Security and Compliance

SOC 2 Type II compliant with robust data encryption and user access controls.

Integrations and Ecosystem

Integrates with nearly every major ML framework including PyTorch, TensorFlow, Keras, and Scikit-learn.

Support and Community

Offers a massive community, a professional support team, and extensive educational content on deep learning best practices.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| 1. SHAP | Mathematical Rigor | Python | Local / Cloud | Shapley Values | 4.9/5 |
| 2. LIME | Non-Tabular Data | Python | Local / Cloud | Local Surrogates | 4.7/5 |
| 3. Alibi Explain | Anchors & Counterfactuals | Python | Local / Cloud | Anchor Explanations | 4.6/5 |
| 4. Captum | Deep Learning / PyTorch | Python (PyTorch) | Local / Cloud | Integrated Gradients | 4.8/5 |
| 5. H2O.ai MLI | Regulatory Compliance | Web-Based | SaaS / On-Prem | Automated Reason Codes | 4.7/5 |
| 6. ELI5 | Simple Debugging | Python | Local / Cloud | “Show Weights” UI | 4.5/5 |
| 7. AIX360 | Holistic Toolkit | Python | Local / Cloud | Contrastive Explanations | 4.6/5 |
| 8. Dalex | Comparative Analysis | Python / R | Local / Cloud | Model Studio Dashboard | 4.5/5 |
| 9. InterpretML | Inherently Interpretable | Python | Local / Cloud | Explainable Boosting | 4.7/5 |
| 10. W&B | Collaborative MLOps | Web-Based | SaaS / Private | Integrated Explanation Log | 4.8/5 |

Evaluation & Scoring of Model Explainability Tools

The scoring below is a comparative model intended to help shortlisting. Each criterion is scored from 1–10, then a weighted total from 0–10 is calculated using the weights listed. These are analyst estimates based on typical fit and common workflow requirements, not public ratings.

Weights:

- Core features – 25%

- Ease of use – 15%

- Integrations & ecosystem – 15%

- Security & compliance – 10%

- Performance & reliability – 10%

- Support & community – 10%

- Price / value – 15%

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1. SHAP | 10 | 5 | 10 | 9 | 7 | 9 | 10 | 8.75 |
| 2. LIME | 8 | 8 | 9 | 9 | 9 | 8 | 9 | 8.50 |
| 3. Alibi Explain | 9 | 6 | 9 | 9 | 8 | 8 | 8 | 8.20 |
| 4. Captum | 9 | 5 | 7 | 9 | 10 | 8 | 9 | 8.10 |
| 5. H2O.ai MLI | 9 | 10 | 8 | 10 | 9 | 9 | 6 | 8.65 |
| 6. ELI5 | 7 | 10 | 9 | 9 | 10 | 7 | 9 | 8.55 |
| 7. AIX360 | 9 | 6 | 8 | 9 | 7 | 9 | 9 | 8.20 |
| 8. Dalex | 8 | 8 | 8 | 9 | 7 | 8 | 9 | 8.15 |
| 9. InterpretML | 9 | 8 | 8 | 9 | 8 | 8 | 9 | 8.50 |
| 10. W&B | 8 | 9 | 10 | 9 | 9 | 10 | 8 | 8.85 |
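For readers who want to re-weight the criteria for their own priorities, the weighted total can be reproduced with a few lines of Python. This is a minimal sketch: the dictionary keys and function name are illustrative assumptions, and the two example rows are copied from the table above.

```python
# Criterion weights from the list above, expressed as fractions of 1.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores):
    """Weighted average of 1-10 criterion scores, rounded to two decimals."""
    if abs(sum(WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)


h2o_mli = {"core": 9, "ease": 10, "integrations": 8, "security": 10,
           "performance": 9, "support": 9, "value": 6}
wandb = {"core": 8, "ease": 9, "integrations": 10, "security": 9,
         "performance": 9, "support": 10, "value": 8}

h2o_total = weighted_total(h2o_mli)
wandb_total = weighted_total(wandb)
print(h2o_total, wandb_total)
```

With the stated weights, the H2O.ai MLI row works out to 8.65 and the W&B row to 8.85. Changing the entries in `WEIGHTS` (while keeping their sum at 1) shows how sensitive the ranking is to your own priorities.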

How to interpret the scores:

- Use the weighted total to shortlist candidates, then validate with a pilot.

- A lower score can mean specialization, not weakness.

- Security and compliance scores reflect controllability and governance fit, because certifications are often not publicly stated.

- Actual outcomes vary with model and dataset size, team skills, and process maturity.

Which Model Explainability Tool Is Right for You?

Solo / Freelancer

For independent researchers or solo founders, the priority is often speed and cost-effectiveness. Open-source libraries like ELI5 or LIME provide immediate insights without any financial overhead. You should look for tools that have the largest community following to ensure that you can find solutions to technical hurdles quickly.

SMB

Small and mid-sized businesses with limited technical resources should prioritize tools that offer the most intuitive visualizations. A platform that provides “ready-made” charts and simple reason codes can help your team communicate model logic to customers and stakeholders without needing a specialized data science team.

Mid-Market

Mid-sized companies should focus on tools that balance ease of use with algorithmic rigor. As your AI initiatives grow, the ability to generate counterfactual explanations becomes a competitive advantage for customer service. Tools like InterpretML or Alibi Explain are excellent choices for this stage of growth.

Enterprise

Large, highly regulated organizations require “regulatory-grade” explainability. This means selecting a solution that offers automated compliance reporting, robust security, and dedicated support. A commercial platform like H2O.ai Driverless AI, or the open-source IBM AIX360 toolkit paired with IBM's governance services, is often the best fit for ensuring that your AI initiatives meet global legal standards.

Budget vs Premium

If your budget is zero, the open-source ecosystem (SHAP, LIME, Captum) is world-class and often more advanced than paid tools. However, premium platforms justify their cost by significantly reducing the engineering time required to build dashboards and by providing integrated bias and fairness monitoring.

Feature Depth vs Ease of Use

If your team consists of deep learning experts, tools with high feature depth like Captum are essential. However, if your goal is to enable business users to inspect model behavior, a tool that prioritizes ease of use and automated “English-language” summaries is a better investment.

Integrations & Scalability

Your explainability tool must live inside your production pipeline. If you are a PyTorch shop, Captum is the logical choice. If you are using a broad mix of tools, a model-agnostic wrapper like Dalex or SHAP ensures that your explainability strategy remains consistent as your tech stack evolves.

Security & Compliance Needs

If you handle sensitive financial or health data, local execution is a non-negotiable requirement. Ensure the tool you choose does not require sending data to a third-party server for processing. For enterprise compliance, look for tools that have been vetted by industry leaders for their mathematical robustness.

Frequently Asked Questions (FAQs)

1. What is the difference between global and local explainability?

Global explainability looks at the model as a whole to determine which features are most important across the entire dataset. Local explainability focuses on a single specific prediction, explaining exactly why the model made that choice for that particular data point.

2. Why can’t we just use simple models like decision trees?

Simple models are “inherently interpretable,” but they often lack the predictive power required for complex tasks like image recognition or natural language processing. Explainability tools allow us to use powerful, complex models while still being able to explain individual decisions, which is what a “right to explanation” demands.

3. What are Shapley values in simple terms?

In machine learning, Shapley values treat each feature as a “player” in a game and the prediction as the “payout.” The tool then calculates exactly how much each player contributed to that final payout, ensuring a fair distribution of credit.

4. How does LIME work without knowing the model’s internals?

LIME treats the model as a “black box.” It creates new, slightly different versions of the input data and sees how the model’s predictions change. By doing this many times, it can build a simple map of how the model behaves in that local area.

5. What is a counterfactual explanation?

A counterfactual explanation tells a user: “If your income had been $5,000 higher, your loan would have been approved.” It provides the minimum change needed in the input data to flip the model’s decision to a different outcome.
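The loan example can be turned into a tiny search. The sketch below is an illustration only: the scoring rule is a hypothetical stand-in for a trained model, and the brute-force search over a single feature is far simpler than the optimization-based methods that real counterfactual libraries use.

```python
def approve_loan(income, debt):
    """Hypothetical scoring rule standing in for a trained model."""
    return income - 2 * debt >= 40_000


def income_counterfactual(income, debt, step=500, max_increase=50_000):
    """Smallest income increase (in multiples of `step`) that flips a
    rejection into an approval, or None if none is found in the budget."""
    if approve_loan(income, debt):
        return 0  # already approved, nothing needs to change
    for increase in range(step, max_increase + step, step):
        if approve_loan(income + increase, debt):
            return increase
    return None


needed = income_counterfactual(income=45_000, debt=5_000)
print(needed)
```

For this applicant the search returns 5000, which corresponds to the statement "if your income had been $5,000 higher, your loan would have been approved." Real counterfactual methods also constrain which features may change and by how much, so that the suggested change is actually achievable.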

6. Do explainability tools work for images and text?

Yes, specialized tools like Captum and LIME can identify which pixels in an image or which words in a sentence were most important for the model’s decision. This is often visualized as a “heatmap” over the original data.

7. Is there a performance penalty for using explainability?

Generating explanations requires additional computation, which can be significant for complex models. However, this is usually done “post-hoc” after the prediction is made, so it does not necessarily slow down the model’s actual inference speed.

8. What are “anchors” in model explainability?

Anchors are a set of conditions that “anchor” a prediction. For example: “If the person’s age is over 30 and they have a job, the model will almost always predict ‘Approved’ regardless of other features.” They are a way to find conditions that are sufficient for a result with high probability.
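A candidate anchor is usually judged by two numbers: its precision (how often the prediction holds when the rule applies) and its coverage (how often the rule applies at all). The sketch below only evaluates one hand-written rule on a handful of made-up rows. It does not implement the search that anchor algorithms perform to discover rules, and the model, data, and names are all hypothetical.

```python
def anchor_quality(rule, predict, rows, target):
    """Return (precision, coverage) of `rule` for the prediction `target`."""
    covered = [r for r in rows if rule(r)]
    if not covered:
        return 0.0, 0.0
    precision = sum(predict(r) == target for r in covered) / len(covered)
    coverage = len(covered) / len(rows)
    return precision, coverage


def toy_model(row):
    # Hypothetical decision logic standing in for a trained classifier.
    if row["age"] > 30 and row["employed"]:
        return "Approved"
    return "Approved" if row["income"] > 80_000 else "Rejected"


def candidate_rule(row):
    # The anchor from the example: age over 30 and has a job.
    return row["age"] > 30 and row["employed"]


rows = [
    {"age": 45, "employed": True, "income": 30_000},
    {"age": 35, "employed": True, "income": 90_000},
    {"age": 25, "employed": True, "income": 50_000},
    {"age": 52, "employed": False, "income": 20_000},
]

precision, coverage = anchor_quality(candidate_rule, toy_model, rows, "Approved")
print(precision, coverage)
```

On these rows the rule applies to half of the data and the model predicts "Approved" for every row it covers. A good anchor keeps precision high while covering as many cases as possible.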

9. Can explainability tools detect bias?

Yes, by identifying which features a model is relying on, these tools can reveal if a model is using “proxy” variables for protected groups (like using zip code as a proxy for race). Many tools now have dedicated fairness modules.

10. Which tool is best for PyTorch neural networks?

Captum is the industry-standard tool for PyTorch. It was built specifically for that ecosystem and includes highly optimized gradient-based methods that take full advantage of PyTorch’s internal architecture.

Conclusion

Model explainability is the essential bridge between algorithmic complexity and human accountability. In the current landscape where AI is deeply integrated into the fabric of society, the ability to audit, interpret, and explain automated decisions is fundamental to maintaining public trust and operational safety. Whether you leverage the mathematical consistency of SHAP, the localized intuition of LIME, or the enterprise-grade automation of H2O.ai, the goal remains the same: to ensure that AI serves as a transparent and fair partner in human decision-making. By implementing these tools today, organizations can future-proof their AI strategies against evolving global regulations and build systems that are as understandable as they are powerful.
