Introduction

Text-to-Speech (TTS) technology has evolved from robotic, monotone voice synthesis into a sophisticated field of neural speech synthesis that captures much of the emotion and cadence of human speech. Modern platforms use deep learning models, such as Transformer-based acoustic models paired with neural vocoders (some of them GAN-based, such as HiFi-GAN), to predict acoustic features from text and render them as audio. For organizations, this technology is no longer just an accessibility feature but a core strategic asset for global content distribution. By converting static text into high-fidelity audio, enterprises can quickly localize training materials, automate customer service through conversational AI, and build distinctive brand identities with unique synthetic voices.

The current landscape of TTS is defined by the shift toward “zero-shot” voice cloning and real-time streaming, where the delay between text input and the first audio output has dropped to a few hundred milliseconds or less on leading APIs. This makes fluid, two-way conversations between humans and AI agents possible. When selecting a platform, technical leads must evaluate the depth of the Application Programming Interface (API), the availability of Speech Synthesis Markup Language (SSML) for fine-grained control, and the robustness of the cloud infrastructure. Furthermore, as synthetic media becomes more prevalent, the ethical sourcing of voice data, security certifications such as SOC 2, and compliance with regulations such as GDPR have become non-negotiable criteria for professional integration.

Best for: Developers building real-time voice agents, marketing teams creating localized video content, e-learning professionals, and enterprises requiring scalable accessibility solutions.

Not ideal for: High-stakes live performances requiring unpredictable human improvisation or creative projects where the unique, non-replicable “soul” of a specific human performance is the primary artistic goal.

Key Trends in Text-to-Speech Platforms

Real-time emotional expression is arguably the most significant breakthrough: models can now add whispers, shouts, and situational laughter to speech based on the context of the sentence. There is also a strong move toward multilingual consistency, where a single cloned voice can speak dozens of languages while keeping the same vocal characteristics. Automated prosody (the rhythm and intonation of language) now lets models decide when to pause for dramatic effect or speed up for excitement without manual tagging.

On the infrastructure side, edge-based TTS lets voice synthesis run locally on devices, improving privacy and enabling offline functionality for automotive and IoT applications. Voice-cloning safeguards are also becoming standard, with many platforms requiring consent or “proof-of-voice” checks to prevent unauthorized deepfakes. Finally, pairing TTS with Large Language Models (LLMs) has created a “voice-first” AI ecosystem where reasoning and speaking happen in a tightly coupled, low-latency loop.
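Here is a minimal sketch of that coupling. It assumes a hypothetical setup where an LLM streams text tokens and the TTS engine accepts one sentence at a time. Tokens are buffered, and each sentence is released as soon as it is complete, so synthesis can begin before the model finishes its reply. The function name and the simple punctuation rule are illustrative, not any vendor's API.

```python
import re
from typing import Iterable, Iterator

# Sentence-ending punctuation followed by whitespace marks a flush point.
_SENTENCE_END = re.compile(r"([.!?])\s+")

def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Group streamed LLM tokens into complete sentences for a TTS engine.

    Hypothetical helper: each yielded sentence would be sent to a streaming
    TTS endpoint immediately, so speech starts before the reply is finished.
    """
    buffer = ""
    for token in tokens:
        buffer += token
        # Flush every complete sentence currently sitting in the buffer.
        while True:
            match = _SENTENCE_END.search(buffer)
            if match is None:
                break
            sentence = buffer[:match.end(1)].strip()  # keep the punctuation
            if sentence:
                yield sentence
            buffer = buffer[match.end():]  # drop the trailing whitespace
    # Whatever remains when the stream ends is the final fragment.
    if buffer.strip():
        yield buffer.strip()

# Usage with a simulated token stream.
tokens = ["Hello", " there.", " How can", " I help", " today?", " Ask", " away"]
print(list(sentences_from_tokens(tokens)))
# -> ['Hello there.', 'How can I help today?', 'Ask away']
```

Splitting on punctuation is deliberately naive. Abbreviations such as "Dr." will trigger an early flush, so production voice agents often use a proper sentence segmenter or a minimum chunk length instead.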

How We Selected These Tools

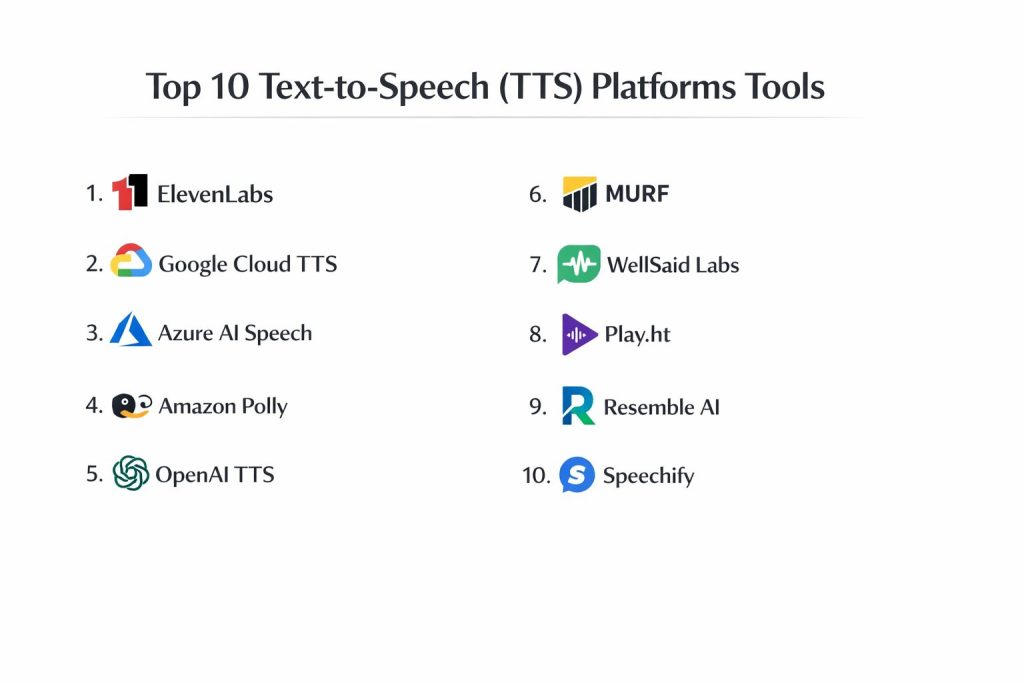

The selection of these top 10 platforms was based on a rigorous assessment of vocal naturalness, technical scalability, and enterprise-grade reliability. We prioritized tools that offer neural voice engines capable of passing the “Turing Test” for speech in professional settings. Reliability was a core metric: we evaluated each provider’s uptime history and the latency of its streaming APIs, which is critical for interactive applications. We also analyzed the breadth of language support, looking for platforms that provide high-quality localized accents rather than just generic translations.

Security and data governance played a decisive role, especially for tools intended for corporate use. We scrutinized the ownership rights of the generated audio and the privacy policies regarding the data used for voice cloning. Integration flexibility was another key factor, as modern workflows require TTS to sit within complex stacks involving CMS, video editors, and automated pipelines. Finally, we balanced the list to include both developer-centric APIs and creator-friendly studios to ensure a comprehensive overview of the market.

1. ElevenLabs

ElevenLabs is widely considered the leader in hyper-realistic, emotionally nuanced speech synthesis. Its proprietary models excel at understanding context, allowing the AI to naturally adjust its tone based on the narrative flow of the text. It is the primary choice for creators and developers who need the highest possible quality for storytelling and long-form content.

Key Features

The platform offers an advanced “Speech-to-Speech” tool that allows users to transform their own voice into a different character while keeping the original emotion. It features a massive library of thousands of community-contributed and professional voices. The API is built for high-performance streaming, supporting real-time applications with minimal lag. It also includes an automated dubbing system that can translate videos into multiple languages while preserving the original speaker’s voice profile. Additionally, the “Projects” tool provides a full-scale studio environment for managing entire audiobooks or long scripts.

Pros

Its realism and emotional depth are widely regarded as the best in the industry. Instant voice cloning is exceptionally fast and needs only a short audio sample to produce a convincing result.

Cons

The pricing can become expensive for high-volume users compared to standard cloud providers. The free tier is quite limited in terms of character count and commercial rights.

Platforms and Deployment

Web-based studio and REST API. It supports cloud deployment with high-speed global delivery.

Security and Compliance

It implements strict voice cloning verification and is GDPR compliant. It includes an AI speech classifier to identify audio generated by the platform.

Integrations and Ecosystem

Offers a robust API that integrates with various creative tools and game engines. It is a favorite for users of specialized AI video tools and automated content pipelines.

Support and Community

Provides extensive documentation and an active Discord community, alongside professional support for enterprise customers.

2. Google Cloud Text-to-Speech

Google Cloud TTS builds on DeepMind’s WaveNet research and Google’s Neural2 models to provide a highly scalable, developer-centric service. It is designed for global applications that require consistent performance across dozens of languages and regional variants.

Key Features

The service offers over 380 voices across more than 50 languages and variants. It includes “Studio” voices, high-fidelity models trained specifically for long-form narration and professional use. Users have fine-grained control through SSML and audio settings, including pitch, speaking rate, and volume gain. It supports real-time streaming as well as batch processing for large workloads. The platform also offers “Custom Voice,” which lets enterprises train a unique voice model on their own studio recordings.
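As a rough illustration of those controls, the sketch below builds a JSON body for the REST `v1/text:synthesize` method. Speaking rate, pitch (in semitones), and volume gain (in dB) are set in `audioConfig`. The voice name is only an example, and the range checks mirror the limits in Google's `AudioConfig` reference as we understand them. Authentication and the HTTP call itself are omitted.

```python
import json

def build_synthesize_request(ssml: str, voice_name: str = "en-US-Neural2-C",
                             language_code: str = "en-US",
                             speaking_rate: float = 1.0, pitch: float = 0.0,
                             volume_gain_db: float = 0.0) -> str:
    """Return a JSON body for Google Cloud TTS `v1/text:synthesize`.

    The default voice name is an example; list the available voices first.
    """
    # Validate against the documented AudioConfig ranges.
    if not 0.25 <= speaking_rate <= 4.0:
        raise ValueError("speaking_rate must be in [0.25, 4.0]")
    if not -20.0 <= pitch <= 20.0:
        raise ValueError("pitch must be in [-20.0, 20.0] semitones")
    if not -96.0 <= volume_gain_db <= 16.0:
        raise ValueError("volume_gain_db must be in [-96.0, 16.0] dB")
    body = {
        "input": {"ssml": ssml},
        "voice": {"languageCode": language_code, "name": voice_name},
        "audioConfig": {
            "audioEncoding": "MP3",
            "speakingRate": speaking_rate,
            "pitch": pitch,
            "volumeGainDb": volume_gain_db,
        },
    }
    return json.dumps(body)

# Usage: slightly faster and lower-pitched narration.
print(build_synthesize_request("<speak>Welcome back.</speak>", speaking_rate=1.1, pitch=-2.0))
```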

Pros

It offers some of the broadest global language coverage and is backed by Google’s world-class infrastructure. The pricing is very competitive for high-volume, enterprise-level synthesis.

Cons

The interface is technical and resides within the broader Google Cloud Console, which may be intimidating for non-developers. The standard voices can sometimes sound more “functional” than “emotional.”

Platforms and Deployment

Cloud-based API with SDKs for various programming languages. It integrates directly with other Google Cloud services.

Security and Compliance

Covered by Google Cloud’s compliance programs, including SOC 2 and HIPAA, and supports customers’ GDPR obligations. It offers detailed audit logs and role-based access control.

Integrations and Ecosystem

Deeply integrated with the Google Cloud ecosystem, including Dialogflow for building conversational bots. It is widely used in telephony and global enterprise software.

Support and Community

Backed by the massive Google Cloud support network, featuring exhaustive documentation and enterprise-level service agreements.

3. Microsoft Azure AI Speech

Azure AI Speech is a comprehensive enterprise solution that focuses on high-level customization and integration within the Microsoft ecosystem. It is renowned for its “Speaking Styles” feature, which allows voices to switch between modes like “newscast,” “customer service,” or “cheerful.”

Key Features

The platform provides a wide array of neural voices with sophisticated prosody and intonation. Its “Custom Neural Voice” tool is highly regarded for creating exclusive brand voices with high accuracy. The “Speech Studio” provides a visual interface for non-technical users to experiment with voice settings and styles. It supports on-premise deployment via containers, which is critical for industries with strict data residency requirements. The service also includes real-time translation and transcription capabilities within the same unified API.
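Speaking styles are requested through Azure's SSML extension element `mstts:express-as`. The sketch below assumes the `en-US-JennyNeural` voice; style support differs per voice, so check the voice list first. It wraps text in a chosen style and escapes the text so the markup stays well-formed. Sending the SSML to the service, via the Speech SDK or the REST API, is not shown.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr

def azure_style_ssml(text: str, style: str = "customerservice",
                     voice: str = "en-US-JennyNeural", lang: str = "en-US") -> str:
    """Wrap text in Azure SSML that asks the voice for a speaking style."""
    return (
        '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" '
        'xmlns:mstts="https://www.w3.org/2001/mstts" '
        f"xml:lang={quoteattr(lang)}>"
        f"<voice name={quoteattr(voice)}>"
        # escape() turns &, <, > in user text into entities.
        f"<mstts:express-as style={quoteattr(style)}>{escape(text)}</mstts:express-as>"
        "</voice></speak>"
    )

ssml = azure_style_ssml("Thanks for calling. How can I help you today?")
ET.fromstring(ssml)  # raises xml.etree.ElementTree.ParseError if malformed
```

Swapping `style` to `"newscast"` or `"cheerful"` changes the delivery without touching the text, which is what makes styles convenient for customer-facing flows.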

Pros

The ability to switch between specific speaking styles makes it well suited to professional customer-facing applications. Its container-based deployment is among the strongest on-premise options for secure environments.

Cons

Setting up the environment within Azure can be complex and requires a good understanding of cloud architecture. The pricing structure can be difficult to predict without detailed usage monitoring.

Platforms and Deployment

Cloud-based API, web studio, and on-premise containers. It is optimized for the Microsoft Azure infrastructure.

Security and Compliance

Highly compliant with global regulations, including FedRAMP, HIPAA, and ISO standards. It provides robust tools for data privacy and governance.

Integrations and Ecosystem

Seamlessly connects with Microsoft 365, Dynamics 365, and the Power Platform. It is a top choice for corporate environments already utilizing Microsoft’s stack.

Support and Community

Offers professional enterprise support and a large library of tutorials through Microsoft Learn.

4. Amazon Polly

Amazon Polly is an AWS-native service that turns text into lifelike speech, focusing on cost-effectiveness and developer ease of use. It is a staple for high-volume applications like automated news narration and telephony.

Key Features

Polly offers standard and neural TTS engines (plus long-form and generative engines for selected voices), allowing users to balance cost and quality. Its “Speech Marks” feature returns metadata about when specific words, sentences, and visemes are spoken, which makes it well suited to lip-syncing in animations. The platform supports “Brand Voice” creation, where Amazon works with a company to build a unique neural voice. It also includes a “Newscaster” speaking style for professional media delivery. The service is designed for low-latency responses, which are essential for interactive voice response (IVR) systems.
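Speech marks are requested from Polly's `SynthesizeSpeech` operation by setting `OutputFormat` to `json` and listing the desired `SpeechMarkTypes`. The response is newline-delimited JSON. The sketch below turns that output into word timings for a lip-sync or caption track. The sample data is illustrative, not real Polly output.

```python
import json
from typing import List, Tuple

def word_timings(speech_marks: str) -> List[Tuple[int, str]]:
    """Extract (time_ms, word) pairs from newline-delimited speech-mark JSON."""
    timings = []
    for line in speech_marks.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines between records
        mark = json.loads(line)
        # Keep only word-level marks; sentence/viseme/ssml marks are ignored.
        if mark.get("type") == "word":
            timings.append((mark["time"], mark["value"]))
    return timings

# Illustrative sample in the speech-mark format (values are made up).
sample = """{"time":0,"type":"sentence","start":0,"end":11,"value":"Hello world"}
{"time":6,"type":"word","start":0,"end":5,"value":"Hello"}
{"time":373,"type":"word","start":6,"end":11,"value":"world"}"""
print(word_timings(sample))  # [(6, 'Hello'), (373, 'world')]
```

Times are milliseconds from the start of the audio stream, so an animation can schedule each word (or viseme, if requested) against the playback clock.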

Pros

The pay-as-you-go pricing model is very attractive for startups and high-scale developers. It is incredibly reliable and scales effortlessly within the AWS cloud environment.

Cons

The selection of voices is smaller compared to Google or Microsoft. While high-quality, the emotional range of the voices is generally more conservative than specialized creator tools.

Platforms and Deployment

Cloud-based API within AWS. It is designed for seamless integration with serverless architectures like AWS Lambda.

Security and Compliance

Fully integrated with AWS Identity and Access Management (IAM) for secure access. It is covered by AWS compliance programs such as SOC reports and supports GDPR requirements.

Integrations and Ecosystem

Works perfectly with other AWS services like S3 for storage and Amazon Connect for cloud contact centers. It has a broad range of third-party integrations.

Support and Community

Provides extensive developer documentation and support through the AWS ecosystem, which is one of the largest in the world.

5. OpenAI TTS

OpenAI has introduced a streamlined TTS API that leverages their advanced generative models to produce clear, natural-sounding speech. It is designed to be the “voice” of the next generation of AI agents and interactive applications.

Key Features

The API offers a small set of highly optimized built-in voices (six at launch) that cover a range of tones and personas. It is built for simplicity, requiring minimal configuration to get high-quality audio output. The platform supports real-time streaming, allowing audio to be played back as it is being generated. It is optimized for English but supports dozens of other languages with high clarity. The voices are designed to be “agent-like,” meaning they are clear and easy to understand even in complex conversational scenarios.
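To show how little configuration is involved, here is a sketch that prepares, without sending, a request to the `/v1/audio/speech` endpoint using only the standard library. The model and voice defaults are examples from the published API. Check the current model list, since newer models and voices have been added since launch.

```python
import json
import urllib.request

def build_speech_request(text: str, api_key: str, voice: str = "alloy",
                         model: str = "tts-1") -> urllib.request.Request:
    """Prepare (but do not send) a POST to OpenAI's text-to-speech endpoint."""
    body = {"model": model, "voice": voice, "input": text, "response_format": "mp3"}
    return urllib.request.Request(
        "https://api.openai.com/v1/audio/speech",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )

req = build_speech_request("Your report is ready.", api_key="YOUR_API_KEY")
# Sending it would be: with urllib.request.urlopen(req) as resp: audio = resp.read()
```

The response body is the encoded audio itself. The official `openai` SDK wraps this same endpoint if you prefer not to build requests by hand.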

Pros

It is incredibly easy to implement for developers already using the OpenAI ecosystem. The voices are remarkably consistent and lack the “robotic” artifacts found in older neural models.

Cons

It lacks advanced features like voice cloning or granular SSML control. Users are limited to the predefined voices provided by the platform.

Platforms and Deployment

Cloud-based API. It is designed to be lightweight and fast for web and mobile applications.

Security and Compliance

Adheres to OpenAI’s enterprise security protocols, including SOC 2 compliance. By default, data sent through the API is not used to train OpenAI’s models.

Integrations and Ecosystem

Perfectly suited for integration with GPT-4 based applications and AI assistants. It is becoming the standard for the “voice-enabled” LLM stack.

Support and Community

Backed by one of the fastest-growing developer communities, with extensive forum support and clear API documentation.

6. Murf AI

Murf AI is an all-in-one “AI Voice Studio” designed for marketing teams and educators who need to create professional voiceovers without technical expertise. It focuses on the end-to-end production of audio for videos and presentations.

Key Features

The platform includes a built-in video editor that allows users to sync their AI voiceover directly with visual content. It offers a curated library of over 120 voices across 20+ languages, categorized by use case (e.g., “Explainer,” “Podcast”). Users can adjust pitch, speed, and add pauses through a simple, timeline-based interface. It features a “Voice Changer” that can turn a home-recorded audio file into a professional studio-quality AI voice. The platform also provides a collaborative workspace for teams to review and edit audio projects.

Pros

The user interface is exceptionally intuitive, making it accessible to non-technical users. The integrated video-syncing tool significantly speeds up the production process for social media content.

Cons

The character limits on the lower-tier plans can be restrictive for large projects. It is less focused on developer APIs compared to the cloud giants.

Platforms and Deployment

Web-based studio. It is primarily a cloud-hosted creative platform.

Security and Compliance

Provides standard data protection and is GDPR compliant. It offers enterprise plans with enhanced security features.

Integrations and Ecosystem

Integrates with tools like Canva and Adobe Creative Cloud through plugins. It is designed to fit into a creative marketing workflow.

Support and Community

Offers a helpful help center, video tutorials, and direct support for business and enterprise users.

7. WellSaid Labs

WellSaid Labs focuses on providing “Studio Quality” voices for corporate and enterprise narration. The company prides itself on a small, highly curated library of voices that are difficult to distinguish from professional human voice actors.

Key Features

The platform is built around “Avatars,” which are high-fidelity voice models designed for specific professional contexts. It features a “Pronunciation Library” where teams can define how technical terms or brand names should be spoken across all projects. The “Studio” allows for non-destructive editing, where users can regenerate specific sentences without changing the whole file. It is designed for consistency, ensuring that a brand voice sounds identical every time it is used. The API allows for automated narration within internal corporate platforms.
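WellSaid's own pronunciation library is managed inside its studio. For pipelines that call any TTS API, a hypothetical client-side stand-in is to apply a shared dictionary of phonetic respellings before the text is sent, as in the sketch below. The dictionary entries and respellings are made up for illustration.

```python
import re
from typing import Dict

def apply_pronunciations(text: str, library: Dict[str, str]) -> str:
    """Replace whole-word matches with respellings before synthesis.

    Longer terms are tried first, so "SQL Server" wins over "SQL".
    """
    if not library:
        return text
    terms = sorted(library, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b")
    return pattern.sub(lambda m: library[m.group(0)], text)

# Hypothetical team-wide library of respellings.
library = {"SQL": "sequel", "SQL Server": "sequel server", "Nginx": "engine x"}
print(apply_pronunciations("Nginx fronts SQL Server and SQL tools.", library))
# -> engine x fronts sequel server and sequel tools.
```

Matching is case-sensitive and relies on `\b` word boundaries. Terms that start or end with symbols (such as "C++") need a different pattern.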

Pros

The quality of the voices is highly consistent, making it a strong choice for corporate training and formal communications. Its ethical approach to sourcing voice talent is a major plus for ESG-conscious companies.

Cons

The voice library is smaller than many competitors, focusing on quality over quantity. The subscription price point is geared toward professional and enterprise users.

Platforms and Deployment

Web studio and REST API. It is a cloud-based service optimized for business workflows.

Security and Compliance

SOC 2 Type II certified and GDPR compliant. It places a heavy emphasis on data privacy and ethical AI usage.

Integrations and Ecosystem

Offers a clean API for enterprise integration. It is designed to work alongside professional e-learning tools and internal LMS systems.

Support and Community

Provides high-touch support with dedicated account managers for enterprise clients and clear onboarding resources.

8. Play.ht

Play.ht is a versatile platform that bridges the gap between individual creators and professional publishers. It is known for its massive selection of voices and its ability to “audiolize” entire websites and blogs instantly.

Key Features

The platform offers access to over 800 voices in 142 languages, pulling from its own models and several major cloud providers. It features an “Audio Player” that can be embedded into websites to provide an automated narration of articles. Its “Voice Generation” tool includes advanced controls for emotion and style. The platform supports high-fidelity voice cloning for both “Instant” and “Professional” use cases. It also provides a podcasting tool that allows users to distribute their AI-generated audio directly to platforms like Spotify.

Pros

It has one of the largest and most diverse voice libraries in the market. The web accessibility tools (embedded players) are a major advantage for digital publishers and bloggers.

Cons

The quality can vary significantly between the different voice engines available on the platform. The interface can sometimes feel cluttered due to the sheer number of options and tools.

Platforms and Deployment

Web-based studio and API. It is a cloud-native platform focused on content distribution.

Security and Compliance

Adheres to standard data privacy regulations and offers secure API access for developers.

Integrations and Ecosystem

Strong integrations with WordPress and other CMS platforms. It is widely used by digital media companies to improve site accessibility.

Support and Community

Provides a comprehensive knowledge base and active customer support channels.

9. Resemble AI

Resemble AI is a specialized platform that focuses on custom voice cloning and interactive AI experiences. It is widely used in the gaming and automotive industries to create dynamic, responsive voices that can change based on user interaction.

Key Features

The platform features “Resemble Fill,” which allows users to edit audio by simply typing new text, with the AI blending the new words seamlessly into the existing recording. It offers a “Voice-to-Voice” feature for creating high-detail emotional performances. The service includes a specialized tool for detecting and preventing deepfake audio, ensuring the security of cloned voices. Its API is built for low-latency, real-time interaction in virtual environments. It also supports “Neural Speech Style Transfer,” allowing one voice to adopt the emotions of another.

Pros

The “Fill” feature is a game-changer for editing existing audio without re-recording. It offers some of the most advanced technical tools for fine-tuning the emotional output of a cloned voice.

Cons

The platform has a steeper learning curve than simple studio tools. It is highly specialized, which may be overkill for basic narration or text-reading tasks.

Platforms and Deployment

Web studio and API. It supports cloud and edge-based deployment for specialized hardware.

Security and Compliance

Includes advanced watermarking and voice-biometric security. It is compliant with major global data standards.

Integrations and Ecosystem

Integrates deeply with game engines like Unreal and Unity. It is a top choice for developers building immersive digital humans and interactive simulations.

Support and Community

Offers technical support for developers and specialized consulting for enterprise voice-cloning projects.

10. Speechify

Speechify is one of the leading consumer-facing TTS platforms, originally built for accessibility and individual productivity. It has since expanded into a professional studio while remaining one of the best tools for “speed-reading” and document narration.

Key Features

The platform is famous for its “Celebrity Voices,” allowing users to have their documents read by well-known figures. It features a powerful OCR (Optical Character Recognition) tool that can turn physical books and photos into audio. The mobile app is exceptionally polished, offering a seamless experience for listening to PDFs, emails, and articles on the go. Its “Studio” tool provides professional voiceover capabilities for creators. It also includes a browser extension that can read any webpage with a single click.

Pros

The mobile and desktop user experience is among the best in the consumer category. It is a strong productivity tool, letting users “read” faster than normal by listening at accelerated playback speeds.

Cons

The subscription model is primarily geared toward individuals and can be expensive for a personal tool. The professional studio features are newer and less established than specialized production tools.

Platforms and Deployment

iOS, Android, macOS, Windows, and Browser Extension. It is a cross-platform cloud service.

Security and Compliance

Focuses on user data privacy and follows standard consumer security practices.

Integrations and Ecosystem

Integrates with Google Drive, Dropbox, and major web browsers. It is the go-to tool for students and professionals looking to optimize their information intake.

Support and Community

Offers extensive in-app support and a large community of users focused on learning and accessibility.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| 1. ElevenLabs | High-end Realism | Web / API | Cloud | Emotional Context Engine | 4.9/5 |
| 2. Google Cloud TTS | Global Scale | API / SDK | Cloud | WaveNet / Neural2 Tech | 4.7/5 |
| 3. Azure AI Speech | Enterprise / Styles | Web / API | Hybrid | SSML / Speaking Styles | 4.6/5 |
| 4. Amazon Polly | Cost / AWS Native | API / Console | Cloud | Speech Marks for Sync | 4.5/5 |
| 5. OpenAI TTS | AI Agent Voice | API | Cloud | Minimalist / Natural | 4.8/5 |
| 6. Murf AI | Marketing Video | Web Studio | Cloud | Built-in Video Editor | 4.6/5 |
| 7. WellSaid Labs | Corporate Training | Web / API | Cloud | Ethical Voice Avatars | 4.7/5 |
| 8. Play.ht | Digital Publishing | Web / API | Cloud | Large Multi-engine Library | 4.4/5 |
| 9. Resemble AI | Interactive / Gaming | Web / API | Hybrid | Resemble Fill Editing | 4.5/5 |
| 10. Speechify | Personal Productivity | Web / Mobile | Cloud | OCR / Celebrity Voices | 4.8/5 |

Evaluation & Scoring of Text-to-Speech (TTS) Platforms

The scoring below is a comparative model intended to help with shortlisting. Each criterion is scored from 1–10, and a weighted total (also on a 1–10 scale) is calculated using the weights listed. These are analyst estimates based on typical fit and common workflow requirements, not public ratings.

Weights:

- Core features – 25%

- Ease of use – 15%

- Integrations & ecosystem – 15%

- Security & compliance – 10%

- Performance & reliability – 10%

- Support & community – 10%

- Price / value – 15%

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| 1. ElevenLabs | 10 | 8 | 8 | 8 | 9 | 9 | 7 | 8.55 |
| 2. Google Cloud | 8 | 4 | 10 | 10 | 10 | 10 | 9 | 8.45 |
| 3. Azure AI | 9 | 5 | 10 | 10 | 9 | 10 | 8 | 8.60 |
| 4. Amazon Polly | 7 | 6 | 10 | 10 | 10 | 10 | 10 | 8.65 |
| 5. OpenAI TTS | 8 | 10 | 9 | 8 | 10 | 8 | 9 | 8.80 |
| 6. Murf AI | 7 | 10 | 7 | 7 | 8 | 8 | 7 | 7.65 |
| 7. WellSaid Labs | 9 | 8 | 7 | 9 | 9 | 9 | 7 | 8.25 |
| 8. Play.ht | 8 | 9 | 8 | 7 | 8 | 7 | 8 | 7.95 |
| 9. Resemble AI | 9 | 6 | 8 | 9 | 9 | 8 | 7 | 8.00 |
| 10. Speechify | 7 | 10 | 8 | 7 | 9 | 9 | 8 | 8.15 |
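The weighted totals can be recomputed directly from the weights listed above. This is a quick way to audit a row or to re-run the ranking with your own weights. The sketch below uses integer percentages to avoid floating-point drift. For example, the ElevenLabs row works out to 8.55.

```python
# Weights from the list above as integer percentages, in table column order:
# core, ease, integrations, security, performance, support, value.
WEIGHTS_PCT = (25, 15, 15, 10, 10, 10, 15)

def weighted_total(scores: tuple) -> float:
    """Weighted total (1-10 scale) for one row of 1-10 criterion scores."""
    if sum(WEIGHTS_PCT) != 100:
        raise ValueError("weights must sum to 100%")
    if len(scores) != len(WEIGHTS_PCT):
        raise ValueError("expected one score per criterion")
    # Sum in integer percent points, then divide once at the end.
    return sum(w * s for w, s in zip(WEIGHTS_PCT, scores)) / 100

rows = {
    "ElevenLabs": (10, 8, 8, 8, 9, 9, 7),
    "Google Cloud": (8, 4, 10, 10, 10, 10, 9),
    "Amazon Polly": (7, 6, 10, 10, 10, 10, 10),
}
for name, scores in sorted(rows.items(), key=lambda kv: weighted_total(kv[1]), reverse=True):
    print(f"{name}: {weighted_total(scores):.2f}")
```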

How to interpret the scores:

- Use the weighted total to shortlist candidates, then validate with a pilot.

- A lower score can mean specialization, not weakness.

- Security and compliance scores reflect controllability and governance fit, because certifications are often not publicly stated.

- Actual outcomes vary with content volume, team skills, workflows, and process maturity.

Which Text-to-Speech Platform Is Right for You?

Solo / Freelancer

Independent creators should prioritize platforms that offer a high “quality-to-speed” ratio. For YouTube creators or podcasters, a tool that includes an integrated editor and a simple licensing structure allows for professional results without needing a technical team.

SMB

Small businesses often find the most value in all-in-one studios. These platforms reduce the need for external voice talent and complicated editing software, allowing marketing or HR teams to produce training and promotional content in-house with minimal overhead.

Mid-Market

Mid-sized companies need a balance of ease and scalability. Platforms that offer collaborative workspaces and shared asset libraries are essential as teams grow, ensuring that brand voice remains consistent across different departments and regions.

Enterprise

For large organizations, security and infrastructure are the primary concerns. Choosing a platform with high-level compliance (SOC 2, HIPAA) and the ability to integrate into existing cloud environments (AWS, Azure, Google) is critical for long-term operational stability.

Budget vs Premium

Users on a tight budget should look at the pay-as-you-go models offered by major cloud providers, which are extremely cost-effective at high volumes. Premium services, while costing more, offer the advanced emotional depth and “white-glove” support that define high-end production.

Feature Depth vs Ease of Use

Developers building complex applications will prefer the depth of SSML and API controls. Conversely, creative professionals who need to move quickly will find more value in visual, timeline-based interfaces that handle the technical complexities in the background.

Integrations & Scalability

A platform’s ability to fit into a multi-tool pipeline is a major value driver. For developers, this means robust SDKs and low-latency streaming; for creators, it means easy export to video editors and CMS systems.

Security & Compliance Needs

In regulated industries like finance or healthcare, compliance is the ultimate gatekeeper. Platforms that offer on-premise deployment or strict data sovereignty options are often the only viable choice for these high-security environments.

Frequently Asked Questions (FAQs)

1. Can AI voices sound genuinely human?

Modern neural models can mimic human breathing, pauses, and emotional inflections with considerable accuracy. In many professional contexts, listeners struggle to distinguish high-end AI voices from human actors.

2. Is voice cloning legal?

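Voice cloning is generally lawful when you have the explicit, documented consent of the original speaker. The rules vary by jurisdiction, however, including right-of-publicity and biometric-data laws, so commercial projects should get legal review. Major platforms have implemented verification processes to prevent voices from being cloned without consent and to reduce misuse and fraud.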

3. What is SSML and why does it matter?

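SSML stands for Speech Synthesis Markup Language. It is a W3C standard that lets developers tell the engine exactly how to say something: where to pause, which words to emphasize, and how to adjust pitch, rate, and volume. Emotion and speaking-style control is usually offered through vendor-specific extensions, such as Azure’s speaking styles. SSML is essential for high-quality, professional results.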
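A short illustration using standard SSML 1.1 elements (`break`, `emphasis`, `prosody`) follows. Element and attribute support varies by provider and voice type, and emotion or style control relies on vendor extensions rather than the core standard. Treat this as a portable baseline; the function name and wording are hypothetical.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

def shipping_notice_ssml(order_id: str, pause_ms: int = 500) -> str:
    """Build a small SSML 1.1 document: a pause, one emphasized word, slower speech."""
    return (
        '<speak version="1.1" xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="en-US">'
        "Your order has shipped."
        f'<break time="{int(pause_ms)}ms"/>'                       # explicit pause
        'It will arrive <emphasis level="strong">tomorrow</emphasis>. '
        '<prosody rate="slow" pitch="-2st">'                       # slower, 2 semitones lower
        f"Your order number is {escape(order_id)}."               # escape user data
        "</prosody>"
        "</speak>"
    )

ssml = shipping_notice_ssml("A-1042")
ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
```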

4. How does real-time streaming work?

In real-time streaming, the audio is sent to the user in small chunks as it is being generated. This allows the playback to start almost instantly, even before the entire text has been processed by the AI.
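One way to evaluate this behavior during a pilot is to time the first chunk separately from the full response. The sketch below works on any iterator of audio bytes, for instance the chunked body of a streaming HTTP response. The simulated stream (five 100 ms chunks of 16 kHz, 16-bit mono PCM) is a stand-in, not a real provider.

```python
import time
from typing import Iterable, Iterator, Tuple

def measure_stream(chunks: Iterable[bytes]) -> Tuple[float, float, int]:
    """Return (time_to_first_chunk_s, total_time_s, total_bytes) for an audio stream."""
    start = time.perf_counter()
    first = None
    total = 0
    for chunk in chunks:
        if first is None:
            first = time.perf_counter() - start  # when playback could begin
        total += len(chunk)
        # A real player would hand `chunk` to the audio device here.
    elapsed = time.perf_counter() - start
    # With an empty stream, report the elapsed time for both figures.
    return (first if first is not None else elapsed), elapsed, total

def fake_tts_stream(n_chunks: int = 5, delay_s: float = 0.02,
                    size: int = 3200) -> Iterator[bytes]:
    """Simulated stream: 3,200 bytes is 100 ms of 16 kHz, 16-bit mono PCM."""
    for _ in range(n_chunks):
        time.sleep(delay_s)  # pretend the server is still generating
        yield b"\x00" * size

ttfb, total, nbytes = measure_stream(fake_tts_stream())
print(f"first audio after {ttfb * 1000:.0f} ms, full response {total * 1000:.0f} ms, {nbytes} bytes")
```

For interactive agents, the time to the first chunk is usually the number that matters, because playback can start while the rest of the audio is still arriving.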

5. Do I own the rights to the audio I generate?

Most professional platforms grant you full commercial rights to the audio as long as you have a paid subscription. However, free tiers often have restrictions on where and how the audio can be used.

6. Can TTS handle technical or medical terminology?

Yes, but it often requires a platform with a “Custom Lexicon” or “Pronunciation Library.” These features allow you to teach the AI how to correctly pronounce specialized words that aren’t in a standard dictionary.
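Several platforms accept the W3C Pronunciation Lexicon Specification (PLS) format. Amazon Polly, for example, stores lexicons uploaded with its `PutLexicon` operation. The sketch below generates a PLS 1.0 document that maps terms to spoken aliases; the example entries are purely illustrative.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr

PLS_NS = "http://www.w3.org/2005/01/pronunciation-lexicon"

def build_pls(aliases: dict, lang: str = "en-US") -> str:
    """Return a PLS 1.0 lexicon mapping each written form (grapheme) to an alias."""
    lexemes = "".join(
        f"<lexeme><grapheme>{escape(written)}</grapheme>"
        f"<alias>{escape(spoken)}</alias></lexeme>"
        for written, spoken in aliases.items()
    )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>'
        f'<lexicon version="1.0" xmlns="{PLS_NS}" alphabet="ipa" '
        f"xml:lang={quoteattr(lang)}>{lexemes}</lexicon>"
    )

pls = build_pls({"W3C": "World Wide Web Consortium", "TTS": "text to speech"})
ET.fromstring(pls.encode("utf-8"))  # well-formedness check
```

Aliases are the simplest entry type. PLS also allows `<phoneme>` entries with IPA transcriptions when an exact pronunciation matters.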

7. How much does a professional TTS service cost?

Costs range from a few dollars per million characters for standard voices on major cloud APIs (premium neural and studio-grade voices cost more) to monthly subscriptions of roughly $15 to $100+ for specialized creative studios. The right choice depends on your volume and quality requirements.
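A back-of-the-envelope estimate for per-character pricing is easy to script. The prices and free allowance below are placeholders, not any provider's current rates.

```python
def monthly_cost(chars_per_month: int, price_per_million: float,
                 free_chars: int = 0) -> float:
    """Estimate monthly spend under per-character pricing (placeholder rates)."""
    billable = max(0, chars_per_month - free_chars)  # free tier covers the first chars
    return billable / 1_000_000 * price_per_million

# Hypothetical: 5M characters/month at $16 per million, first 1M free.
print(f"${monthly_cost(5_000_000, 16.0, free_chars=1_000_000):.2f}")  # $64.00
```

Running the same volume through a flat subscription price shows quickly where the break-even point lies for your workload.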

8. Can I use AI voices for audiobooks?

Absolutely. A growing number of audiobooks are now narrated by AI. Platforms like ElevenLabs and WellSaid Labs are designed to maintain the consistent tone and energy that long-form narration requires.
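Long-form narration usually has to be split to respect per-request input limits. OpenAI's speech endpoint, for example, accepts up to 4,096 characters per request, and other providers set their own limits. The sketch below breaks text at sentence boundaries and only hard-splits a sentence that cannot fit on its own.

```python
import re
from typing import List

def chunk_text(text: str, max_chars: int) -> List[str]:
    """Split text into pieces of at most max_chars, preferring sentence ends."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        if not sentence:
            continue
        # Last resort: hard-split a sentence longer than the limit.
        while len(sentence) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        candidate = f"{current} {sentence}" if current else sentence
        if len(candidate) <= max_chars:
            current = candidate          # sentence fits in the current chunk
        else:
            chunks.append(current)       # close the chunk, start a new one
            current = sentence
    if current:
        chunks.append(current)
    return chunks

print(chunk_text("One two. Three four five. Six.", 20))
# -> ['One two.', 'Three four five.', 'Six.']
```

Splitting at sentence ends keeps prosody natural at the seams. Stitching the resulting audio files back together is left to the audio pipeline.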

9. What is the difference between Standard and Neural voices?

Standard voices use older techniques, such as concatenating recorded speech fragments, and tend to sound more robotic. Neural voices use deep neural networks trained on large amounts of recorded speech to generate audio directly, resulting in a much more natural and fluid sound.

10. How do I choose between an API and a Studio?

Choose an API if you want to build voice into an app or website automatically. Choose a Studio if you are an individual or team manually creating audio files for videos, podcasts, or training modules.

Conclusion

The transition from robotic speech to lifelike human expression has turned Text-to-Speech into a transformative force across digital industries. Choosing the right platform requires a deep understanding of your specific needs, whether you prioritize the raw technical power and global scale of the cloud giants or the nuanced emotional artistry of specialized creator tools. As AI continues to bridge the gap between human and machine communication, the most successful organizations will be those that use these tools to build more accessible, engaging, and localized experiences. By prioritizing interoperability and ethical usage, you can ensure that your voice-enabled projects are both technically sound and future-proof in this rapidly accelerating market.