Introduction

Genomics analysis pipelines are the specialized computational frameworks designed to transform raw sequencing data into biological insights. These systems manage the massive data loads generated by Next-Generation Sequencing (NGS) and Third-Generation Sequencing (TGS), automating the complex steps of quality control, alignment, and variant calling. By integrating sophisticated algorithms with high-performance computing, these pipelines allow researchers to pinpoint genetic mutations, understand gene expression, and identify structural variations with extreme precision across clinical and research environments.

The importance of these pipelines has grown as genomics moves from a specialized laboratory science to a core pillar of precision medicine and agricultural biotechnology. Modern pipelines are now designed to handle “population-scale” data, where thousands of whole genomes must be analyzed simultaneously to identify rare disease markers or improve crop yields. With the integration of cloud-native architectures and machine learning, today’s pipelines offer the scalability required to process terabytes of data in hours rather than weeks, while keeping analyses reproducible and their results actionable for personalized healthcare.

Real-World Use Cases

- Clinical Diagnostic Support: Hospitals utilize specialized pipelines to analyze whole-exome or whole-genome data from patients with undiagnosed rare diseases, rapidly identifying pathogenic variants that inform treatment strategies.

- Cancer Genomics and Oncology: Researchers use somatic variant calling pipelines to compare tumor and normal tissue, uncovering the specific mutations driving a patient’s cancer and selecting the most effective targeted therapies.

- Agricultural Bio-Engineering: Seed companies deploy high-throughput pipelines to analyze plant genomes, identifying traits for drought resistance, pest immunity, and increased nutritional value to ensure global food security.

- Pharmacogenomics: Pharmaceutical firms use genomic pipelines during clinical trials to determine how different genetic profiles react to new drugs, helping to predict adverse reactions and optimize dosage.

- Pathogen Surveillance: Public health agencies use viral and bacterial pipelines to track the evolution of infectious diseases, enabling rapid response to outbreaks by identifying transmission paths through genetic signatures.

Buyer Evaluation Criteria

- Computational Throughput and Scalability: Does the pipeline support parallel processing and elastic cloud scaling to handle thousands of samples without causing a computational bottleneck?

- Bioinformatic Accuracy and Sensitivity: Evaluate the pipeline’s performance in calling various types of mutations, including Single Nucleotide Polymorphisms (SNPs), small Indels, and large structural variations.

- Reproducibility and Containerization: Ensure the pipeline utilizes tools like Docker or Singularity and workflow managers like Nextflow or Snakemake to guarantee that results are consistent across different environments.

- Pipeline Flexibility and Customization: Determine if the system is a “black box” or if it allows researchers to swap out specific tools, such as using different aligners or variant callers for specific organisms.

- Data Integration Capabilities: The pipeline should be able to ingest raw FASTQ files and output standardized formats like BAM, VCF, and MAF that are compatible with downstream visualization and interpretation tools.

- Security and Patient Privacy: For clinical use, the pipeline must adhere to strict data protection standards, including encryption at rest and in transit, to protect sensitive genetic information.

- Workflow Management Support: Look for native support for industry-standard workflow languages that allow for automatic error handling, checkpointing, and resource optimization during large runs.

- Cost Efficiency and Resource Optimization: Evaluate how well the pipeline manages CPU and memory usage, as inefficient resource allocation can lead to massive cloud computing costs over time.

- Interpretation and Annotation Depth: Does the pipeline stop at variant calling, or does it include functional annotation to explain the biological significance of the identified genetic changes?

- User Interface and Accessibility: Consider whether the pipeline requires advanced command-line expertise or if it offers a graphical interface that allows biologists and clinicians to run analyses independently.
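When checking the data integration criterion above, it can help to confirm that a pipeline's VCF output is readable by ordinary tooling. The following is a minimal sketch, assuming the eight fixed tab-separated columns of a VCF data line (CHROM, POS, ID, REF, ALT, QUAL, FILTER, INFO); the `Variant` type and `parse_vcf_line` function are hypothetical names used for illustration, and a production workflow would normally rely on an established VCF library instead.

```python
from typing import Dict, List, NamedTuple, Optional


class Variant(NamedTuple):
    chrom: str
    pos: int
    ref: str
    alts: List[str]
    qual: Optional[float]
    filter_status: str
    info: Dict[str, str]


def parse_vcf_line(line: str) -> Variant:
    """Parse the eight fixed columns of one VCF data line."""
    fields = line.rstrip("\n").split("\t")
    chrom, pos, _variant_id, ref, alt, qual, filter_status, info = fields[:8]

    info_dict: Dict[str, str] = {}
    for entry in info.split(";"):
        if not entry or entry == ".":
            continue
        # Flag-style INFO keys (no "=") are stored with an empty value.
        key, _, value = entry.partition("=")
        info_dict[key] = value

    return Variant(
        chrom=chrom,
        pos=int(pos),
        ref=ref,
        alts=alt.split(","),               # multi-allelic sites list several ALTs
        qual=None if qual == "." else float(qual),
        filter_status=filter_status,
        info=info_dict,
    )


example = "chr1\t12345\trs1\tA\tG,T\t50.0\tPASS\tDP=100;DB"
print(parse_vcf_line(example))
```

If a candidate pipeline's output cannot be read by a simple parser like this, downstream annotation and visualization tools are likely to struggle as well.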

Best for: Clinical laboratories, pharmaceutical research and development departments, academic core facilities, and biotechnology firms requiring high-throughput, reproducible DNA and RNA analysis.

Not ideal for: Individual hobbyists with limited computational resources or researchers performing very simple, small-scale genetic comparisons that do not require an automated, multi-stage pipeline.

Key Trends in Genomics Analysis Pipelines

- AI and Deep Learning Integration: Modern pipelines are increasingly replacing traditional statistical variant callers with deep learning models that significantly improve the detection of complex structural variants and low-frequency mutations.

- Cloud-Native and Serverless Architectures: The shift toward cloud-agnostic pipelines allows organizations to move their genomic workloads between major cloud providers to take advantage of spot pricing and regional data residency.

- Real-Time Nanopore Analysis: New pipeline architectures are being developed to analyze data as it is generated by portable sequencers, providing near-instantaneous identification of pathogens in field environments.

- Multi-Omics Fusion: Pipelines are evolving to integrate data from multiple sources—such as genomics, transcriptomics, and proteomics—into a single unified analysis to provide a more holistic view of biological systems.

- Graph-Based Reference Genomes: Moving away from linear references, pipelines are beginning to use “pangenome” graphs that better represent human genetic diversity and improve alignment accuracy for non-European populations.

- Automated Benchmarking and QC: Automated quality control is becoming more sophisticated, using machine learning to detect “batch effects” and sequencing artifacts before they lead to false-positive biological conclusions.

- The Rise of “Bench-to-Cloud” Automation: Seamless integration between physical sequencing hardware and cloud-based analysis pipelines is reducing the manual effort required to move data from the lab to the analyst.

- Federated Genomic Analysis: To protect privacy, new pipeline frameworks allow researchers to analyze genetic data stored in different physical locations without the data ever needing to be centralized or copied.
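The federated idea in the last trend can be illustrated with a toy calculation: each site computes a summary locally and shares only that summary, never the individual genotypes. The sketch below assumes diploid genotypes encoded as pairs of 0 (reference) and 1 (alternate); the function names are hypothetical, and real federated frameworks add further safeguards, since small aggregate counts can still leak information.

```python
from typing import Iterable, List, Tuple

Genotype = Tuple[int, int]  # (allele1, allele2), 0 = reference, 1 = alternate


def local_allele_counts(genotypes: List[Genotype]) -> Tuple[int, int]:
    """Run at each site: return (alternate allele count, total allele count)."""
    alt = sum(allele for genotype in genotypes for allele in genotype)
    total = 2 * len(genotypes)
    return alt, total


def pooled_allele_frequency(site_summaries: Iterable[Tuple[int, int]]) -> float:
    """Run at the coordinator: combine per-site summaries only."""
    summaries = list(site_summaries)
    alt = sum(a for a, _ in summaries)
    total = sum(t for _, t in summaries)
    if total == 0:
        raise ValueError("no alleles observed at any site")
    return alt / total


# Genotypes stay at their site; only the two-number summaries are shared.
site_a = local_allele_counts([(0, 1), (1, 1)])
site_b = local_allele_counts([(0, 0), (0, 1), (0, 0)])
print(pooled_allele_frequency([site_a, site_b]))
```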

How We Selected These Tools (Methodology)

To select the top 10 genomics analysis pipelines, we applied a rigorous evaluation framework focused on technical robustness, industry adoption, and scientific validity. We assessed over 30 frameworks, prioritizing those that have become the standard for large-scale international research projects.

- Scientific Validation: We prioritized pipelines that are extensively cited in peer-reviewed literature and utilized by major institutions like the Broad Institute, Wellcome Sanger Institute, and the NIH.

- Workflow Portability: We looked for pipelines built on standardized workflow languages (Nextflow, WDL, Snakemake) that ensure the analysis can be moved between local servers and cloud environments without modification.

- End-to-End Capability: Our selection favors pipelines that offer a complete path from raw sequencing data to annotated variants, reducing the need for users to stitch together disparate tools.

- Developer and Community Support: We analyzed the frequency of updates, the clarity of documentation, and the responsiveness of the developer community to ensure these tools are viable for long-term production use.

- Scalability Benchmarks: We assessed the performance of these tools in handling “Big Data” scenarios, specifically looking for evidence of successful whole-genome analysis at the population level.

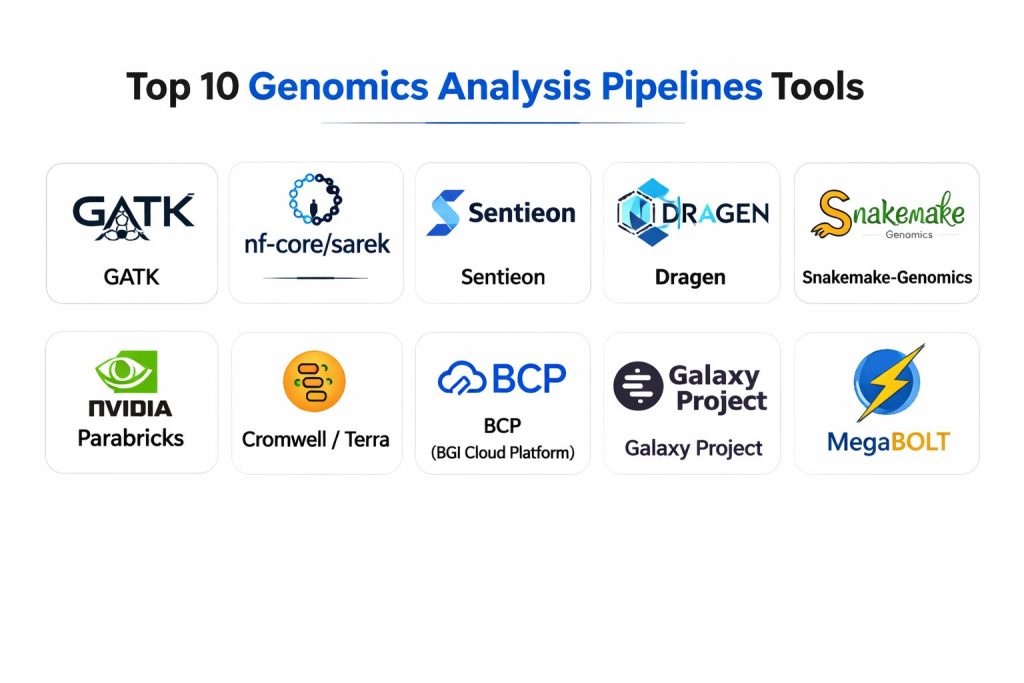

Top 10 Genomics Analysis Pipelines

1. GATK (Genome Analysis Toolkit)

Developed by the Broad Institute, GATK is the global industry standard for variant discovery in high-throughput sequencing data. It provides a robust collection of tools focused on variant calling and genotyping, with a heavy emphasis on data quality and mathematical rigor.

Key Features

- HaplotypeCaller: A sophisticated tool that calls SNPs and Indels simultaneously via local de-novo assembly of haplotypes, providing high sensitivity and specificity.

- Base Quality Score Recalibration (BQSR): Uses machine learning to identify and correct systematic errors made by the sequencer, improving the accuracy of variant calls.

- Variant Quality Score Recalibration (VQSR): A sophisticated filtering step that uses a Gaussian mixture model to separate true biological variants from technical artifacts.

- Germline and Somatic Workflows: Provides specialized Best Practices pipelines for both inherited genetic traits and mutations found specifically in cancer cells.

- Mitochondrial Analysis: Includes specialized tools for detecting low-frequency variants and heteroplasmy in the mitochondrial genome, which is critical for certain rare diseases.

- CNV and SV Detection: Advanced modules for identifying copy number variations and structural variations that are often missed by traditional small-variant callers.

- WDL and Cromwell Support: Fully integrated with the Workflow Description Language, allowing for massive parallelization on cloud platforms like Google Cloud and Terra.
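As a rough illustration of how HaplotypeCaller is typically invoked from a script, the sketch below builds the command line as a Python list. The `-R`, `-I`, `-O`, `-L`, and `-ERC GVCF` arguments are standard GATK 4 options; the helper name `haplotypecaller_cmd` and all file paths are placeholders, and a real run would add resource settings and follow the current Best Practices documentation.

```python
import shlex
from typing import List, Optional


def haplotypecaller_cmd(
    reference: str,
    bam: str,
    out_gvcf: str,
    intervals: Optional[str] = None,
) -> List[str]:
    """Build a GATK 4 HaplotypeCaller command that emits a per-sample GVCF."""
    cmd = [
        "gatk", "HaplotypeCaller",
        "-R", reference,   # reference FASTA
        "-I", bam,         # aligned, duplicate-marked reads
        "-O", out_gvcf,    # output GVCF
        "-ERC", "GVCF",    # emit reference confidence for later joint genotyping
    ]
    if intervals:
        cmd += ["-L", intervals]  # restrict calling to a region or interval file
    return cmd


# Placeholder paths; pass the list to subprocess.run(cmd, check=True) to execute.
cmd = haplotypecaller_cmd("ref.fasta", "sample.bam", "sample.g.vcf.gz", "chr20")
print(shlex.join(cmd))
```

Building the command as a list rather than a single string avoids shell-quoting problems when sample names or paths contain spaces.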

Pros

- Among the most extensively validated and cited variant-calling toolkits; it is widely treated as the “gold standard” for clinical and research applications.

- Provides a very detailed and prescriptive “Best Practices” guide that ensures reproducibility and scientific credibility for all users.

- Constant updates and a massive community mean that bugs are fixed quickly and new sequencing technologies are supported rapidly.

Cons

- The computational requirements are significant, often making it one of the more expensive pipelines to run at scale in the cloud.

- It is highly complex, with hundreds of parameters that require a deep understanding of bioinformatics to tune correctly for non-human organisms.

- Versions before GATK 4 required a paid license for commercial use; GATK 4 itself is open source, but commercial users should still budget for compute and for any paid support or managed platforms.

Platforms / Deployment

- Linux / macOS

- Cloud-native (Terra, Google Cloud, AWS)

Security & Compliance

- Supports HIPAA-compliant environments when deployed on authorized cloud platforms.

- No internal data collection; security is managed by the host infrastructure.

Integrations & Ecosystem

GATK sits at the center of the variant-calling ecosystem, and most downstream tools are designed to accept its outputs.

- Direct integration with the Terra.bio research platform.

- Outputs standardized VCF and GVCF files compatible with all major annotation tools.

- Seamlessly integrates with BWA and Picard for the initial alignment and data processing steps.

- Supported natively by major cloud providers through specialized “Genomics” API services.

Support & Community

The Broad Institute maintains a massive forum and an extensive knowledge base. The GATK community is the largest in the world, with thousands of active users contributing to troubleshooting and methodology.

2. nf-core/sarek

Part of the nf-core community, Sarek is a comprehensive pipeline for detecting variants in whole-genome or targeted-sequencing data. Built using Nextflow, it is designed for maximum portability and is particularly strong in cancer genomics, comparing tumor and normal samples.

Key Features

- Nextflow Architecture: Provides built-in support for parallelization, resume capabilities, and seamless switching between local, HPC, and cloud environments.

- Multi-Tool Variant Calling: Includes several industry-standard callers like Strelka, Mutect2, and Manta, allowing researchers to cross-verify results from different algorithms.

- Containerization by Design: Every tool used in the pipeline is pre-packaged in Docker or Singularity containers, ensuring perfect reproducibility across different machines.

- Tumor-Normal Pair Support: Specialized logic for identifying somatic mutations in cancer research, including SNVs, Indels, and structural variations.

- Automated Quality Control: Integrates MultiQC to generate comprehensive reports on data quality at every stage of the pipeline process.

- Flexible Annotation: Supports the addition of biological context to variants through integrations with VEP (Variant Effect Predictor) and SnpEff.

- Cloud Scalability: Features native support for AWS Batch and Google Cloud Life Sciences, enabling the processing of hundreds of samples simultaneously.
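Sarek reads its inputs from a CSV samplesheet, so a tumor-normal pair is mostly a matter of describing the two samples correctly. The sketch below generates such a sheet; the column names (`patient`, `sex`, `status`, `sample`, `lane`, `fastq_1`, `fastq_2`) and the convention that `status` is 0 for normal and 1 for tumor reflect the Sarek 3.x usage documentation as best understood here and should be checked against the release you run. The helper name and file paths are placeholders.

```python
import csv
import io
from typing import Dict, List

# Assumed Sarek 3.x samplesheet columns; verify against your pipeline version.
COLUMNS = ["patient", "sex", "status", "sample", "lane", "fastq_1", "fastq_2"]


def build_samplesheet(rows: List[Dict[str, str]]) -> str:
    """Return samplesheet CSV text for the given sample rows."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for row in rows:
        writer.writerow(row)
    return buffer.getvalue()


pair = [
    {"patient": "P1", "sex": "XX", "status": "0", "sample": "P1_normal",
     "lane": "L1", "fastq_1": "normal_R1.fastq.gz", "fastq_2": "normal_R2.fastq.gz"},
    {"patient": "P1", "sex": "XX", "status": "1", "sample": "P1_tumor",
     "lane": "L1", "fastq_1": "tumor_R1.fastq.gz", "fastq_2": "tumor_R2.fastq.gz"},
]
print(build_samplesheet(pair))
```

A sheet like this would typically be passed to a command along the lines of `nextflow run nf-core/sarek -profile docker --input samplesheet.csv --outdir results --tools strelka,mutect2`, with the exact tool list depending on the analysis.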

Pros

- Extremely easy to install and run due to its container-first approach and the highly standardized nf-core framework.

- Offers excellent reproducibility; a Sarek run performed today will yield the exact same results on a different server next month.

- The community-driven nature means it is constantly updated with the latest bioinformatic tools and best practices.

Cons

- Requires a basic understanding of Nextflow to troubleshoot complex workflow failures or to customize the pipeline steps.

- The comprehensive nature of the pipeline means it can generate a large volume of intermediate data files, requiring significant storage.

- While highly flexible, it may require more manual configuration for non-human genomes compared to more specialized tools.

Platforms / Deployment

- Linux / macOS / Windows (via WSL)

- Cloud (AWS, Google Cloud, Azure)

Security & Compliance

- Inherits security from Nextflow and the underlying cloud infrastructure.

- Supports role-based access control when used with Nextflow Tower (Seqera).

Integrations & Ecosystem

Sarek is built to integrate with the wide array of tools available in the nf-core and Nextflow ecosystems.

- Seamless integration with Nextflow Tower for pipeline monitoring and management.

- Compatible with any S3-compatible storage for data input and output.

- Supports a wide range of reference genomes through the iGenomes resource.

- Direct export of QC data to interactive MultiQC reports for easy analysis.

Support & Community

Sarek is supported by the vibrant nf-core community via Slack and GitHub. The documentation is exceptional, providing clear guides for both beginners and advanced bioinformaticians.

3. Sentieon

Sentieon provides highly optimized, drop-in replacements for standard genomics tools like GATK and BWA. It is designed for maximum speed and computational efficiency, allowing organizations to process genomic data significantly faster and at a lower cost than open-source alternatives.

Key Features

- Ultra-Fast Alignment: A high-performance implementation of the BWA-MEM algorithm that maintains perfect consistency with the original while running several times faster.

- GATK Matching Results: Engineered to produce the exact same mathematical results as the GATK Best Practices, ensuring scientific validity without the slow runtimes.

- Haplotyper and DNAscope: Advanced variant callers that provide superior accuracy and speed for germline and somatic variant detection across various sequencing technologies.

- Resource Optimization: Optimized for modern CPU architectures, drastically reducing the memory and thread overhead compared to Java-based tools.

- Somatic and Structural Variant Tools: Includes specialized algorithms for cancer research and large-scale genetic rearrangements that are optimized for high-depth sequencing.

- Joint Genotyping at Scale: Capable of performing joint calling on thousands of samples simultaneously, a task that often crashes traditional pipelines.

- Cloud and On-Premise Flexibility: A lightweight software package that can be easily deployed in containers, on local servers, or across large cloud clusters.
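A claim of matching results is straightforward to spot-check by comparing two call sets site by site. The following is a minimal sketch, not a vendor-supplied method: it keys each variant on chromosome, position, reference allele, and alternate allele, and counts what the two VCFs share. It assumes both files are already normalized in the same way; dedicated benchmarking tools handle representation differences that this sketch ignores.

```python
from typing import Dict, Set, Tuple

VariantKey = Tuple[str, int, str, str]  # (chrom, pos, ref, alt)


def variant_keys(vcf_text: str) -> Set[VariantKey]:
    """Collect one key per alternate allele from VCF text, skipping headers."""
    keys: Set[VariantKey] = set()
    for line in vcf_text.splitlines():
        if not line or line.startswith("#"):
            continue
        chrom, pos, _variant_id, ref, alt = line.split("\t")[:5]
        for allele in alt.split(","):
            keys.add((chrom, int(pos), ref, allele))
    return keys


def concordance(calls_a: Set[VariantKey], calls_b: Set[VariantKey]) -> Dict[str, int]:
    """Count variants shared by both call sets and unique to each."""
    return {
        "shared": len(calls_a & calls_b),
        "only_a": len(calls_a - calls_b),
        "only_b": len(calls_b - calls_a),
    }


vcf_a = "##fileformat=VCFv4.2\nchr1\t100\t.\tA\tG\nchr1\t200\t.\tC\tT\n"
vcf_b = "##fileformat=VCFv4.2\nchr1\t100\t.\tA\tG\nchr1\t300\t.\tG\tA\n"
print(concordance(variant_keys(vcf_a), variant_keys(vcf_b)))
```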

Pros

- Drastic reduction in turnaround time; whole genomes that take days in other pipelines can often be completed in hours with Sentieon.

- Significant cost savings in the cloud; because it uses fewer computational resources and finishes faster, the total compute bill is much lower.

- Guaranteed consistency with industry standards, making it easy for regulated clinical labs to switch without re-validating their entire methodology.

Cons

- It is a proprietary, paid software product, which may not be suitable for labs with limited budgets or those committed to purely open-source tools.

- The “black box” nature of proprietary software means that researchers cannot inspect or modify the underlying code of the algorithms.

- Requires license management, which adds a small layer of administrative overhead for the IT department.

Platforms / Deployment

- Linux

- Cloud (AWS, Google Cloud, Azure, Alibaba Cloud)

Security & Compliance

- Enterprise-grade security with support for encrypted data streams.

- Widely used in HIPAA-compliant clinical environments and by major diagnostic companies.

Integrations & Ecosystem

Sentieon is designed as a “drop-in” replacement, making it compatible with almost any existing bioinformatic infrastructure.

- Fully compatible with Nextflow, WDL, and Snakemake workflow managers.

- Supported by major cloud genomics platforms like DNAnexus and Illumina Connected Analytics.

- Directly replaces BWA, Picard, and GATK in existing scripts without changing the workflow logic.

- Provides standardized outputs (BAM, VCF) that work with all downstream interpretative software.

Support & Community

Sentieon provides professional, high-priority technical support for its customers. While it does not have the same public forum size as GATK, its direct support from engineers is highly regarded by enterprise users.

4. Dragen (Illumina)

Dragen is a hardware-accelerated genomics platform that uses Field Programmable Gate Array (FPGA) technology to achieve record-breaking speeds. It is the core analysis engine for Illumina’s latest sequencers, offering a highly integrated and accurate solution for large-scale genomic operations.

Key Features

- Hardware Acceleration: Uses specialized FPGA chips to perform alignment and variant calling, delivering results in a fraction of the time of CPU-based systems.

- Comprehensive Variant Detection: Includes high-accuracy callers for SNPs, Indels, Structural Variants (SVs), Copy Number Variations (CNVs), and repeat expansions.

- Methylation and RNA-Seq Support: Extends beyond basic DNA analysis to provide hardware-accelerated pipelines for epigenetics and transcriptomics.

- Machine Learning Refinement: Uses AI models to improve variant calling accuracy, particularly in “difficult-to-map” regions of the human genome.

- On-Instrument Analysis: Integrated directly into Illumina sequencers like the NovaSeq, allowing for “streaming” analysis as the data is being generated.

- Multimodal Pipelines: Capable of handling Whole Genome (WGS), Whole Exome (WES), and Targeted Panels within a single unified framework.

- Lossless Compression: Includes ORA compression technology, which reduces the size of raw genomic data by up to 80% while remaining fully reversible.

Pros

- Unmatched speed; Dragen is consistently among the fastest genomic analysis platforms on the market.

- Extremely high accuracy, often topping industry benchmarks like the PrecisionFDA challenges for variant calling.

- Highly integrated; for labs using Illumina hardware, Dragen provides the most seamless “sequencer-to-report” experience.

Cons

- Requires specialized hardware (FPGA) or specific cloud instances that support FPGA acceleration, which can limit deployment options.

- It is a proprietary ecosystem, which may lead to vendor lock-in for organizations that want to use a variety of sequencing technologies.

- The software and hardware costs are substantial, making it best suited for high-volume genomic centers.

Platforms / Deployment

- On-Premise (Dragen Servers)

- Cloud (Illumina Connected Analytics, AWS via FPGA instances)

Security & Compliance

- ISO 27001 and SOC 2 Type II compliant.

- HIPAA and GDPR support within the Illumina Connected Analytics cloud environment.

Integrations & Ecosystem

Dragen is the center of the Illumina software ecosystem but maintains compatibility with industry standards.

- Native integration with BaseSpace Sequence Hub and Illumina Connected Analytics.

- Outputs standard BAM and VCF files for use in third-party interpretation tools.

- Supports integration with Nextflow for orchestrating complex, multi-stage bioinformatics workflows.

- Direct bridges to clinical interpretation platforms like Emedgene and Fabric Genomics.

Support & Community

Illumina provides world-class professional support, including global field application scientists. The community is focused on high-throughput clinical and industrial users.

5. Snakemake-Genomics

Snakemake-Genomics is not a single pipeline but a highly flexible framework and collection of “best practices” templates for building custom genomic workflows. It is the tool of choice for researchers who need total control over every step of their analysis and demand high levels of readability and reproducibility.

Key Features

- Python-Based Syntax: Uses a readable, Python-based language that allows researchers to easily integrate custom scripts and logic into their pipelines.

- Automatic Parallelization: Analyzes the dependencies between tasks and automatically scales the workload across all available CPU cores or cluster nodes.

- Conda Integration: Automatically manages software dependencies for every step, ensuring the correct versions of tools are installed and used for every run.

- Transparent Execution: Provides detailed logs and “directed acyclic graphs” (DAGs) to visualize the exact path data took through the pipeline.

- Modular Rule System: Allows users to build pipelines by combining “rules” that can be easily shared, reused, and version-controlled.

- Cloud and Grid Support: Features native integration with SLURM, SGE, and Kubernetes, as well as Google Cloud and AWS.

- Report Generation: Automatically generates interactive HTML reports that include quality control plots, tool versions, and execution statistics.
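The automatic parallelization described above follows from treating the workflow as a dependency graph: any tasks whose inputs are already available can run at the same time. The sketch below illustrates that idea with Python's standard `graphlib` module (Python 3.9+); it is not Snakemake's own scheduler, and the task names are invented for the example.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def parallel_batches(dependencies: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into batches; every task in a batch can run concurrently.

    `dependencies` maps each task to the set of tasks it must wait for.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose dependencies are done
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Hypothetical two-sample workflow: trim -> align per sample, then joint steps.
workflow = {
    "align_A": {"trim_A"},
    "align_B": {"trim_B"},
    "call_variants": {"align_A", "align_B"},
    "report": {"call_variants"},
}
for batch in parallel_batches(workflow):
    print(batch)
```

In this example the two trimming jobs form the first batch and the two alignments the second, which is the kind of per-sample parallelism a workflow manager exploits across cores or cluster nodes.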

Pros

- Provides the highest level of flexibility and customization; if you can script it in Python or Bash, you can integrate it into a Snakemake pipeline.

- Excellent for academic research where non-standard organisms or experimental algorithms are frequently used.

- Completely free and open-source, with a philosophy that prioritizes transparency and scientific peer-review.

Cons

- Requires a higher level of programming knowledge compared to “push-button” pipelines like Dragen or Sarek.

- Building a production-grade pipeline from scratch in Snakemake can be time-consuming compared to using pre-built nf-core workflows.

- Troubleshooting errors in complex, highly branched pipelines can be difficult for researchers who are not comfortable with command-line environments.

Platforms / Deployment

- Linux / macOS / Windows (via WSL)

- Local HPC clusters / Kubernetes / Cloud

Security & Compliance

- Security is managed by the user’s local or cloud environment.

- No centralized tracking, making it ideal for air-gapped or high-security research environments.

Integrations & Ecosystem

Snakemake is built to be the “glue” that connects every imaginable bioinformatics tool.

- Direct integration with the Bioconda and BioContainers ecosystems.

- Compatible with all standard genomic data formats (FASTQ, BAM, VCF).

- Supports R and Jupyter Notebooks for integrated data visualization and analysis.

- Can be used to orchestrate complex “meta-pipelines” that combine multiple existing tools.

Support & Community

Snakemake has a very active community of academic researchers. Support is primarily found on GitHub and Stack Overflow, and the creator remains highly involved in guiding the project’s development.

6. NVIDIA Parabricks

Parabricks is a GPU-accelerated suite of tools for genomic analysis that leverages NVIDIA’s massive parallel processing power. It provides a significant speed boost for standard pipelines like GATK, making it an ideal solution for organizations that already have access to GPU hardware.

Key Features

- GPU Acceleration: Re-engineers standard algorithms (BWA, GATK, DeepVariant) to run on NVIDIA GPUs, offering 30-50x speed improvements over CPU versions.

- DeepVariant Integration: Includes a highly optimized version of Google’s DeepVariant, providing state-of-the-art accuracy through deep learning.

- Somatic and Germline Workflows: Comprehensive support for both clinical diagnostics and cancer research, including high-accuracy caller options.

- Low Cost of Ownership: By finishing tasks faster on fewer machines, it drastically reduces the total cloud compute cost for whole-genome sequencing.

- High-Throughput Joint Calling: Optimized to handle the massive memory requirements of joint genotyping for large population studies.

- Dynamic Read Mapping: Real-time alignment that scales perfectly with the number of GPUs in the system, from a single card to a massive DGX cluster.

- Containerized Deployment: Provided as a Docker image, making it easy to deploy on local GPU servers or in the cloud using Kubernetes.

Pros

- Unmatched efficiency for organizations with GPU resources; it provides Dragen-level speeds on general-purpose GPU hardware.

- Superior accuracy for Indel calling when using the integrated GPU-accelerated DeepVariant.

- Significant reduction in the “carbon footprint” of genomics; by finishing faster and using less hardware, it consumes less energy than traditional CPU clusters.

Cons

- Requires NVIDIA GPUs (T4, A100, V100, etc.), which may not be available in all legacy HPC environments.

- While extremely fast, the cost of GPU instances in the cloud can be higher than CPU instances if the pipeline is not managed efficiently.

- Some niche bioinformatic tools are not yet ported to the GPU architecture, potentially requiring hybrid GPU-CPU workflows.
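The cost trade-off in the second point above comes down to simple arithmetic: a GPU instance is cheaper per sample only if its speedup exceeds the ratio of hourly prices. The sketch below makes that explicit. All prices and runtimes are placeholder values chosen for illustration, not measured benchmarks or real cloud rates.

```python
def run_cost(hourly_rate: float, runtime_hours: float) -> float:
    """Compute cost of one run: price per hour times hours used."""
    return hourly_rate * runtime_hours


def break_even_speedup(cpu_hourly_rate: float, gpu_hourly_rate: float) -> float:
    """Speedup the GPU run needs for its cost to equal the CPU run's cost."""
    return gpu_hourly_rate / cpu_hourly_rate


# Placeholder figures only: substitute your own rates and measured runtimes.
cpu_rate, cpu_hours = 2.0, 24.0
gpu_rate, gpu_hours = 30.0, 1.0

print("CPU run cost:", run_cost(cpu_rate, cpu_hours))
print("GPU run cost:", run_cost(gpu_rate, gpu_hours))
print("Break-even speedup:", break_even_speedup(cpu_rate, gpu_rate))
```

With these placeholder numbers the GPU run is cheaper because its 24x speedup exceeds the 15x break-even point; a pipeline that leaves GPUs idle during CPU-bound steps can easily fall below it.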

Platforms / Deployment

- Linux (with NVIDIA drivers)

- Cloud (AWS, Google Cloud, Azure via GPU-enabled instances)

Security & Compliance

- Supports HIPAA-compliant workflows on cloud platforms.

- No external data egress; the software runs entirely within the user’s controlled environment.

Integrations & Ecosystem

Parabricks is designed to work within existing enterprise and research pipelines.

- Fully compatible with Nextflow and WDL workflow managers.

- Integrates with Google Cloud Life Sciences and AWS Batch.

- Produces standard VCF and BAM files compatible with all downstream tools.

- Supports integration with tertiary analysis platforms for clinical interpretation.

Support & Community

NVIDIA provides professional enterprise support and maintains an active developer forum. They are heavily invested in the “AI in Healthcare” space, ensuring that the tool stays at the cutting edge of genomic science.

7. Cromwell / Terra

Cromwell is the workflow execution engine developed by the Broad Institute to power Terra, a massive cloud-native platform for genomic research. It is designed to run WDL-based pipelines at an extreme scale, managing millions of tasks across distributed cloud resources.

Key Features

- WDL Native: The primary engine for the Workflow Description Language, providing the most stable and feature-complete support for GATK Best Practices.

- Massive Scalability: Designed for projects on the scale of a million genomes, orchestrating data across thousands of virtual machines.

- Call Caching: Automatically recognizes when a step has already been completed with the same parameters and skips it, saving massive amounts of time and money during re-runs.

- Multi-Cloud Support: Can execute workflows on Google Cloud, AWS, and local HPC clusters simultaneously.

- Error Handling and Retries: Sophisticated logic for dealing with preemptible (spot) instances, automatically retrying failed tasks on more stable hardware.

- Terra Integration: A “point-and-click” cloud interface that allows researchers to run complex pipelines without writing a single line of command-line code.

- Data Library Access: Provides direct, secure access to massive public genomic datasets like TCGA and 1000 Genomes.
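The call caching feature above can be pictured as a lookup keyed on everything that determines a task's result. The sketch below is a simplified illustration of that idea, not Cromwell's actual implementation: it hashes the task name, command, input fingerprints, and container image, and skips execution when the same key has been seen before. The class and function names are hypothetical.

```python
import hashlib
import json
from typing import Any, Callable, Dict


def cache_key(task_name: str, command: str, inputs: Dict[str, str], container: str) -> str:
    """Hash everything that determines a task's output into one key."""
    payload = json.dumps(
        {"task": task_name, "command": command, "inputs": inputs, "container": container},
        sort_keys=True,  # stable ordering so equal inputs give equal keys
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class CallCache:
    """In-memory cache that reuses results of previously seen task calls."""

    def __init__(self) -> None:
        self._results: Dict[str, Any] = {}
        self.hits = 0

    def run(self, task_name: str, command: str, inputs: Dict[str, str],
            container: str, execute: Callable[[], Any]) -> Any:
        key = cache_key(task_name, command, inputs, container)
        if key in self._results:
            self.hits += 1          # identical call seen before: skip execution
            return self._results[key]
        result = execute()          # first time: actually run the task
        self._results[key] = result
        return result
```

Here `inputs` is assumed to map input names to content fingerprints (for example file checksums), so that editing an input file changes the key and forces a real re-run.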

Pros

- The most powerful tool for “Population Scale” genomics; if you need to process 100,000 genomes, Cromwell is the standard choice.

- The Terra interface makes high-end bioinformatics accessible to biologists and clinicians who are not comfortable with the command line.

- Excellent cost management features, specifically around the use of cheap “preemptible” cloud instances.

Cons

- Setting up a standalone Cromwell server on-premise can be complex and requires significant IT expertise.

- WDL is generally considered less flexible than Python-based Snakemake or Groovy-based Nextflow for non-standard pipelines.

- Users can become “locked into” the Terra ecosystem, making it harder to move to a different cloud strategy later.

Platforms / Deployment

- Cloud (Terra.bio, Google Cloud, AWS)

- On-premise (Linux)

Security & Compliance

- FISMA Moderate, SOC 2, and HIPAA compliant.

- Industry-leading security for managing sensitive genetic data in the cloud.

Integrations & Ecosystem

Cromwell and Terra are designed as a complete ecosystem for the modern genomic researcher.

- Native integration with GATK and Picard.

- Supports Jupyter Notebooks for interactive data exploration within the cloud environment.

- Connects to the Dockstore for easy importing of standardized, versioned pipelines.

- Direct bridges to data visualization tools like IGV (Integrative Genomics Viewer).

Support & Community

The Broad Institute provides exceptional documentation and support via the Terra community. It is the primary platform for the world’s largest genomic consortia, ensuring a massive and highly knowledgeable user base.

8. BCP (BGI Cloud Platform)

Developed by BGI Genomics, BCP is a highly integrated, cloud-native platform designed for large-scale sequencing operations. It offers specialized pipelines for whole-genome, whole-exome, and non-invasive prenatal testing (NIPT), optimized for BGI’s DNBSEQ technology.

Key Features

- DNBSEQ Optimization: Specifically tuned to handle the unique error profiles and data structures of BGI’s proprietary sequencing technology.

- End-to-End Clinical Workflows: Provides “locked” pipelines for clinical applications, ensuring that every step meets strict diagnostic standards.

- High-Throughput Architecture: Designed to support BGI’s massive sequencing centers, capable of managing thousands of samples in a centralized dashboard.

- NIPT Specialized Pipelines: Includes world-leading algorithms for non-invasive prenatal testing, providing high accuracy for fetal chromosomal abnormalities.

- Integrated Interpretation: Bridges the gap between raw data and clinical reports with built-in annotation and variant interpretation tools.

- Multi-Region Cloud Support: Available on various cloud infrastructures globally, allowing for data residency compliance in different countries.

- Visual Workflow Designer: Allows users to build and modify pipelines through a drag-and-drop interface.

Pros

- The best-performing pipeline for organizations utilizing BGI’s cost-effective DNBSEQ sequencing platform.

- Provides a very high level of “out-of-the-box” automation for clinical laboratories.

- Highly competitive pricing models, particularly for large-scale population studies.

Cons

- Primarily optimized for BGI hardware, which may result in lower performance for data generated on Illumina or PacBio sequencers.

- The ecosystem is less open than nf-core or Snakemake, making it harder to integrate custom, third-party bioinformatic tools.

- Public documentation in English has historically been less comprehensive than that of GATK or Nextflow.

Platforms / Deployment

- Cloud-native (BGI Cloud)

- Hybrid deployment for large institutional partners.

Security & Compliance

- Compliant with Chinese and international data security standards.

- Offers specialized deployment options to meet strict local data residency laws.

Integrations & Ecosystem

BCP is designed as a vertically integrated ecosystem for the BGI sequencing platform.

- Direct integration with BGI’s DNBSEQ sequencers.

- Connects to the BGI variant database for enhanced clinical annotation.

- Supports standard genomic formats for data export to third-party tools.

Support & Community

BGI provides professional technical support and field application scientists for its enterprise clients. The community is focused heavily on large-scale clinical and population-level research.

9. Galaxy Project

Galaxy is an open-source, web-based platform that makes genomics accessible to researchers without programming experience. It provides a massive library of tools and pre-built pipelines that can be run through a simple, visual interface.

Key Features

- No-Code Interface: Allows users to build complex bioinformatic workflows through a drag-and-drop web portal.

- Massive Tool Library: Features thousands of pre-wrapped tools for genomics, proteomics, metabolomics, and more.

- History and Traceability: Automatically records every step, parameter, and tool version, ensuring total reproducibility for publication.

- Shared Workflows: Allows researchers to publish their entire analysis pipeline, enabling others to “import” and run it on their own data with one click.

- Interactive Visualization: Includes built-in tools for viewing BAM files, plotting gene expression, and exploring phylogenetic trees.

- Public and Private Instances: Users can use the free, public “UseGalaxy.org” servers or deploy their own private instance on local hardware.

- Training Resources: Features the “Galaxy Training Network,” a massive collection of tutorials covering every aspect of genomic analysis.

Pros

- One of the most accessible platforms for biologists and students who are not comfortable with the Linux command line.

- Completely free for public use, making it an essential resource for researchers in developing countries or underfunded labs.

- Provides an incredible level of transparency and reproducibility, which is ideal for scientific communication and peer-review.

Cons

- Public servers can have long wait times (queues) for large whole-genome jobs due to high demand.

- Not as efficient as command-line tools for massive “population-scale” studies involving thousands of samples.

- Deploying and maintaining a private Galaxy instance on a local cluster requires a dedicated IT specialist.

Platforms / Deployment

- Web-based (UseGalaxy.org, UseGalaxy.eu)

- Local deployment via Docker or manual installation.

Security & Compliance

- Security is managed by the specific instance provider.

- Private instances can be made HIPAA-compliant within a secure institutional network.

Integrations & Ecosystem

Galaxy is designed to be an “integrator” of all existing bioinformatic tools.

- Direct access to the Tool Shed, a “store” for thousands of community-developed tools.

- Connectors for major genomic data repositories like SRA and ENA.

- Integrates with Jupyter and RStudio for advanced users who want to switch from visual to code-based analysis.

Support & Community

Galaxy has one of the most supportive and welcoming communities in science. Support is provided through an active Gitter chat, mailing lists, and an extensive tutorial network.

10. MegaBOLT (MGI)

MegaBOLT is a hardware-accelerated bioinformatics workstation and software suite developed by MGI (a subsidiary of BGI). It provides ultra-fast analysis for DNBSEQ data, offering a powerful alternative to Dragen for high-throughput labs.

Key Features

- Hardware-Software Co-Design: Uses specialized CPU-FPGA acceleration to process a whole genome in under two hours.

- DNBSEQ-Specific Algorithms: Features alignment and variant calling algorithms that are specifically optimized for the DNBSEQ sequencing chemistry.

- Comprehensive Multi-Omics: Includes pipelines for Whole Genome, Whole Exome, RNA-Seq, and even single-cell analysis.

- High Accuracy: Frequently ranks at the top of performance benchmarks for variant calling accuracy in human genomes.

- User-Friendly Dashboard: Features a graphical management system that allows laboratory staff to monitor multiple runs simultaneously.

- Local and Cloud Deployment: Available as a standalone workstation for labs needing data sovereignty or as a cloud-based service.

- Custom Panel Support: Allows for the rapid setup and analysis of targeted sequencing panels for oncology or reproductive health.

Pros

- Incredible speed for organizations using MGI sequencers; it removes the “analysis bottleneck” for high-output labs.

- Significant cost savings compared to traditional CPU clusters, as it requires much less physical hardware to process the same amount of data.

- Excellent support for non-human genomes, including specialized modules for agricultural and environmental research.

Cons

- Best performance is limited to MGI/BGI data; it is less optimized for data from Illumina or Pacific Biosciences.

- Requires the purchase of specialized MegaBOLT hardware for on-premise acceleration.

- The software ecosystem is less “open” than the nf-core or Galaxy communities.

Platforms / Deployment

- Standalone Workstation (Linux/FPGA)

- Cloud-native (ZCloud)

Security & Compliance

- Supports local deployment for maximum data privacy and “air-gapped” security.

- Compliant with major international data protection standards.

Integrations & Ecosystem

MegaBOLT is a core part of the MGI “Total Solution” for genomics.

- Direct integration with MGI DNBSEQ sequencing instruments.

- Outputs standard BAM and VCF files for compatibility with tertiary analysis platforms.

- Supports integration into larger institutional LIMS (Laboratory Information Management Systems).

Support & Community

MGI provides professional technical support and extensive training for MegaBOLT users. The community is focused on high-throughput industrial and clinical users across Asia and Europe.

Comparison Table (Top 10)

| Tool Name | Best For | Architecture | Hardware Req. | Accuracy Standard |
| --- | --- | --- | --- | --- |
| GATK | Industry Standard / Research | CPU-based | High RAM | Gold Standard |
| nf-core/sarek | Portability / Reproducibility | Nextflow/Docker | Standard CPU | High (Multi-caller) |
| Sentieon | Cost-efficient Speed | CPU-Optimized | Low Resource | GATK Match |
| Dragen | Max Speed / Illumina Labs | FPGA-Accelerated | Specialized FPGA | Elite |
| Snakemake | Custom / Academic Research | Python/Conda | Standard CPU | User-Defined |
| Parabricks | GPU-Accelerated Labs | GPU-Accelerated | NVIDIA GPU | Elite (DeepVariant) |
| Cromwell | Population-Scale Cloud | WDL/Cloud | High Scalability | GATK Native |
| BCP | BGI Labs / Clinical NIPT | Cloud-Native | Cloud | Clinical Grade |
| Galaxy Project | Non-programmers / Teaching | Web-based | Low (Public) | Research Grade |
| MegaBOLT | MGI Labs / High Throughput | FPGA-Accelerated | Specialized FPGA | Elite |

Evaluation & Scoring of Genomics Analysis Pipelines

The scoring below is a comparative model intended to help shortlisting. Each criterion is scored from 1–10, then a weighted total from 0–10 is calculated using the weights listed. These are analyst estimates based on typical fit and common workflow requirements, not public ratings.

Weights:

Speed – 25%

Accuracy – 25%

Ease of use – 15%

Scalability – 15%

Cost efficiency – 10%

Support – 10%

| Tool Name | Speed (25%) | Accuracy (25%) | Ease of Use (15%) | Scalability (15%) | Cost Eff. (10%) | Support (10%) | Total |
| --- | --- | --- | --- | --- | --- | --- | --- |
| GATK | 4 | 10 | 4 | 10 | 5 | 10 | 7.1 |
| nf-core/sarek | 6 | 9 | 8 | 9 | 8 | 9 | 8.0 |
| Sentieon | 9 | 10 | 7 | 10 | 9 | 8 | 9.0 |
| Dragen | 10 | 10 | 8 | 9 | 6 | 9 | 9.1 |
| Snakemake | 5 | 9 | 5 | 8 | 10 | 8 | 7.3 |
| Parabricks | 10 | 10 | 7 | 9 | 9 | 8 | 9.1 |
| Cromwell | 5 | 10 | 6 | 10 | 7 | 9 | 7.8 |
| BCP | 8 | 9 | 9 | 9 | 8 | 7 | 8.5 |
| Galaxy Project | 2 | 8 | 10 | 4 | 10 | 10 | 6.6 |
| MegaBOLT | 10 | 9 | 8 | 9 | 8 | 7 | 8.8 |
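The weighted total can be reproduced with a few lines of Python. This is a minimal sketch, assuming the weights shown in the table's column headers; the dictionary keys and the `weighted_total` helper are illustrative names, not part of any tool discussed here.

```python
# Weights taken from the column headers of the scoring table.
WEIGHTS = {
    "speed": 0.25,
    "accuracy": 0.25,
    "ease_of_use": 0.15,
    "scalability": 0.15,
    "cost_efficiency": 0.10,
    "support": 0.10,
}


def weighted_total(scores: dict[str, float]) -> float:
    """Return the weighted 0-10 total for one tool's 1-10 criterion scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    return sum(WEIGHTS[name] * value for name, value in scores.items())


# Criterion scores copied from the GATK and Parabricks rows of the table.
gatk = {"speed": 4, "accuracy": 10, "ease_of_use": 4,
        "scalability": 10, "cost_efficiency": 5, "support": 10}
parabricks = {"speed": 10, "accuracy": 10, "ease_of_use": 7,
              "scalability": 9, "cost_efficiency": 9, "support": 8}

print(f"GATK: {weighted_total(gatk):.1f}")
print(f"Parabricks: {weighted_total(parabricks):.1f}")
```

Changing the values in `WEIGHTS` is a quick way to re-rank the tools against your own priorities, for example by raising the cost weight for a budget-constrained lab.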

How to interpret the scores:

- Use the weighted total to shortlist candidates, then validate with a pilot.

- A lower score can mean specialization, not weakness.

- Security and compliance are not scored separately, because certifications are often not publicly stated; assess controllability and governance fit during your own evaluation.

- Actual outcomes vary with dataset size, team skills, and process maturity.

Which Genomics Analysis Pipeline Is Right for You?

Academic Researcher

If you are working on novel organisms or need to modify your pipeline frequently, Snakemake-Genomics or nf-core/sarek are the best choices. They offer the transparency and flexibility required for scientific discovery and ensure your results are reproducible for publication.

Clinical Diagnostic Lab

For labs where speed, accuracy, and regulatory compliance are paramount, Dragen or Sentieon are the preferred options. They provide “locked” workflows and extreme accuracy that minimize the risk of clinical error and ensure rapid turnaround times for patient reports.

Large-Scale Genome Center

High-throughput institutions processing thousands of samples should look at Sentieon or NVIDIA Parabricks. These tools provide the highest computational efficiency, drastically reducing the physical hardware footprint and cloud compute costs associated with massive datasets.

Non-Programmer / Biologist

If you do not have command-line experience but need to perform genomic analysis, Galaxy Project is the strongest choice. It provides a welcoming, visual interface and a wealth of training materials to help you move from raw data to biological insight without writing code.

Frequently Asked Questions (FAQs)

What is the primary difference between a “Workflow Manager” and a “Pipeline”?

A workflow manager (like Nextflow or Snakemake) is the engine that runs the analysis, while a pipeline is the specific sequence of biological tools (aligners, variant callers) that the engine executes to process the data.
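The distinction can be illustrated with a toy Python sketch. It is not how Nextflow or Snakemake are implemented; the step names and the `run_step` and `run_pipeline` helpers are hypothetical. The dictionary plays the role of the pipeline (what to run and in which dependency order), while the function plays the role of the workflow manager (the engine that resolves the order and executes each step).

```python
from graphlib import TopologicalSorter

# The "pipeline": named steps plus the steps each one depends on.
# Step names are illustrative placeholders, not real tool invocations.
PIPELINE = {
    "quality_control": set(),
    "alignment": {"quality_control"},
    "variant_calling": {"alignment"},
    "annotation": {"variant_calling"},
}


def run_step(name: str) -> str:
    """Stand-in for launching a real tool; here it only reports the step."""
    return f"ran {name}"


def run_pipeline(pipeline: dict[str, set[str]]) -> list[str]:
    """The "workflow manager": order the steps by dependency and run them."""
    order = TopologicalSorter(pipeline).static_order()
    return [run_step(step) for step in order]


for line in run_pipeline(PIPELINE):
    print(line)
```

Real workflow managers add the parts this sketch leaves out, such as parallel execution, retries, containerized environments, and resuming from cached results.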

Do I need a supercomputer to run these pipelines?

While whole-genome pipelines require significant resources, most can be run on modern cloud platforms (AWS, Google Cloud) or standard high-performance clusters, meaning you don’t need to own your own supercomputer to perform high-end genomics.

Can these pipelines analyze data from any sequencing machine?

Open-source pipelines like GATK and Sarek are not tied to a single vendor’s instrument, though they are built primarily around short-read data, so long-read data from PacBio or Oxford Nanopore often needs dedicated tools. Proprietary tools like Dragen or MegaBOLT are highly optimized for their own vendors’ hardware.

How much does it cost to process one human genome?

In the cloud, processing a single whole genome can range from $5 to $25 depending on the pipeline efficiency and the cloud instance type. Tools like Sentieon and Parabricks are specifically designed to push this cost to the lower end.
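As a rough illustration of why both factors matter, the compute-only cost of one sample is approximately runtime multiplied by the hourly instance price. The sketch below uses hypothetical runtimes and rates chosen purely for illustration; they are not quotes from any cloud provider or benchmarks of any pipeline, and storage and data-transfer charges are ignored.

```python
def compute_cost_per_genome(runtime_hours: float, hourly_rate_usd: float) -> float:
    """Compute-only cost of one sample: instance runtime times hourly price."""
    if runtime_hours < 0 or hourly_rate_usd < 0:
        raise ValueError("runtime and rate must be non-negative")
    return runtime_hours * hourly_rate_usd


# Hypothetical inputs for illustration only; not quotes from any provider.
slow_pipeline = compute_cost_per_genome(runtime_hours=24.0, hourly_rate_usd=1.00)
fast_pipeline = compute_cost_per_genome(runtime_hours=2.0, hourly_rate_usd=3.00)

print(f"slower pipeline on a cheaper instance: ${slow_pipeline:.2f}")
print(f"faster pipeline on a pricier instance: ${fast_pipeline:.2f}")
```

Under these assumed numbers, the faster pipeline is cheaper per genome even though its instance costs more per hour, which is the trade-off accelerated tools rely on.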

Are these pipelines secure enough for patient data?

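Yes, when deployed on HIPAA-compliant cloud environments (like Terra) or secure on-premise servers, these pipelines can meet the security requirements for protecting sensitive genetic information. Compliance ultimately depends on how the environment is configured and governed, not on the pipeline alone.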

What is “Variant Calling”?

Variant calling is the process of identifying differences between a patient’s DNA and a standard reference genome. These differences (variants) are the mutations that can lead to disease or explain physical traits.
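The core idea can be shown with a deliberately simplified Python sketch. It assumes two short sequences that are already aligned and equal in length, and it reports only single-base substitutions. Real variant callers work from millions of error-prone reads and use statistical or machine-learning models, so `call_snvs` is a hypothetical teaching helper, not a usable caller.

```python
def call_snvs(reference: str, sample: str) -> list[tuple[int, str, str]]:
    """Return (1-based position, reference base, sample base) for each mismatch.

    Toy assumption: both sequences are already aligned and equal in length,
    so only single-base substitutions can be detected.
    """
    if len(reference) != len(sample):
        raise ValueError("sequences must be the same length")
    return [
        (pos, ref_base, alt_base)
        for pos, (ref_base, alt_base) in enumerate(zip(reference, sample), start=1)
        if ref_base != alt_base
    ]


# Made-up sequences for illustration.
reference = "ACGTACGTAC"
sample = "ACGTTCGTAA"
for pos, ref_base, alt_base in call_snvs(reference, sample):
    print(f"position {pos}: {ref_base} -> {alt_base}")
```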

Can I run these pipelines on a standard laptop?

Small-scale analyses (like bacteria or yeast) can be run on a powerful laptop, but whole human genome analysis typically requires 32 GB to 64 GB of RAM or more and multiple CPU cores, which exceeds the capacity of most consumer laptops.

What is the most important factor in pipeline accuracy?

The “variant caller” algorithm is usually the most critical component. Tools that use machine learning or deep learning (like DeepVariant or Dragen) generally provide the highest accuracy in difficult-to-sequence areas.

Is it hard to switch from one pipeline to another?

Since most pipelines use standardized file formats (BAM, VCF), switching tools is straightforward at the data level, though clinical environments require “technical re-validation” to ensure the new results are consistent with the old ones.
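Because VCF is a tab-separated text format with fixed leading columns (CHROM, POS, ID, REF, ALT, QUAL, FILTER, INFO), a first-pass consistency check between two pipelines can be sketched in plain Python. The records below are made up for illustration, and `parse_vcf_line` and `variant_keys` are hypothetical helpers. A real re-validation would use dedicated comparison tools that normalize variant representation and would be run against truth sets.

```python
from dataclasses import dataclass


@dataclass
class VcfRecord:
    chrom: str
    pos: int
    ref: str
    alts: list[str]
    filter_status: str


def parse_vcf_line(line: str) -> VcfRecord:
    """Parse the eight fixed tab-separated columns of one VCF data line."""
    fields = line.rstrip("\n").split("\t")
    if len(fields) < 8:
        raise ValueError("a VCF data line needs at least 8 columns")
    chrom, pos, _id, ref, alt, _qual, filter_status, _info = fields[:8]
    return VcfRecord(chrom, int(pos), ref, alt.split(","), filter_status)


def variant_keys(lines: list[str]) -> set[tuple[str, int, str, str]]:
    """Collect (chrom, pos, ref, alt) keys, skipping header and blank lines."""
    keys = set()
    for line in lines:
        if line.startswith("#") or not line.strip():
            continue
        record = parse_vcf_line(line)
        for alt in record.alts:
            keys.add((record.chrom, record.pos, record.ref, alt))
    return keys


# Made-up records standing in for the output of an old and a new pipeline.
old_calls = [
    "##fileformat=VCFv4.2",
    "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO",
    "chr1\t100\t.\tA\tG\t50\tPASS\t.",
    "chr1\t200\t.\tC\tT\t40\tPASS\t.",
]
new_calls = [
    "##fileformat=VCFv4.2",
    "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO",
    "chr1\t100\t.\tA\tG\t55\tPASS\t.",
    "chr1\t300\t.\tG\tA\t30\tPASS\t.",
]

old_keys, new_keys = variant_keys(old_calls), variant_keys(new_calls)
print("shared:", sorted(old_keys & new_keys))
print("only in old:", sorted(old_keys - new_keys))
print("only in new:", sorted(new_keys - old_keys))
```

Variants that appear in only one of the two sets are the ones a validation team would review first.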

Do I need to be a programmer to use these tools?

For most high-end pipelines, a basic understanding of the Linux command line and scripting is required. However, platforms like Galaxy and Terra provide visual interfaces that allow non-programmers to run the same professional-grade analyses.

Conclusion

The genomics analysis pipeline landscape is now defined by a clear split between maximum flexibility and maximum performance. While open-source frameworks like nf-core/sarek and GATK remain the scientific foundation of the field, performance-focused tools, whether FPGA-accelerated like Dragen, GPU-accelerated like NVIDIA Parabricks, or CPU-optimized like Sentieon, are essential for organizations that need to scale their operations to the population level. Ultimately, the right choice depends on your specific balance of budget, technical expertise, and the volume of sequencing data you need to transform into insight.