A step-by-step blueprint of tools, techniques, processes, and practices (from a “20 years in the trenches” lens)

1) First, get the terms right (because teams lose months here)

In my experience, most “CD” confusion starts with language:

- Continuous Delivery means every change can be deployed safely at any time: the pipeline keeps the system deployable, but the push to production is still a deliberate decision.

- Continuous Deployment means every change that passes the pipeline automatically goes to production, often many times per day.

That distinction matters because continuous deployment is not a switch you flip—it’s the outcome of disciplined engineering, guardrails, and measurement.

2) What “continuous deployment-ready” actually looks like

When a team is truly doing continuous deployment, a few things are always true:

- Main is always releasable (small changes, frequent merges).

- Deployments are routine, boring, and reversible (rollbacks are standard, not heroic).

- Risk is managed by automation (tests + progressive rollout + observability).

- Performance is measured (you can prove if you’re improving or getting worse).

DORA’s four key metrics are the simplest common language I’ve seen work across organizations: deployment frequency, lead time for changes, change failure rate, and time to restore service (MTTR).

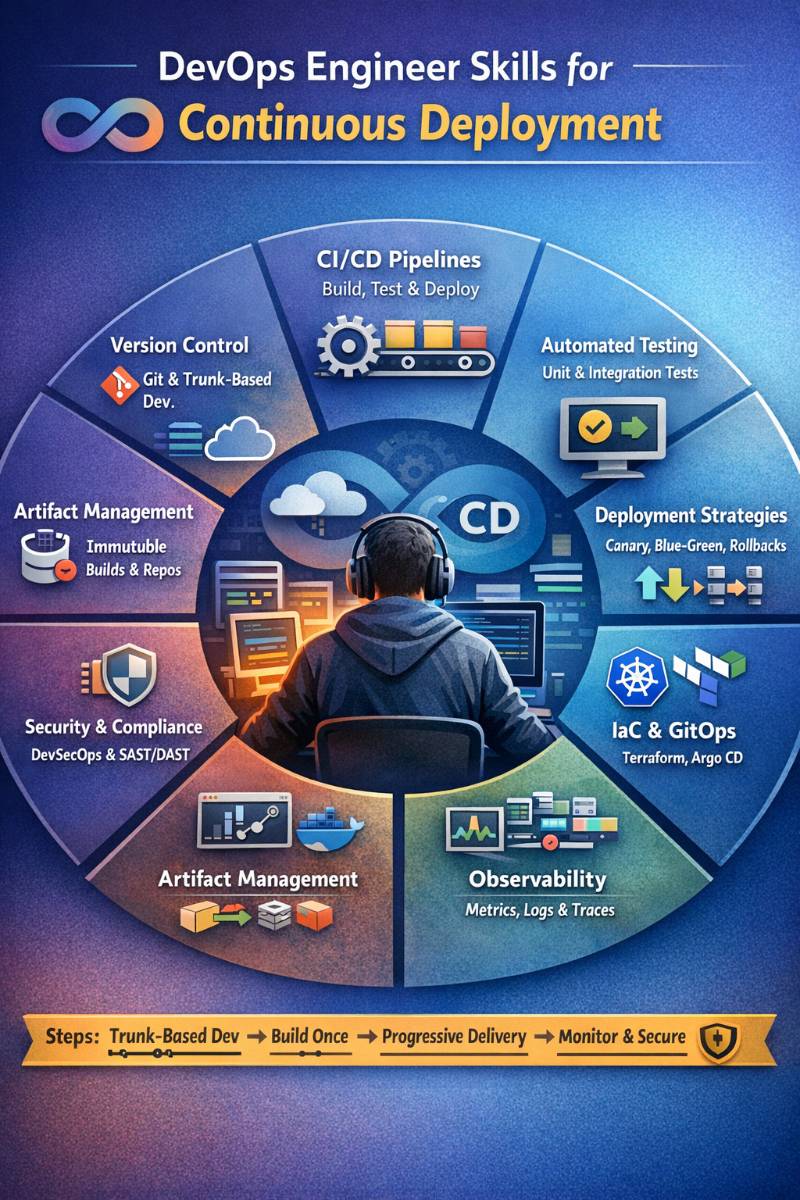

3) The DevOps skill map for continuous deployment

Here’s the skills stack I expect a DevOps engineer (or platform/SRE in many orgs) to be strong in.

A. Version control & integration discipline

- Trunk-based development (or close to it): short-lived branches and frequent merges, which keep integration hell at bay.

- Pull request hygiene: review standards, small PRs, ownership

- Release-friendly practices: feature flags, backward-compatible schema changes

B. Pipeline engineering (CI as a product)

- Pipeline as code, repeatable builds, caching strategy

- Artifact immutability: “build once, promote many”

- Secret handling: ephemeral credentials, least privilege, no secrets in logs

C. Automated testing strategy (gating without slowing teams to death)

- Test pyramid thinking (unit → integration → e2e), contract testing where relevant

- Ephemeral preview environments for PR validation

- Deterministic builds + deterministic tests (flake reduction is a real skill)

D. Deployment & release engineering

- Rolling updates vs canary vs blue/green

- Health checks and readiness/liveness correctness (Kubernetes teams: this is life)

- Automated rollback strategies

- Progressive delivery + safe experimentation

Kubernetes natively supports rolling update patterns in Deployments, and the mechanics matter if you want safe automation.

E. GitOps & environment state management (modern default for K8s)

- GitOps basics: desired state in Git + reconciler applies it

- Tools like Argo CD / Flux for cluster delivery and drift control

- Understanding push vs pull deployment models, RBAC implications

F. Observability & release validation

If you can’t “see” what changed in production, continuous deployment becomes reckless.

- Metrics, logs, traces as first-class signals (not an afterthought)

- Standardized instrumentation approach (OpenTelemetry is the most broadly adopted open framework here).

- SLOs / error budgets: release decisions tied to reliability signals (even if lightweight at first)
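To make the error-budget idea concrete, here is a minimal sketch of the arithmetic, assuming a request-based availability SLO. The function names and the 25% threshold for pausing automatic deploys are illustrative assumptions, not a standard; pick numbers that match your own risk profile.

```python
def error_budget_remaining(slo_target: float, total_requests: int, failed_requests: int) -> float:
    """Return the fraction of the error budget still unspent (negative means overspent)."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be between 0 and 1, e.g. 0.999")
    if total_requests <= 0:
        return 1.0  # no traffic yet, so nothing has been spent
    # A 99.9% SLO over 1,000,000 requests allows roughly 1,000 failures.
    allowed_failures = (1.0 - slo_target) * total_requests
    return 1.0 - (failed_requests / allowed_failures)


def releases_allowed(budget_remaining: float, min_budget: float = 0.25) -> bool:
    """Hypothetical policy: pause automatic deploys when little budget remains."""
    return budget_remaining >= min_budget
```

With a 99.9% target, 1,000,000 requests, and 400 failures, about 60% of the budget remains and deploys continue; at 900 failures only about 10% remains and this policy would pause them.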

G. Security & supply chain integrity (DevSecOps is not optional anymore)

- Pipeline hardening and access control (CI/CD is a juicy target).

- Software supply chain security: provenance, trusted builds, signing, SBOM

- Align to recognized guidance like NIST SP 800-204D for securing DevSecOps CI/CD pipelines (especially cloud-native).

- Understand frameworks like SLSA security levels to mature integrity guarantees over time.

4) Step-by-step approach to build continuous deployment (the blueprint I use)

Step 1 — Define “release policy” and a realistic deployment target

Before tools, decide:

- What is “production” (single region? multi-region? active-active?)

- Allowed risk profile (B2B internal tool ≠ consumer payments system)

- “Definition of Done” for a change to be auto-deployed

- Rollback expectations (time to rollback, blast radius limits)

Then commit to measuring with DORA metrics; otherwise “CD progress” becomes a debate, not a fact.

Step 2 — Make the main branch releasable (this is the real foundation)

Continuous deployment collapses if your integration model is “merge at the end”.

Practices

- Trunk-based development (short-lived branches, frequent merges)

- Enforce fast PR checks: lint + unit tests + minimal integration tests

- Feature flags for incomplete work (ship code dark, enable later)
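As a rough illustration of shipping code dark, the sketch below shows a percentage-based flag check. It is not how LaunchDarkly, Unleash, or Split implement flags; `bucket`, `is_enabled`, and the flag name are hypothetical. The idea is only that a stable hash keeps each user in the same bucket, so raising the percentage widens exposure without a redeploy.

```python
import hashlib


def bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int) -> bool:
    """True when the user's bucket falls inside the current rollout percentage."""
    return bucket(flag_name, user_id) < rollout_percent


def checkout(user_id: str, rollout_percent: int) -> str:
    # The new path is merged and deployed, but stays dark at 0%.
    if is_enabled("new-checkout", user_id, rollout_percent):
        return "new checkout flow"
    return "existing checkout flow"
```

Because the bucket depends only on the flag name and user ID, a user who sees the new flow at 10% keeps seeing it at 50%.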

Tools

- GitHub / GitLab / Bitbucket

- Feature flags: LaunchDarkly / Unleash / Split / Cloud provider equivalents (choose based on scale & governance)

Step 3 — Build once, produce immutable artifacts, and store them properly

If you rebuild the same commit for dev/stage/prod, you’ll eventually deploy something you never tested.

Practices

- One artifact per commit (immutable)

- Semantic versioning or commit-based tags

- Artifact repository becomes part of your supply chain
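A minimal sketch of "build once, promote many", assuming artifacts are identified by a content digest and environments are promoted in a fixed order. `Artifact`, `build`, and `PromotionLog` are hypothetical names for illustration; a real setup would use your registry's digests rather than an in-memory log.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    commit: str
    digest: str  # content hash of the build output


def build(commit: str, content: bytes) -> Artifact:
    """Produce an immutable artifact identified by the hash of its content."""
    return Artifact(commit=commit, digest="sha256:" + hashlib.sha256(content).hexdigest())


class PromotionLog:
    """Tracks which artifact is in each environment and enforces promotion order."""

    ORDER = ("dev", "stage", "prod")

    def __init__(self) -> None:
        self._deployed = {}  # environment name -> Artifact

    def deployed(self, env: str):
        return self._deployed.get(env)

    def promote(self, artifact: Artifact, env: str) -> None:
        index = self.ORDER.index(env)
        if index > 0:
            previous = self.ORDER[index - 1]
            # Only the exact artifact validated in the previous environment may move on.
            if self._deployed.get(previous) != artifact:
                raise ValueError(f"{artifact.digest} was never validated in {previous}")
        self._deployed[env] = artifact
```

A rebuild of the same commit that produces different bytes gets a different digest, so it cannot skip ahead to stage or prod.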

Tools

- Container registry: ECR / GCR / ACR / Harbor / Artifactory

- Artifact repos: Nexus / Artifactory

- SBOM generation (if you’re serious about security posture): CycloneDX tooling, Syft (ecosystem choice)

Step 4 — Engineer the CI pipeline as a product (speed + trust)

This is where many teams create slow, flaky pipelines that engineers end up bypassing.

Practices

- Pipeline as code

- Parallelize test stages

- Cache dependencies

- Fail fast; don’t run expensive tests if build already failed

- Treat flaky tests as incidents (because they destroy trust)
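The "parallelize" and "fail fast" practices above can be sketched in a few lines. This is a toy model, not any CI system's API: `run_pipeline` and the stage names are hypothetical, and each check is a plain callable that returns True on success.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

Check = Callable[[], bool]
Stage = Tuple[str, Dict[str, Check]]


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order; checks inside one stage run in parallel.

    Returns (passed, names of the stages that actually ran).
    """
    executed: List[str] = []
    for stage_name, checks in stages:
        executed.append(stage_name)
        with ThreadPoolExecutor(max_workers=max(1, len(checks))) as pool:
            futures = {name: pool.submit(check) for name, check in checks.items()}
            results = {name: future.result() for name, future in futures.items()}
        if not all(results.values()):
            # Fail fast: later (usually more expensive) stages never start.
            return False, executed
    return True, executed
```

Ordering stages from cheapest to most expensive means a lint or unit-test failure costs seconds rather than a full end-to-end run.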

Tools (common choices)

- GitHub Actions / GitLab CI / Jenkins / CircleCI / Azure DevOps

- For Kubernetes-native CI: Tekton (when you want pipelines running inside clusters)

Step 5 — Standardize environments using IaC and configuration discipline

Continuous deployment dies when environments drift or are hand-patched.

Practices

- Infrastructure as Code (everything repeatable)

- Separate config from code, but keep config versioned

- Use the same deployment mechanism across environments
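One way to picture "separate config from code, but keep config versioned" is a shared base plus small per-environment overlays, which is roughly the model Helm values files and Kustomize overlays encourage. The sketch below is a simplified stand-in, not either tool's behavior; the keys, the image name, and `render` are assumptions for illustration.

```python
from typing import Any, Dict


def merge_config(base: Dict[str, Any], overlay: Dict[str, Any]) -> Dict[str, Any]:
    """Recursively apply overlay values on top of base without mutating either."""
    merged = dict(base)
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = value
    return merged


# Both structures would live in version control next to the deployment code.
BASE = {
    "image": "registry.example.com/shop/api",  # hypothetical image name
    "replicas": 2,
    "resources": {"cpu": "250m", "memory": "256Mi"},
}
OVERLAYS = {
    "stage": {},
    "prod": {"replicas": 6, "resources": {"memory": "512Mi"}},
}


def render(env: str) -> Dict[str, Any]:
    """Same mechanism for every environment; only the overlay differs."""
    return merge_config(BASE, OVERLAYS[env])
```

Keeping overlays small makes the difference between stage and prod reviewable in a single diff, which is most of what environment parity needs.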

Tools

- Terraform / OpenTofu / Pulumi / CloudFormation / Bicep

- Config delivery: Helm / Kustomize

- Secrets: Vault / cloud secrets managers + workload identity patterns

Step 6 — Choose a deployment control plane: Push CD or GitOps CD

This is a key architecture decision:

Option A: Push-based CD

The CI system pushes deployments to the target environment. This works, but it can lead to access sprawl because the CI system needs credentials for every environment it deploys to.

Option B: GitOps (common for Kubernetes)

A cluster reconciler pulls desired state from Git and applies it; Git is the source of truth. Argo CD and Flux, both CNCF projects, are the dominant GitOps tools for Kubernetes.
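The pull model boils down to a loop that compares desired state with live state and closes the gap. The sketch below uses plain dictionaries as stand-ins for Git and the cluster; `plan` and `reconcile` are hypothetical names and do not mirror Argo CD's or Flux's internals. Real reconcilers typically make deletion (pruning) configurable, whereas this toy version always prunes.

```python
from typing import Dict, List, Tuple

State = Dict[str, dict]


def plan(desired: State, live: State) -> List[Tuple[str, str]]:
    """List the actions needed to make live state match desired state."""
    actions: List[Tuple[str, str]] = []
    for name, spec in sorted(desired.items()):
        if name not in live:
            actions.append(("create", name))
        elif live[name] != spec:
            actions.append(("update", name))  # drift or a new version in Git
    for name in sorted(live):
        if name not in desired:
            actions.append(("delete", name))  # resource no longer declared in Git
    return actions


def reconcile(desired: State, live: State) -> State:
    """Apply the plan to an in-memory 'cluster' and return the new live state."""
    new_live = dict(live)
    for action, name in plan(desired, live):
        if action == "delete":
            del new_live[name]
        else:
            new_live[name] = desired[name]
    return new_live
```

Running the loop repeatedly is what gives drift control: a hand-patched resource shows up as an `update` on the next pass and is reverted to what Git declares.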

My bias (from experience):

If you’re on Kubernetes and you care about auditability + drift control, GitOps is usually the cleanest operational model.

Step 7 — Implement safe rollout strategies (progressive delivery)

This is where “continuous deployment” becomes safe enough to be automatic.

Core strategies

- Rolling updates (baseline for many services)

- Blue/Green (fast rollback by switching traffic)

- Canary (small % traffic first, verify, then expand)

- Feature flags (deploy code, control exposure without redeploy)

Progressive delivery is widely recommended because it reduces blast radius and makes rollbacks routine.
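A canary rollout is essentially a list of traffic weights with a health check between steps. The sketch below models that control flow only; `run_canary`, the step weights, and the `healthy_at` callback are assumptions for illustration, and tools such as Argo Rollouts express the same idea declaratively rather than in application code.

```python
from typing import Callable, List


def run_canary(steps: List[int], healthy_at: Callable[[int], bool]) -> List[str]:
    """Walk traffic weights in order; roll back on the first unhealthy step."""
    log: List[str] = []
    for weight in steps:
        log.append(f"set canary weight to {weight}%")
        if not healthy_at(weight):
            # Blast radius is limited to the traffic share of the current step.
            log.append("unhealthy: roll back to 0%")
            return log
    log.append("promote canary to stable")
    return log
```

For example, with steps `[5, 25, 50, 100]` and a problem that only appears at 50% traffic, the rollout stops at the third step and never reaches full exposure.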

Tools

- Kubernetes-native: Argo Rollouts (blue/green, canary)

- Service mesh (when needed): Istio / Linkerd for traffic shaping

- Feature flags: LaunchDarkly / Unleash, etc.

Step 8 — Observability and automated release validation (don’t deploy blind)

If you automate deployments, you must automate confidence.

Practices

- Instrument services with metrics/logs/traces

- Define “release health” signals: error rate, latency, saturation, key business KPIs

- Gate canary promotion on telemetry trends

- Keep rollback automatic for known bad states
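Gating on telemetry can start very simply: compare the canary's window of metrics against the stable baseline and return a verdict. In the sketch below, the `Window` fields, the thresholds, and the three verdict strings are all assumptions chosen for illustration; real values should come from your SLOs and traffic volume.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Telemetry for one version over the same observation period."""
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(
    baseline: Window,
    canary: Window,
    min_requests: int = 500,
    max_error_rate_delta: float = 0.01,
    max_latency_ratio: float = 1.2,
) -> str:
    """Return 'wait', 'rollback', or 'promote' for the current canary step."""
    if canary.requests < min_requests:
        return "wait"  # not enough traffic to judge either way
    if canary.error_rate - baseline.error_rate > max_error_rate_delta:
        return "rollback"
    if baseline.p95_latency_ms > 0 and canary.p95_latency_ms / baseline.p95_latency_ms > max_latency_ratio:
        return "rollback"
    return "promote"
```

Comparing against a live baseline rather than a fixed number keeps the gate meaningful during traffic spikes, when both versions degrade together.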

OpenTelemetry is explicitly designed as a vendor-neutral way to generate and export telemetry (traces/metrics/logs).

Tools

- Metrics: Prometheus + Grafana (or cloud-native equivalents)

- Tracing: Jaeger / Tempo / vendor backends

- Logging: Loki / ELK / cloud logging

- OTel Collector for consistent enrichment and exporting

Step 9 — Secure the pipeline and the supply chain (because attackers love CI/CD)

I’ve seen orgs spend millions on app security and forget the pipeline—until a breach proves CI/CD is part of prod.

Practices

- Least privilege for CI runners, short-lived credentials, isolated build environments

- Dependency scanning, container scanning, IaC scanning

- Artifact signing and provenance (especially for regulated/high-risk systems)

- Protect against pipeline tampering: approvals for workflow changes, restricted secrets, segregated environments
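The signing practice can be reduced to one rule: the deploy step refuses anything whose signature does not match its content. The sketch below uses an HMAC with a shared key purely as a standard-library stand-in; that is an assumption made to keep the example self-contained. Production setups typically use asymmetric signatures and dedicated tooling (Sigstore's cosign is one example), and the function names here are hypothetical.

```python
import hashlib
import hmac


def artifact_digest(content: bytes) -> str:
    """Content hash that identifies exactly what was built."""
    return hashlib.sha256(content).hexdigest()


def sign(digest: str, key: bytes) -> str:
    """Sign the digest in the trusted build step (HMAC used as a stand-in)."""
    return hmac.new(key, digest.encode("utf-8"), hashlib.sha256).hexdigest()


def verify_before_deploy(content: bytes, signature: str, key: bytes) -> bool:
    """Recompute the signature from the bytes about to be deployed and compare."""
    expected = sign(artifact_digest(content), key)
    return hmac.compare_digest(expected, signature)
```

If the artifact is modified anywhere between build and deploy, the recomputed digest changes and verification fails, so tampering is caught before it reaches production.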

OWASP provides CI/CD security best practices and also maintains a “Top 10 CI/CD Security Risks” project; use those as a checklist.

NIST SP 800-204D is directly focused on software supply chain security in DevSecOps CI/CD pipelines for cloud-native systems.

SLSA levels provide a practical maturity path for integrity guarantees.

Step 10 — Close the loop with metrics (continuous deployment without measurement is theater)

Track:

- Deployment frequency

- Lead time

- Change failure rate

- MTTR

DORA defines these metrics and they’ve become the common language across engineering leadership.
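If you record a few timestamps per deployment, all four numbers fall out of simple arithmetic. The sketch below is one possible way to compute them, assuming you track commit time, deploy time, whether the deploy failed, and when service was restored. The `Deployment` record and `dora_summary` are hypothetical, and using the median for lead time and the mean for restore time is a choice, not a DORA requirement.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median
from typing import List, Optional


@dataclass
class Deployment:
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None


def _hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def dora_summary(deployments: List[Deployment], period_days: int) -> dict:
    """Summarize the four metrics for deployments made within one period."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")
    lead_times = [_hours(d.committed_at, d.deployed_at) for d in deployments]
    failures = [d for d in deployments if d.failed]
    restores = [_hours(d.deployed_at, d.restored_at) for d in failures if d.restored_at]
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore_hours": mean(restores) if restores else None,
    }
```

Feeding this from the deploy pipeline itself, rather than from a manually maintained spreadsheet, keeps the numbers honest.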

Then run a monthly “pipeline retrospective”:

- What slowed us down?

- What caused rollbacks?

- What’s noisy/flaky?

- Which guardrail prevented an incident?

That’s how you mature without burning out teams.

5) Toolchain reference architecture (practical, not dogmatic)

| Layer | What you’re solving | Typical tools |
|---|---|---|
| SCM + PR | small merges, reviews, traceability | GitHub / GitLab / Bitbucket |
| CI | build/test/package | GitHub Actions, GitLab CI, Jenkins, CircleCI, Azure DevOps |
| Artifacts | immutable build outputs | ECR/GCR/ACR, Harbor, Artifactory, Nexus |
| IaC | reproducible infra | Terraform/OpenTofu, Pulumi, CloudFormation/Bicep |
| CD | deploy + promote | Argo CD / Flux (GitOps), Spinnaker, Harness, Octopus |
| Progressive delivery | reduce rollout risk | Argo Rollouts, service mesh, feature flags |
| Observability | release confidence | OpenTelemetry, Prometheus, Grafana, ELK/Loki |
| Security | pipeline + supply chain | OWASP CI/CD guidance, NIST 800-204D, SLSA |

6) Common failure patterns (I see these repeatedly)

- “We want continuous deployment” but main isn’t releasable → long-lived branches, huge PRs, merge conflicts. Trunk-based development fixes most of this.

- Pipelines are slow and flaky → engineers bypass them; quality collapses.

- No progressive delivery → every deploy is a big bang; fear grows; CD rolls back to “monthly release”.

- No observability gating → you deploy blind and notice failures via customers. An OpenTelemetry-first approach helps standardize signals.

- Security bolted on late → attackers target CI/CD; supply chain risk spikes. Use OWASP + NIST guidance early.

7) Skill checklist (what to learn, in the right order)

If you’re a DevOps engineer aiming to be “continuous deployment strong,” here’s the order I’d follow:

- Git + trunk-based development fundamentals

- CI pipeline design (fast, repeatable, secure)

- Artifacts + versioning + registries

- IaC basics + environment parity

- Kubernetes deployment mechanics + rollout strategies

- GitOps (Argo CD or Flux)

- Progressive delivery (canary/blue-green/flags)

- Observability with OpenTelemetry + SLO thinking

- CI/CD security + supply chain maturity (NIST, OWASP, SLSA)

- DORA metrics + continuous improvement loop

8) A realistic maturity ladder (so you know where you are)

- Level 0: Manual deployments, tribal knowledge

- Level 1: CI + scripted deploys, basic rollback

- Level 2: Strong test gates + immutable artifacts + IaC parity

- Level 3: GitOps or structured CD control plane + progressive delivery

- Level 4: Automated promotion/rollback based on telemetry + measured improvements via DORA

Closing: the mindset that makes continuous deployment work

Tools matter, but the engineering habits matter more. Continuous deployment happens when teams treat the pipeline like production software, reduce risk with progressive delivery, validate with observability, and measure with DORA metrics. The moment you do those consistently, “deploying to production” stops feeling like an event and starts feeling like a routine.